Many people seem confused about what the GPUs used in embedded (ARM / MIPS) SoCs actually do. I often read comments along the lines of “with lima drivers we should get video decoding in XBMC soon”, and I’ve just received an email reading “My main task is to build a full hd media player based on ffmpeg with hardware decoding acceleration for Linux. Is it possible with mali400mp4?”. So I’ve decided to write a short post to make things a bit clearer.

Contrary to GPUs in the PC world, embedded GPUs only take care of 3D, and sometimes 2D, graphics, and leave video encoding and/or decoding to another block called the Video Processing Unit (VPU). There’s at least one exception: the Broadcom VideoCore IV GPU found in the processor used in the Raspberry Pi apparently takes care of 2D & 3D graphics as well as hardware video decoding & encoding, but this is not the norm.

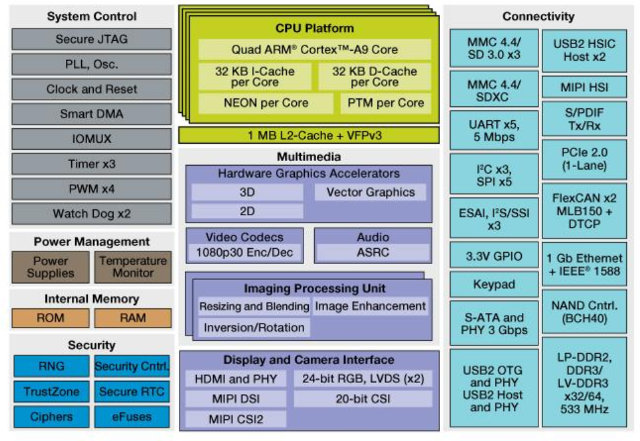

Let’s take an example with Freescale i.MX6 Quad SoC.

In the multimedia section in the middle of the block diagram above you’ll see hardware graphics accelerators, and video codecs:

- 3D via Vivante GC2000 GPU

- 2D via Vivante GC320 GPU

- Vector Graphics (OpenVG 1.1) via Vivante GC355 GPU

- 1080p30 Enc/Dec via a Video Processing Unit (VPU)

The Freescale SoC uses one GPU for 3D, two separate GPUs for 2D composition and vector graphics, and a VPU to handle video in hardware. That means the Vivante GC2000, for example, has nothing to do with hardware video decoding.

Let’s give another short example. AllWinner A20 features a Mali-400 (MP2) GPU with 3D graphics and OpenVG support, a separate 2D engine, and CedarX VPU for hardware video processing. So please, don’t come to ask me if it is possible to use Mali-400 hardware video decoder in Linux. 🙂
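To make the split between blocks concrete, here is a minimal sketch that models the two SoCs above as a table of blocks and the jobs they handle. The block names come from this post; the function tags (`"3d"`, `"2d"`, `"vector"`, `"video"`) and the helper names `blocks_for` and `handles` are made up for illustration and do not correspond to any real driver or API.

```python
# Illustrative model only: block names are taken from the article, while the
# function tags ("3d", "2d", "vector", "video") are hypothetical labels.
SOC_BLOCKS = {
    "Freescale i.MX6 Quad": {
        "Vivante GC2000": {"3d"},
        "Vivante GC320": {"2d"},
        "Vivante GC355": {"vector"},
        "VPU": {"video"},
    },
    "AllWinner A20": {
        "Mali-400 MP2": {"3d", "vector"},
        "2D engine": {"2d"},
        "CedarX VPU": {"video"},
    },
}


def blocks_for(soc, function):
    """Return the names of the blocks in `soc` that handle `function`."""
    return [name for name, funcs in SOC_BLOCKS[soc].items() if function in funcs]


def handles(soc, block, function):
    """Return True if `block` in `soc` is listed as handling `function`."""
    return function in SOC_BLOCKS[soc].get(block, set())


if __name__ == "__main__":
    for soc in SOC_BLOCKS:
        print(soc, "-> video handled by", blocks_for(soc, "video"))
    print("Mali-400 MP2 handles video:",
          handles("AllWinner A20", "Mali-400 MP2", "video"))
```

In this model, asking which block handles video never returns a GPU for either SoC, which is the whole point of the two examples.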

Where it gets a little confusing is that some GPU capabilities can be used to decode video codecs that are not supported by the Video Processing Unit. For example, the Raspberry Pi guys used some features of the VideoCore IV GPU, but not the hardware codecs, to implement VP6, VP8, and MJPEG decoding at standard resolution. More recent GPUs come with Renderscript and OpenCL support, which allows 1080p HEVC (H.265) video decoding using the CPU and GPU together. That’s called GPU compute, and although it works, it won’t be as power efficient as hardware video decoding in the VPU.
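The decision described above can be sketched as a simple lookup, using the Raspberry Pi as the example. The codec sets below only contain the codecs named in this post and in the comments (they are not exhaustive), and the function name `pick_decode_path`, the path labels, and the final fallback to plain CPU decoding are assumptions made for this sketch rather than anything a real player exposes.

```python
# Codec lists come from the article and the author's comment about the
# Raspberry Pi (BCM2835 / VideoCore IV); they are not exhaustive.
HW_CODECS = {"H.264", "MPEG-2", "VC-1"}         # hardware video decoding block
GPU_ASSISTED_CODECS = {"VP6", "VP8", "MJPEG"}   # other GPU features, not the hardware codecs


def pick_decode_path(codec):
    """Pick a decode path for `codec` (hypothetical labels, for illustration)."""
    if codec in HW_CODECS:
        return "vpu"            # most power-efficient option
    if codec in GPU_ASSISTED_CODECS:
        return "gpu-assisted"   # works, but less power efficient than the VPU
    return "cpu"                # assumed fallback: plain software decoding


if __name__ == "__main__":
    for codec in ("H.264", "VP8", "Theora"):
        print(codec, "->", pick_decode_path(codec))
```

The ordering reflects the power-efficiency argument: try the dedicated video block first, and only fall back to the GPU or CPU when the codec is not supported there.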

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.


I am sorry, I am not the sharpest tool in the shed, but what does this all mean? What is your message? I did not quite understand. Also, the Pi does not have hardware acceleration except when playing multimedia via XBMC or omxplayer or something. And one more question: what part of the GPU is used for hardware acceleration? Thanks.

@adem I sort of hope my post was clear. Normally in an ARM SoC you’ve got one or more GPUs to handle 2D and 3D graphics, and a VPU to handle hardware video decoding. The Raspberry Pi uses the Broadcom BCM2835 with the VideoCore IV GPU. This GPU comes with the blocks for 2D & 3D graphics as well as video hardware acceleration (there’s a VPU inside the VideoCore IV). The VideoCore IV GPU supports hardware decoding for H.264, MPEG-2, VC-1, and a few other codecs. In order to support more codecs, the Raspberry Pi team used vector graphics engine…

I wonder where this misunderstanding started, since it’s so widespread? Maybe it’s just the connection with Intel architecture, where the CPU just does CPU stuff, the other chips are just glue, and a graphics card is now the only commercially viable place to put extra functional units like GPU, VPU, physics, or sound DSPs. SoCs have so many functional units these days that even the distinction between ‘GPU’, ‘CPU’, or ‘VPU’ starts to blur, as you demonstrate with the 3 separate parts related to graphics synthesis above – multiple blocks might be involved. And the graphics output stage will likely have…

I had to look up HSA (Heterogeneous Systems Architecture Foundation) – http://hsafoundation.com/

Instead of the statement “So please, don’t come to ask me if it is possible to use Mali-400 hardware video decoder in Linux.”, it would be more helpful to clarify why that question should not be asked at all.

I understand that the main target markets for most popular SoCs, including the A80, are set-top boxes and tablets. Most developers and users focus on “decoding” capabilities/performance, but not on encoding. In some ways, the market for “decoding” is almost saturated, with solutions already competing hard. SoC manufacturers and important reference sites like cnx-software could give a chance to the “encoding” market, dominated by specific FPGAs and ASICs without the benefit of an integrated CPU/GPU for additional pre/post processing. My particular interest is “encoding” VPUs; I hope to see something this year here and at the next CES2015. Happy 2015…

“So please, don’t come to ask me if it is possible to use Mali-400 hardware video decoder in Linux.”

So… is the answer ‘Yes’? I’m confused by this article.

@BUzer

The answer is No for Linux, Android, or any other operating system, since Mali-400 does not have the IP blocks to decode video.