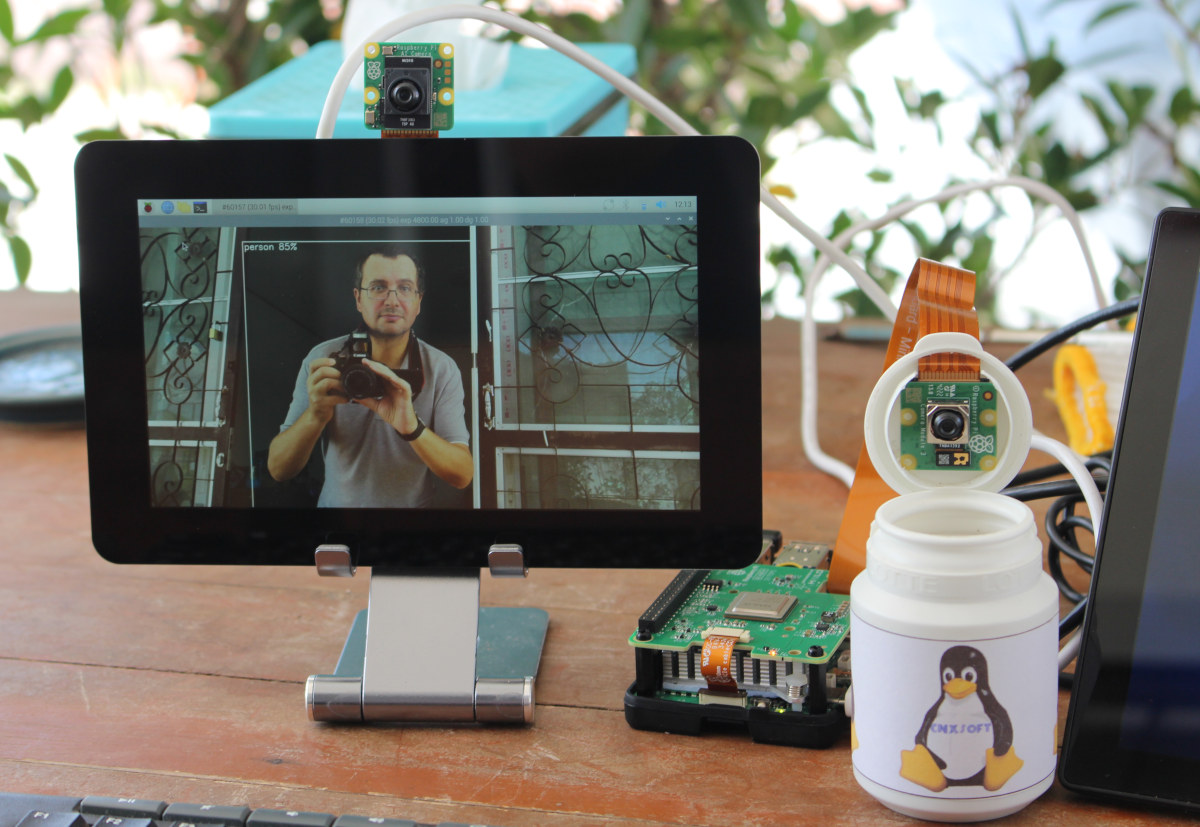

Raspberry Pi recently launched several AI products, including the Raspberry Pi AI HAT+ for the Pi 5 with 13 TOPS or 26 TOPS of performance, and the less powerful Raspberry Pi AI camera, suitable for all Raspberry Pi SBCs with a MIPI CSI connector. The company sent me samples of the AI HAT+ (26 TOPS) and the AI camera for review, as well as other accessories such as the Raspberry Pi Touch Display 2 and Raspberry Pi Bumper, so I’ll report my experience getting started, mostly following the documentation for the AI HAT+ and AI camera. Hardware used for testing: In this tutorial/review, I’ll use a Raspberry Pi 5 with the AI HAT+ and a Raspberry Pi Camera Module 3, while I’ll connect the AI camera to a Raspberry Pi 4. I also plan to use one of the boards with the new Touch Display 2. Let’s go through a […]

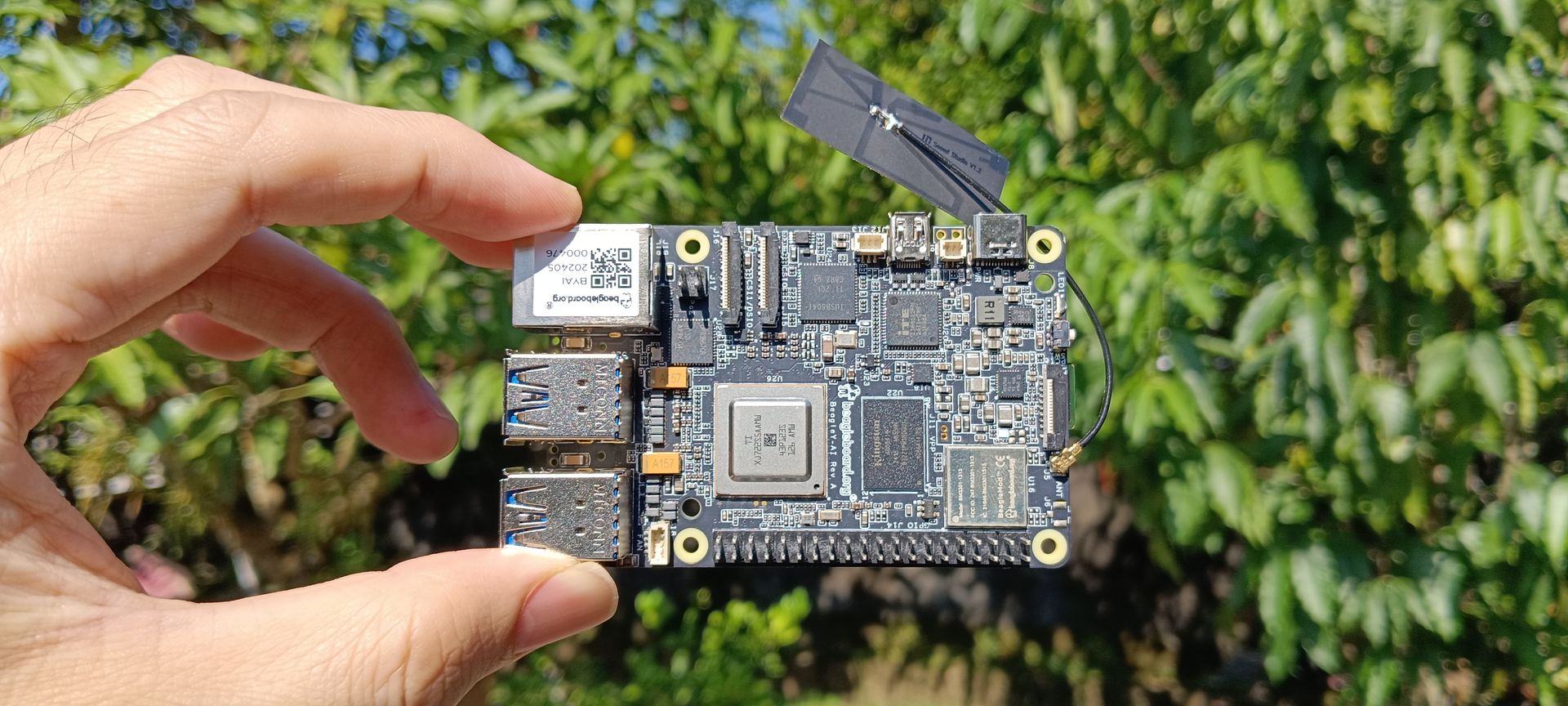

BeagleY-AI SBC review with Debian 12, TensorFlow Lite, other AI demos

Today I’ll be reviewing the BeagleY-AI open-source single-board computer (SBC) developed by BeagleBoard.org for artificial intelligence applications. It is powered by a Texas Instruments AM67A quad-core Cortex-A53 processor running at 1.4 GHz, along with an ARM Cortex-R5F processor running at 800 MHz for handling general tasks and low-latency I/O operations. The SoC is also equipped with two C7x DSP units and a Matrix Multiply Accelerator (MMA) to enhance AI performance and accelerate deep learning tasks. Each C7x DSP delivers 2 TOPS, offering a total of up to 4 TOPS. Additionally, it includes an Imagination BXS-4-64 graphics accelerator that provides 50 GFLOPS of performance for multimedia tasks such as video encoding and decoding. For more information, refer to our previous article on CNX Software or visit the manufacturer’s website. BeagleY-AI unboxing: The BeagleY-AI board was shipped from India in a glossy-coated, printed corrugated cardboard box. Inside, the board is protected by […]

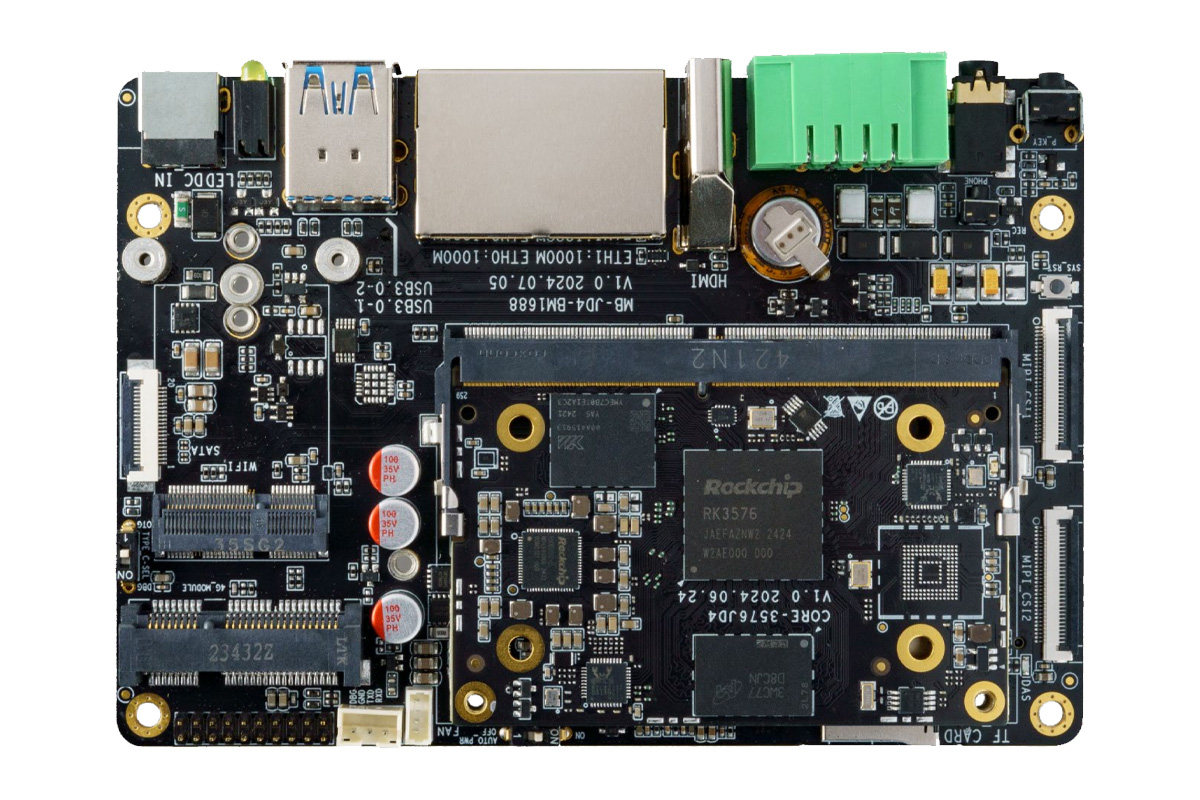

Firefly introduces Rockchip RK3576 SoM and All-in-One carrier board compatible with NVIDIA Jetson Orin Nano and Orin NX modules

Firefly has released a Rockchip RK3576 SoM and development board: the Core-3576JD4 core board with a SO-DIMM edge connector, and the AIO-3576JD4 carrier board. The core board, or SoM, is built around an octa-core 64-bit processor with a Mali G52 MC3 GPU and a 6 TOPS NPU, so it can handle demanding AI tasks while maintaining low power consumption. The AIO-3576JD4 is a full-fledged carrier board with a wide range of on-board interfaces, such as dual Gigabit Ethernet ports, MIPI-CSI, HDMI 2.1, USB 3.0, USB 2.0, USB Type-C, a Phoenix connector for serial, dual-row pin headers (SPI, I2C, Line in, and Line out), an M.2 socket for 5G, a mini PCIe socket for 4G LTE, an M.2 socket for WiFi 6/BT 5.2, and a third M.2 socket for SATA/PCIe NVMe SSD expansion. RK3576 AI SoM and dev board specifications: Core-3576JD4 specifications: SoC – Rockchip RK3576; CPU – Octa-core CPU […]

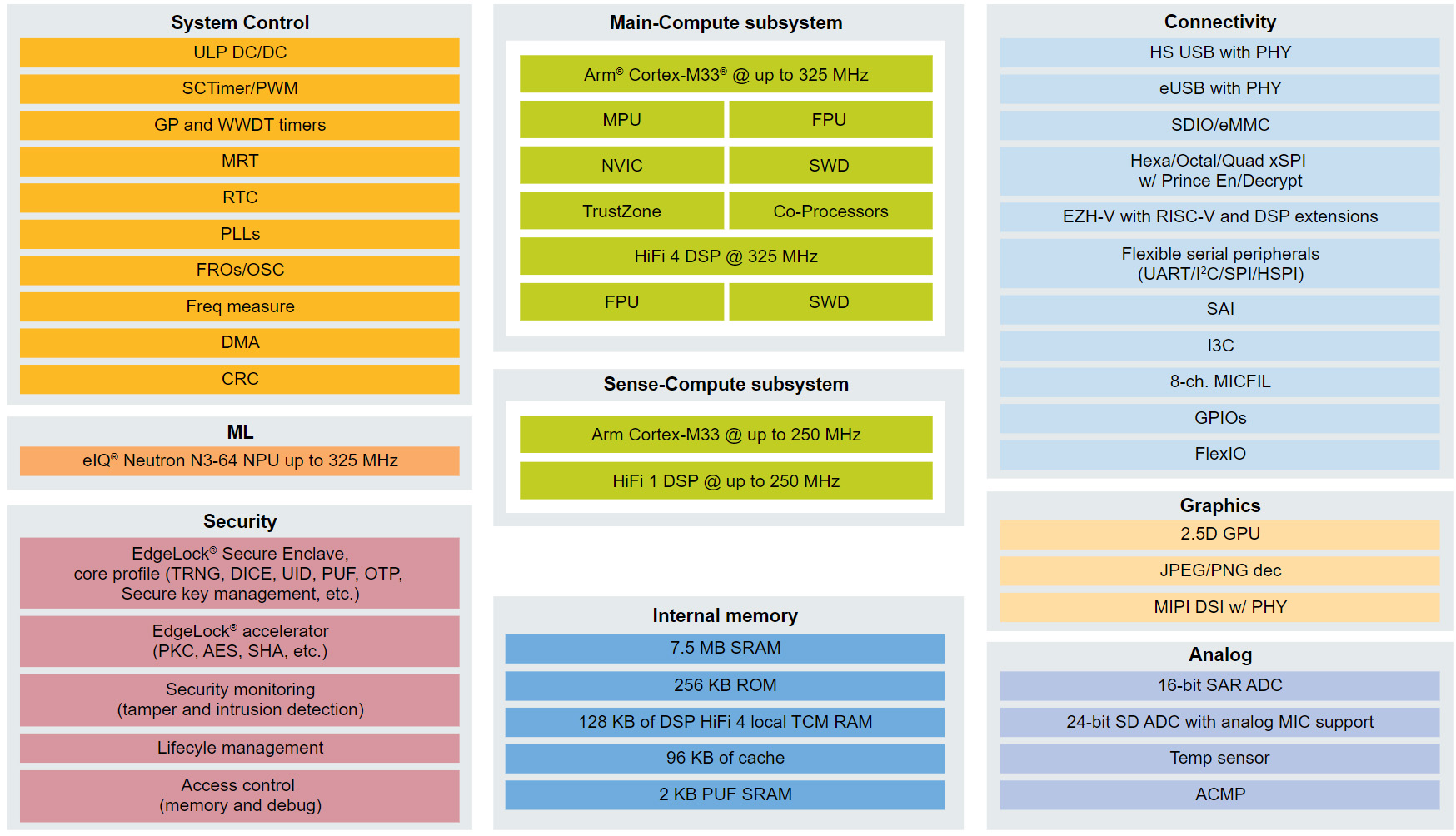

NXP i.MX RT700 dual-core Cortex-M33 AI Crossover MCU includes eIQ Neutron NPU and DSPs

NXP has recently announced the i.MX RT700 AI crossover MCU, following the i.MX RT600 series release in 2018 and the i.MX RT500 series introduction in 2021. The new i.MX RT700 crossover MCU features two Cortex-M33 cores: a main core clocked at 325 MHz with a Tensilica HiFi 4 DSP, and a secondary 250 MHz core with a low-power Tensilica HiFi 1 DSP for always-on sensing tasks. Additionally, it integrates a powerful eIQ Neutron NPU with an upgraded 7.5 MB of SRAM and a 2D GPU with a JPEG/PNG decoder. These features make this device suitable for applications including AR glasses, hearables, smartwatches, wristbands, and more. NXP i.MX RT700 specifications: Compute subsystems: Main Compute Subsystem: Cortex-M33 @ up to 325 MHz with Arm TrustZone, built-in Memory Protection Unit (MPU), a floating-point unit (FPU), a HiFi 4 DSP and supported by NVIC for interrupt handling and SWD […]

reCamera modular AI camera features SG2002 RISC-V AI SoC, supports interchangeable image sensors and baseboards

Seeed Studio’s reCamera AI camera is a modular RISC-V smart camera system for edge AI applications based on the SOPHGO SG2002 SoC. The camera is made up of three boards: the Core board, the Sensor board, and the Baseboard. The Core board hosts the processor, storage, and optional Wi-Fi. The Sensor board offers a choice of image sensors, and the Baseboard provides various connectivity options, including USB Type-C, UART, microSD, and optional PoE and CAN bus. At the time of writing, the company has released the C1_2002w and C1_2002 core boards. The C1_2002w core board includes eMMC storage, Wi-Fi, and BLE modules, and the C1_2002 features extra SDIO and UART connectivity, but no Wi-Fi. Both boards use the SOPHGO SG2002 tri-core processor and can be paired with various camera sensors for applications such as robotics, healthcare, smart home, as well as building and industrial automation. […]

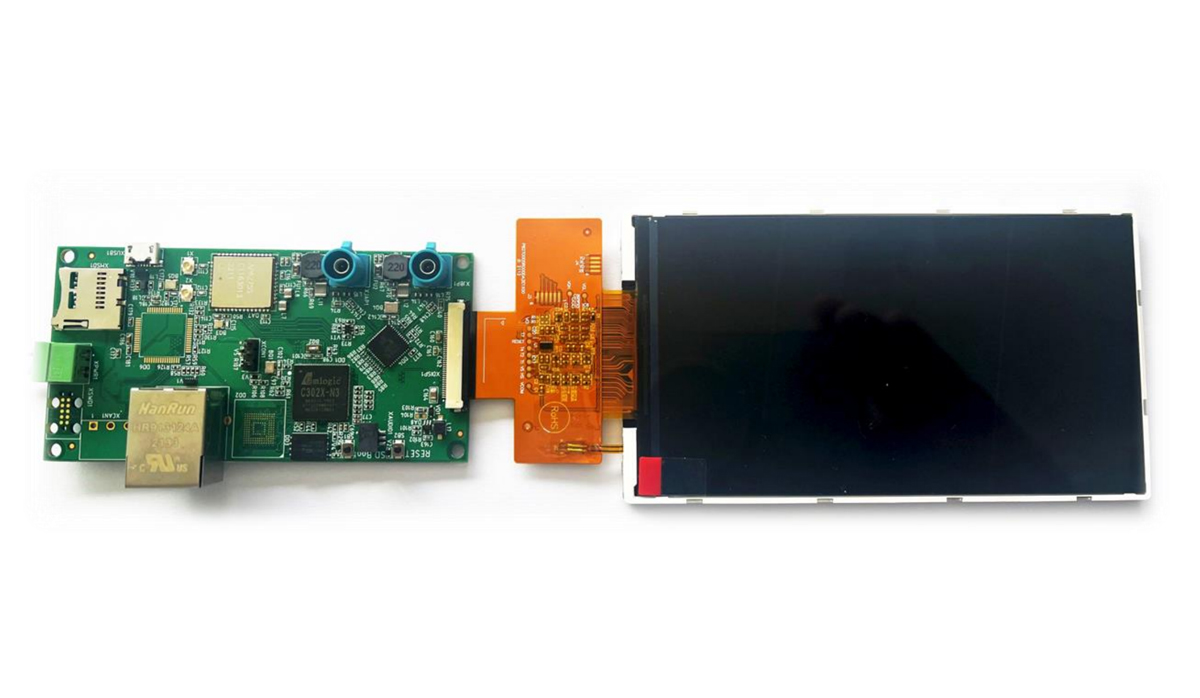

Amlogic C302X embedded AI camera kit features GMSL2 connectors, Ethernet, WiFi, BLE, and CAN Bus interface

The DAB Embedded CAMKIT-AML302-IMX462 is a compact AI camera kit built around the Amlogic C302X processor with 256MB DDR3 on-chip (SiP) and designed for image processing and machine learning applications. The board also includes GMSL2 interfaces that add support for a range of Sony camera sensors, including global shutter and 3D options. Additionally, it has 100Mbit LAN, Wi-Fi, BLE, and CAN bus connectivity, which makes this board useful for various computer vision applications. Previously, we have written about similar camera kits, including the Pivistation 5, the OpenCV AI Kit Lite, the e-con Systems 360° Camera Kit, and many more. Feel free to check those out if you are interested in the topic. DAB Embedded CAMKIT-AML302-IMX462 specifications: Processor – Amlogic C302X; CPU – Dual Core ARM Cortex-A35; 2 TOPS Neural Processing Unit (NPU); OpenCV (Hardware Accelerator); Video Encoder – H.264/H.265/JPEG; ISP – High-performance, up to 5MP with HDR; System Memory – 256MB […]

The Open Home Foundation adds HACS, microWakeWord, and Music Assistant open-source projects

The HACS, microWakeWord, and Music Assistant projects have joined the Open Home Foundation, launched a few months ago to manage open-source projects related to Home Assistant and Smart Home applications in general, separating them from Nabu Casa’s commercial activities. Note that the HACS, microWakeWord, and Music Assistant projects will not operate directly under the Open Home Foundation’s umbrella; they are external projects that the foundation collaborates on, since it believes they are worth investing in to further develop the Smart Home ecosystem. Let’s have a quick look at the three projects. Home Assistant Community Store (HACS) is the most used custom integration for Home Assistant and allows users to easily install custom integrations, cards, and themes. Music Assistant gives users control over their media players and audio files, handling both local music collections and music streaming services, so that users can play any tune anywhere in their house without restrictions. […]

Firefly ROC-RK3576-PC low-profile Rockchip RK3576 SBC supports AI models like Gemma-2B, LlaMa2-7B, ChatGLM3-6B

Firefly ROC-RK3576-PC is a low-power, low-profile SBC built around the Rockchip RK3576 octa-core Cortex-A72/A53 SoC, which we also find in the Forlinx FET3576-C, the Banana Pi BPI-M5, and Mekotronics R57 Mini PC. In terms of power and performance, this SoC falls in between the Rockchip RK3588 and RK3399 SoCs and can be used for AIoT applications thanks to its 6 TOPS NPU. Termed a “mini computer” by Firefly, this SBC supports up to 8GB LPDDR4/LPDDR4X memory and 256GB of eMMC storage. Additionally, it offers Gigabit Ethernet, WiFi 5, and Bluetooth 5.0 for connectivity. An M.2 2242 PCIe/SATA socket and microSD card can be used for storage, and the board also offers HDMI and MIPI DSI display interfaces, two MIPI CSI camera interfaces, a few USB ports, and a 40-pin GPIO header. Firefly ROC-RK3576-PC specifications: SoC – Rockchip RK3576; CPU – 4x Cortex-A72 cores at 2.2GHz, 4x Cortex-A53 cores at 1.8GHz; Arm Cortex-M0 MCU at 400MHz; GPU […]