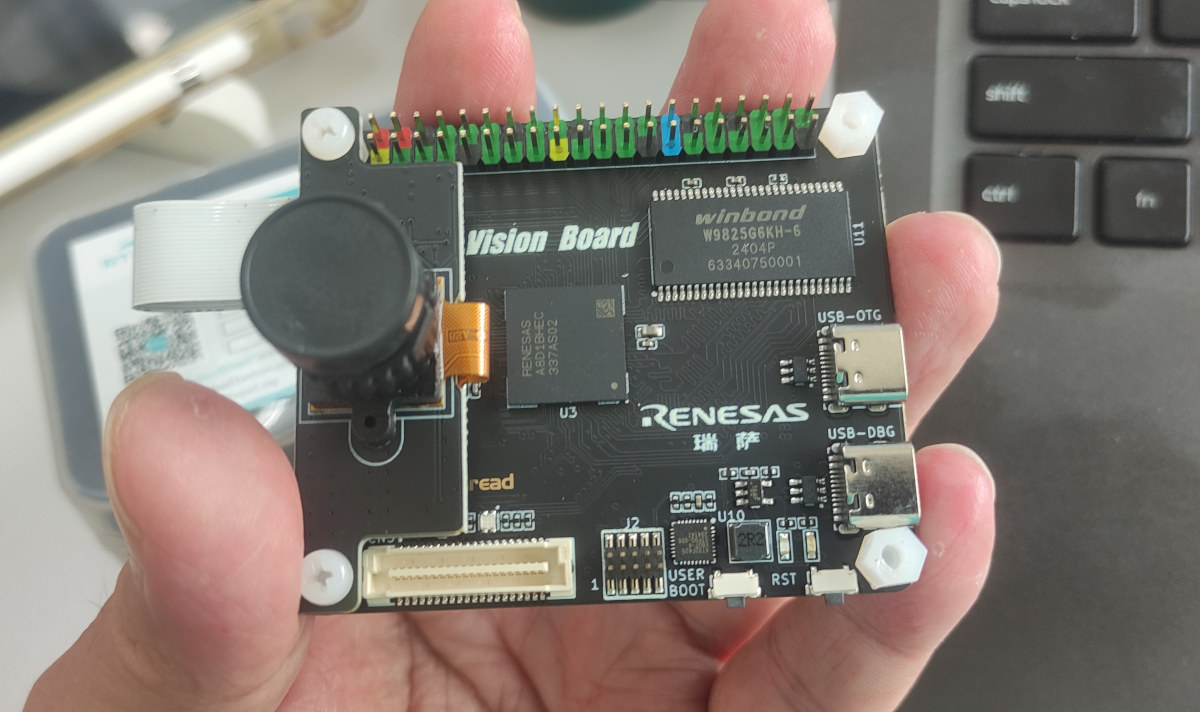

It’s already Friday, and the fifth prize of CNX Software’s Giveaway Week 2024 will be the RT-Thread Vision board equipped with a Renesas RA8D1 Arm Cortex-M85 microcontroller, a camera, an optional LCD display, and a 40-pin GPIO header. The board serves as an evaluation platform for the Renesas RA8D1 MCU and the RT-Thread real-time operating system. As its name implies, it’s mainly designed for computer vision applications, leveraging the Helium MVE (M-Profile Vector Extension) for digital signal processing (DSP) and machine learning (ML) workloads. I haven’t reviewed it myself. Instead, I received two samples from RT-Thread, which sent them to me by mistake, so I’ll give them away here and on the Thai website. However, Supachai reviewed the board last June, testing it with OpenMV and running a few benchmarks. The Helium MVE did not seem to be utilized in OpenMV at that time (June […]

OpenUC2 10x is an ESP32-S3 portable microscope with AI-powered real-time image analysis

Seeed Studio has recently launched the OpenUC2 10x AI portable microscope built around the XIAO ESP32-S3 Sense module. Designed for education, environmental research, health monitoring, and prototyping applications, this microscope features an OV2640 camera with 10x magnification, precise motorized focusing, high-resolution imaging, and real-time TinyML processing for image analysis. The microscope is modular and open-source, making it easy to customize and expand its features using 3D-printed parts, motorized stages, and additional sensors. It supports Wi-Fi connectivity, has a durable body, and is powered over USB-C, while its swappable objectives make it suitable for various applications. We have previously written about similar portable microscopes such as the ioLight microscope and the KoPa W5 Wi-Fi Microscope, and Jean-Luc also tested a cheap USB microscope to read the part numbers of components. Feel free to check those out if you are looking for a cheap microscope. OpenUC2 10x specifications: Wireless MCU – Espressif Systems ESP32-S3 CPU […]

Particle launches Photon 2 Realtek RTL8721DM dual-band WiFi and BLE IoT board, Particle P2 module

Particle has launched the Photon 2 dual-band WiFi and BLE IoT board powered by a 200 MHz Realtek RTL8721DM Arm Cortex-M33 microcontroller, as well as the corresponding Particle P2 module for integration into commercial products. The original “Spark Photon” WiFi IoT board was launched in 2014 with an STM32 MCU and a BCM43362 wireless module, but both the market and the company name have changed since then, and Particle has now launched the Photon 2 board and P2 module with a more modern Cortex-M33 WiFi & BLE microcontroller supporting security features such as Arm TrustZone. Particle Photon 2 specifications: Wireless MCU – Realtek RTL8721DM CPU – Arm Cortex-M33 core @ 200 MHz Memory – 4.5MB embedded SRAM, of which 3072 KB (3 MB) is available to user applications Connectivity – Dual-band WiFi 4 up to 150Mbps and Bluetooth 5.0 Security – Hardware Engine, Arm TrustZone-M, Secure Boot, SWD Protection, Wi-Fi WEP, […]

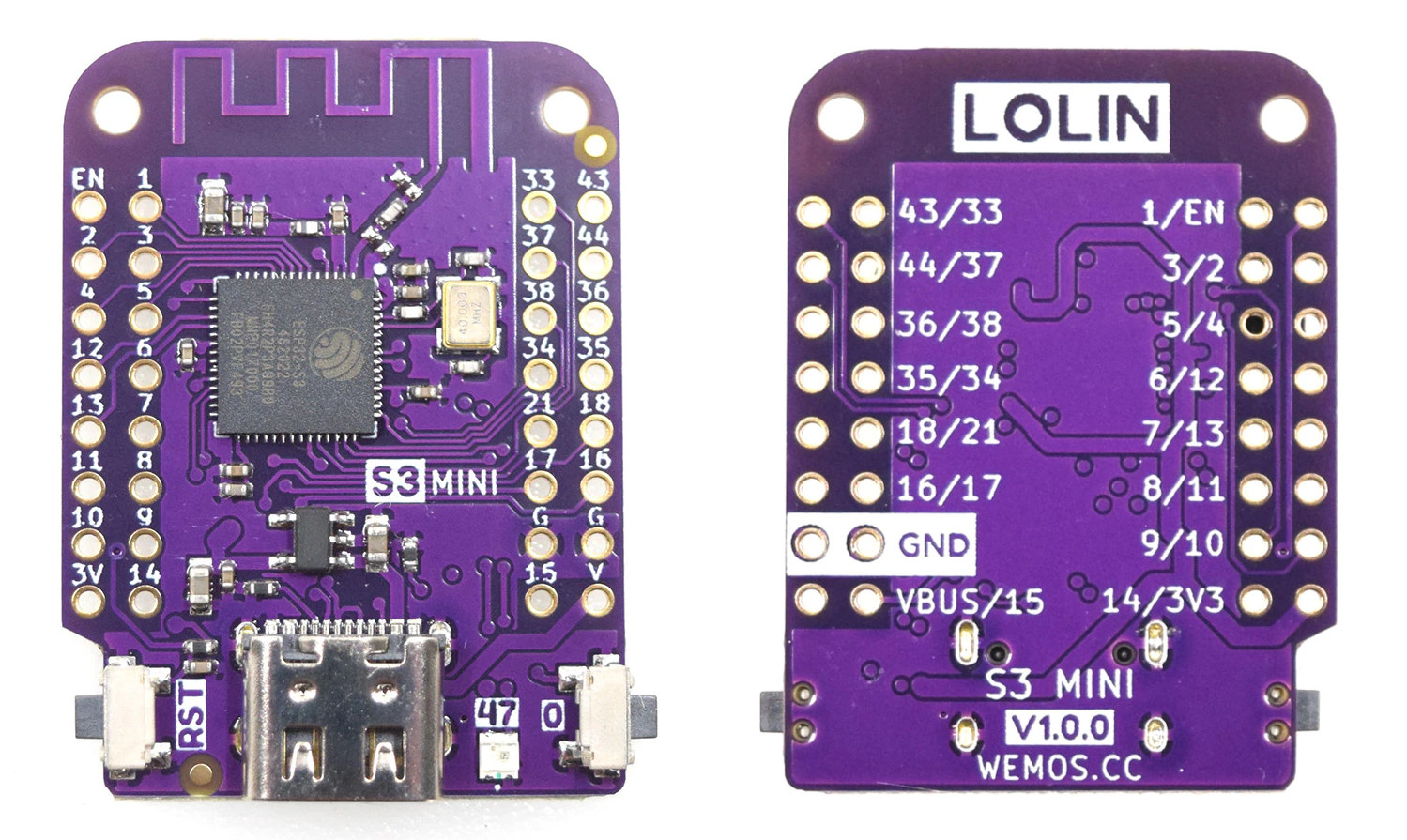

Lolin S3 Mini – Tiny $5 ESP32-S3 board follows Wemos D1 Mini form factor

LOLIN S3 Mini is a tiny ESP32-S3 WiFi and Bluetooth IoT development board that follows the Wemos D1 Mini form factor and supports its equally tiny stackable shields to add relays, displays, sensors, and so on. Wemos/LOLIN introduced their first ESP32-S3 board, the LOLIN S3, last year with plenty of I/Os and an affordable $7 price tag. But I prefer the company’s Mini form factor because of its size and the ability to pick add-on boards to easily add a range of features to your projects. So I’m pleased to find out the company has now launched the LOLIN S3 Mini, following the ESP32-C3-powered LOLIN C3 Mini board unveiled in March 2022. LOLIN S3 Mini specifications: WiSoC – Espressif Systems ESP32-S3FH4R2 CPU – dual-core Tensilica LX7 @ up to 240 MHz with vector instructions for AI acceleration Memory – 512KB RAM, 2MB PSRAM Storage – 4MB QSPI […]

Sony IMX500-based smart camera works with AITRIOS software

Raspberry Pi recently received a strategic investment from Sony (Semiconductor Solutions Corporation) to provide a development platform for the company’s edge AI devices leveraging the AITRIOS platform. We don’t have many details about the upcoming Raspberry Pi / Sony device, so I decided to look into the AITRIOS platform instead. Currently, there’s a single hardware platform, the LUCID Vision Labs SENSAiZ SZP123S-001 smart camera based on the Sony IMX500 intelligent vision sensor, designed to work with Sony AITRIOS software. LUCID SENSAiZ SZP123S-001 smart camera specifications: Imaging sensor – 12.33MP Sony IMX500 progressive scan CMOS sensor with rolling shutter, built-in DSP, and dedicated on-chip SRAM to enable high-speed edge AI processing Focal Length – 4.35 mm Camera Sensor Format – 1/2.3″ Pixels (H x V) – 4,056 x 3,040 Pixel Size, H x V – 1.55 x 1.55 μm Networking – 10/100M RJ45 port Power Supply – PoE+ via […]
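The SENSAiZ specifications above are internally consistent, and a quick bit of arithmetic shows how the figures relate: the pixel count gives the 12.33MP rating, and multiplying by the 1.55 μm pixel pitch gives the physical size of the active area. The sketch below only uses numbers quoted in the spec list; the function name `sensor_geometry` is a hypothetical helper for illustration, not part of any LUCID or Sony API.

```python
import math

# Figures quoted in the SENSAiZ SZP123S-001 specifications
H_PIXELS = 4056
V_PIXELS = 3040
PIXEL_PITCH_UM = 1.55  # square pixels: 1.55 x 1.55 µm

def sensor_geometry(h_px, v_px, pitch_um):
    """Hypothetical helper: return (megapixels, width_mm, height_mm, diagonal_mm)
    of the active pixel area, assuming square pixels with no gaps."""
    megapixels = h_px * v_px / 1e6
    width_mm = h_px * pitch_um / 1000   # µm -> mm
    height_mm = v_px * pitch_um / 1000  # µm -> mm
    diagonal_mm = math.hypot(width_mm, height_mm)
    return megapixels, width_mm, height_mm, diagonal_mm

mp, w, h, d = sensor_geometry(H_PIXELS, V_PIXELS, PIXEL_PITCH_UM)
print(f"{mp:.2f} MP, {w:.2f} x {h:.2f} mm, diagonal {d:.2f} mm")
# prints: 12.33 MP, 6.29 x 4.71 mm, diagonal 7.86 mm
```

A diagonal of roughly 7.9 mm is in the same ballpark as what one would expect from a 1/2.3″ optical format, although optical-format names are nominal conventions rather than exact measurements.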

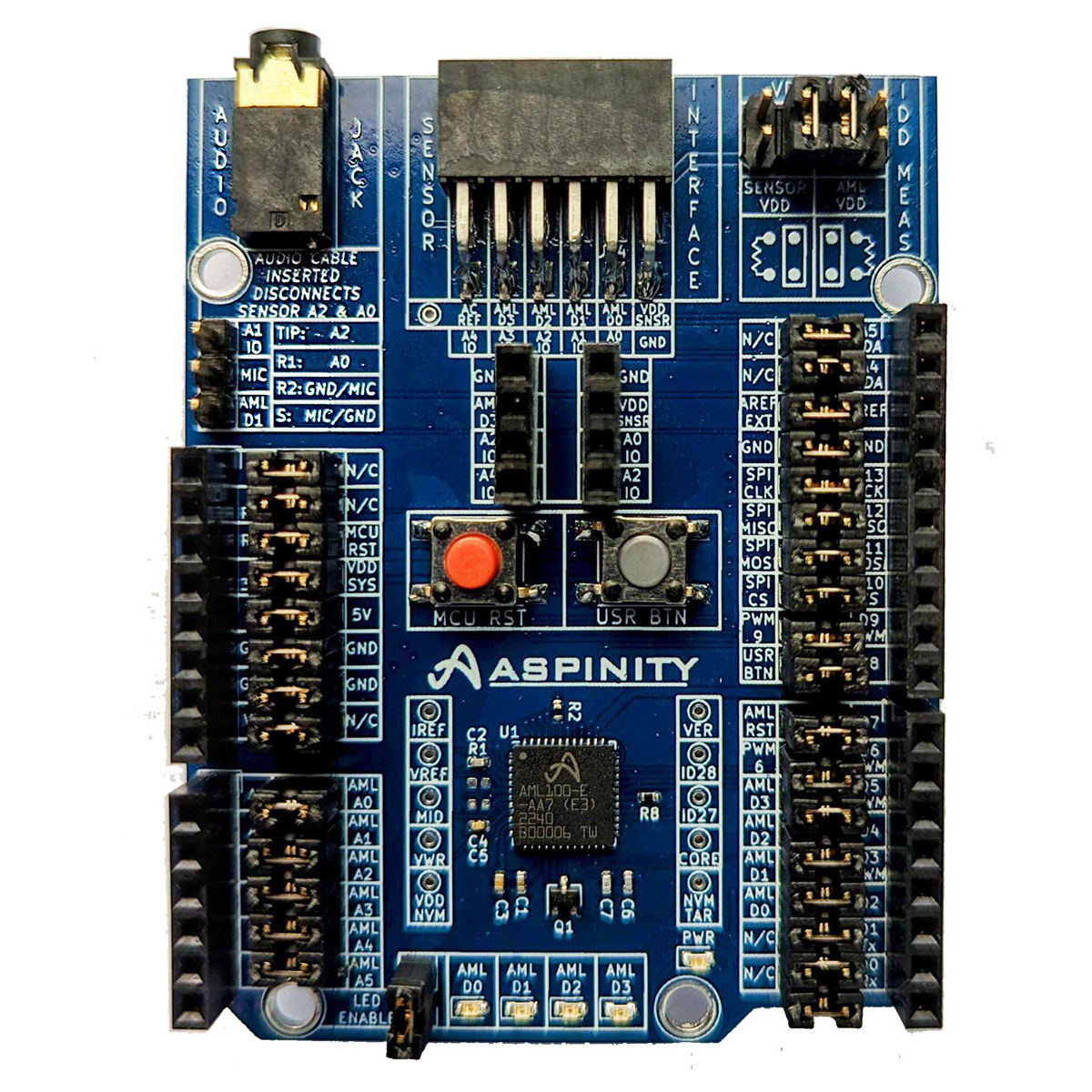

Aspinity AB2 AML100 Arduino Shield supports ultra-low-power analog machine learning

Aspinity AB2 AML100 is an Arduino Shield based on the company’s AML100 analog machine learning processor, which reduces power consumption by 95 percent compared to equivalent digital ML processors. The shield works with the Renesas Quick-Connect IoT platform or other development platforms with Arduino Uno Rev3 headers. The AML100 analog machine learning processor is said to consume just 15µA for sensor interfacing, signal processing, and decision-making, and operates entirely within the analog domain, offloading most of the work from the microcontroller, which can stay in its lowest-power state until an event or anomaly is detected. Aspinity AB2 AML100 Arduino Shield specifications: ML chip – Aspinity AML100 analog machine learning chip Software programmable analogML core with an array of configurable analog blocks (CABs) with non-volatile memory and analog signal processing Natively processes analog data Near-zero power for inference and event detection Consumes <20µA when always-sensing Reduces analog data by 100x Supports up […]

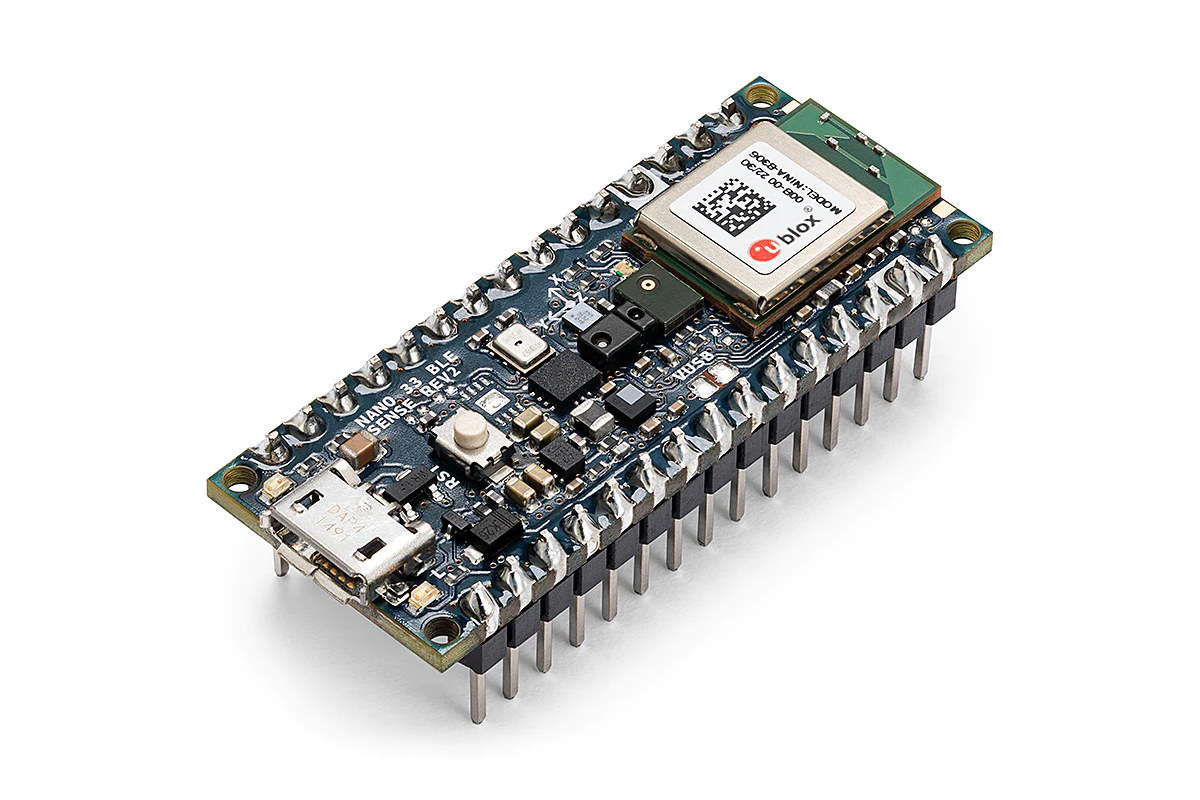

Arduino Nano 33 BLE Sense Rev2 switches to BMI270 & BMM150 IMUs, HS3003 temperature & humidity sensor

Arduino Nano 33 BLE Sense Rev2 is a new revision of the Nano 33 BLE Sense machine learning board with basically the same functionality, but some sensors have changed along with some other modifications “to improve the experience of the users”. The main changes are that the STMicro LSM9DS1 9-axis IMU has been replaced by two IMUs from Bosch Sensortec, namely the BMI270 6-axis accelerometer and gyroscope and the BMM150 3-axis magnetometer; a Renesas HS3003 temperature & humidity sensor has taken the place of an STMicro HTS221; and the microphone is now an STMicro MP34DT06JTR instead of an MP34DT05. All of the replaced parts are from STMicro, so it’s quite possible the second revision of the board was mostly meant to address supply issues. Arduino Nano 33 BLE Sense Rev2 (ABX00069) specifications: Wireless Module – U-blox NINA-B306 module powered by a Nordic Semi nRF52840 Arm Cortex-M4F microcontroller @ 64MHz with 1MB […]

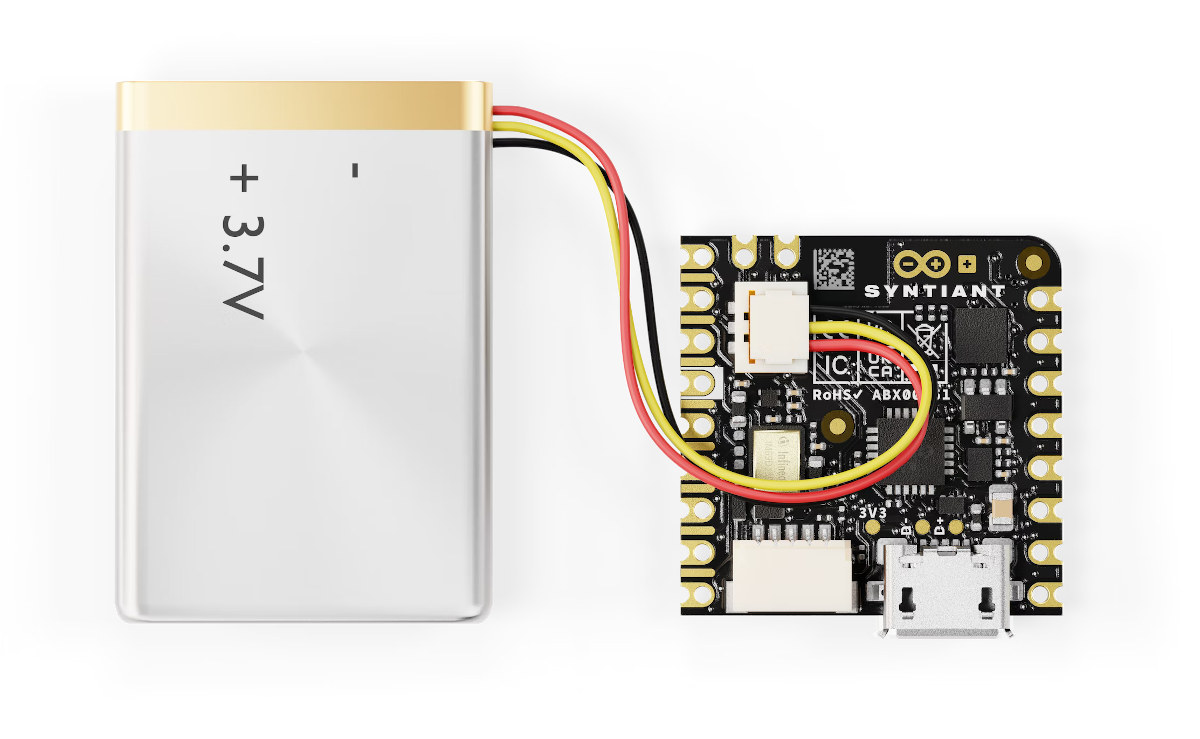

Arduino Nicla Voice enables always-on speech recognition with Syntiant NDP120 “Neural Decision Processor”

Nicla Voice is the latest board from the Arduino PRO family, offering always-on speech recognition thanks to the Syntiant NDP120 “Neural Decision Processor” with a neural network accelerator, a HiFi 3 audio DSP, and a Cortex-M0+ microcontroller core. The board also includes a Nordic Semi nRF52832 MCU for Bluetooth LE connectivity. Arduino previously launched the Nicla Sense with Bosch Sensortec’s motion and environmental sensors, followed by the Nicla Vision for machine vision applications, and the company is now adding audio and voice support for TinyML and IoT applications with the Nicla Voice. Nicla Voice specifications: Microprocessor – Syntiant NDP120 Neural Decision Processor (NDP) with one Syntiant Core 2 ultra-low-power deep neural network inference engine, 1x HiFi 3 Audio DSP, 1x Arm Cortex-M0 core up to 48 MHz, 48KB SRAM Wireless MCU – Nordic Semiconductor nRF52832 Arm Cortex-M4 microcontroller @ 64 MHz with 512KB Flash, 64KB RAM, Bluetooth […]