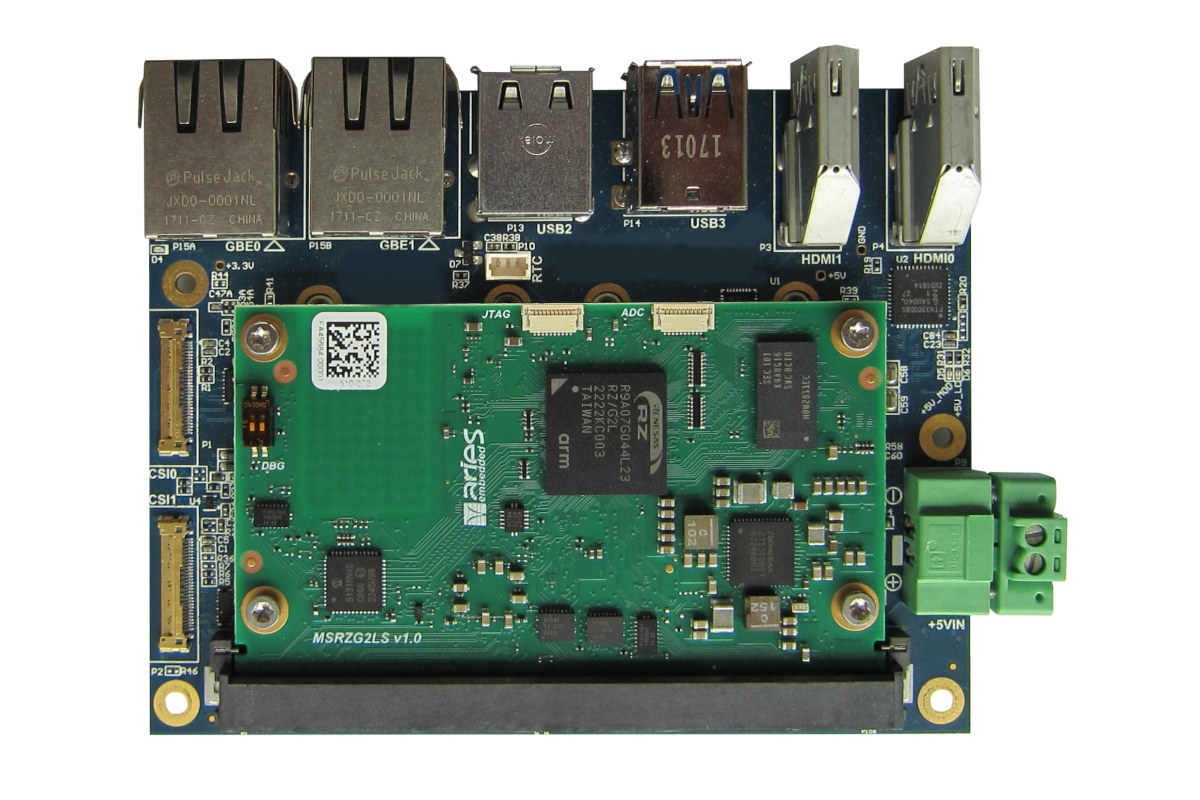

ARIES Embedded has recently launched the MRZG2LS and MRZV2LS SMARC-compliant system-on-modules (SoMs). The MRZG2LS is powered by a Renesas RZ/G2L dual-core Cortex-A55/M33 microprocessor with an Arm Mali-G31 GPU and an H.264 video codec, while the MRZV2LS uses the similar Renesas RZ/V2L MPU, which adds a built-in ‘DRP-AI’ accelerator for vision applications. These are the company’s first SMARC modules, and they are well-suited for applications such as entry-class industrial human-machine interfaces (HMIs), embedded vision, edge artificial intelligence (edge-AI), real-time control, industrial Ethernet connectivity, and embedded devices with video capabilities. ARIES Embedded MRZG2LS and MRZV2LS key features and specifications:

- SoC – Renesas RZ/G2L or RZ/V2L with
  - Application CPU – Single or dual Arm Cortex-A55 up to 1.2GHz
  - Real-time core – Arm Cortex-M33
  - GPU – Arm Mali-G31
  - VPU – H.264 codec
  - AI accelerator – DRP-AI on Renesas RZ/V2L only (MRZV2LS SoM)
- System Memory – 512MB to 4GB DDR4 RAM
- Storage – SPI NOR flash, 4GB to 64GB […]

Review of CM4 XGO Lite – A Raspberry Pi CM4 based smart robot dog with a robotic arm

The CM4 XGO Lite is a smart robot dog based on the Raspberry Pi CM4 system-on-module and designed for learning to program with Blockly, Python, and ROS. This four-legged robot also features a 3-joint robotic arm and a gripper mounted on its back that can pick up light objects. The Raspberry Pi CM4 module drives the LCD screen and camera and handles AI and computer vision processing, while each joint is driven by a servo motor, and a 6-axis tilt sensor keeps walking and movement stable. We already covered the capabilities of the CM4 XGO Lite, aka XGO Lite 2, when it was announced earlier this year, so we won’t go into detail here, but highlights include support for faster edge AI computing applications such as face detection and object classification, omnidirectional movement, six-dimensional posture control, posture stability, and multiple motion gaits. Robot […]

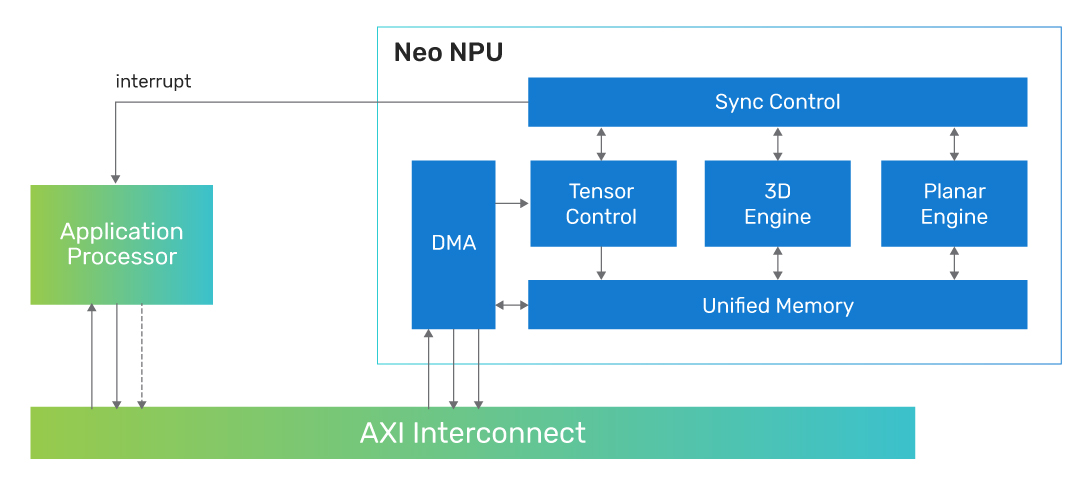

Cadence Neo NPU IP scales from 8 GOPS to 80 TOPS

Cadence Neo NPU (Neural Processing Unit) IP delivers 8 GOPS to 80 TOPS in a single-core configuration and can be scaled to multicore configurations for hundreds of TOPS. The company says the Neo NPUs deliver high AI performance and energy efficiency with optimal PPA (Power, Performance, Area) and cost points for next-generation AI SoCs targeting intelligent sensors, IoT, audio/vision, hearables/wearables, mobile vision/voice AI, AR/VR, and ADAS. Some highlights of the new Neo NPU IP include:

- Scalability – Single-core solution scalable from 8 GOPS to 80 TOPS, with further extension to hundreds of TOPS with multicore
- Supports 256 to 32K MACs per cycle to allow SoC architects to meet power, performance, and area (PPA) tradeoffs
- Works with DSPs, general-purpose microcontrollers, and application processors
- Support for Int4, Int8, Int16, and FP16 data types for CNN, RNN, and transformer-based networks
- Up to 20x higher performance than the first-generation Cadence AI IP, with […]
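As a rough sanity check on how the MAC count relates to the headline TOPS figure, peak throughput is conventionally counted as two operations (a multiply and an add) per MAC per cycle. The sketch below assumes that convention and a hypothetical 1.25 GHz clock; Cadence does not state the clock behind its 80 TOPS figure in the announcement, so the numbers are illustrative only.

```python
def peak_tops(macs_per_cycle: int, clock_hz: float) -> float:
    """Peak throughput in TOPS, counting each MAC as two operations (multiply + add)."""
    return 2 * macs_per_cycle * clock_hz / 1e12


def clock_for_tops(target_tops: float, macs_per_cycle: int) -> float:
    """Clock frequency (Hz) needed to reach target_tops with a given MAC count."""
    return target_tops * 1e12 / (2 * macs_per_cycle)


MAX_MACS = 32 * 1024          # top of the 256 to 32K MACs/cycle range quoted for Neo
ASSUMED_CLOCK_HZ = 1.25e9     # hypothetical clock, for illustration only

print(f"{peak_tops(MAX_MACS, ASSUMED_CLOCK_HZ):.2f} TOPS")          # prints: 81.92 TOPS
print(f"{clock_for_tops(80, MAX_MACS) / 1e9:.3f} GHz for 80 TOPS")  # prints: 1.221 GHz for 80 TOPS
```

In other words, a full 32K-MAC configuration would need to run at roughly 1.2 GHz to reach 80 TOPS under the two-ops-per-MAC convention.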

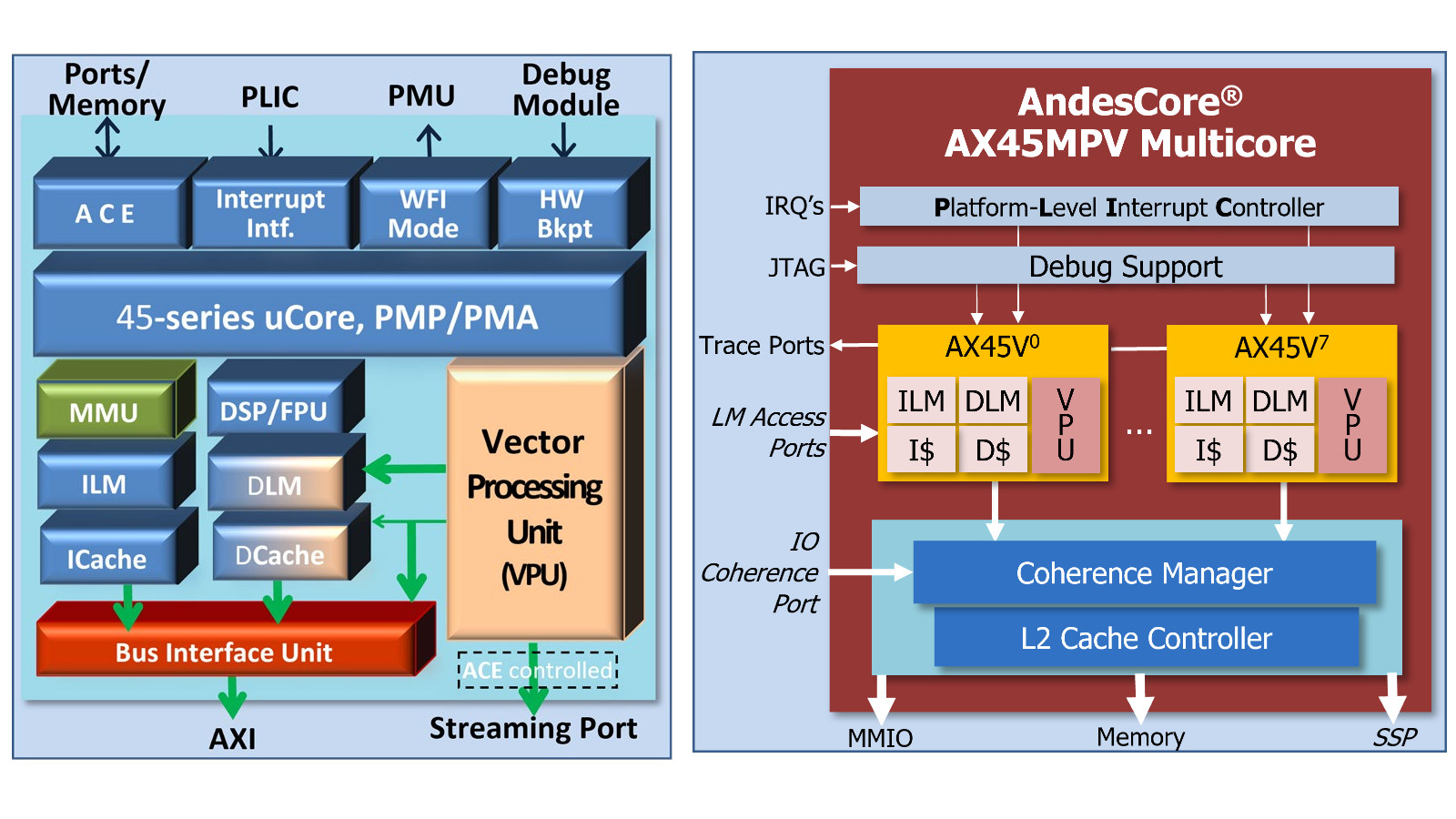

Andes launches AX45MPV RISC-V CPU core with Vector Extension 1.0

Andes Technology has recently announced the general availability of the AndesCore AX45MPV RISC-V CPU, which builds upon the AX45MP multicore processor and adds the RISC-V Vector Extension 1.0. With RISC-V vector processing and parallel execution capabilities, the new core targets SoCs processing large amounts of data for applications such as ADAS, AI inference and training, AR/VR, multimedia, robotics, and signal processing. AX45MPV key features and specifications:

- 64-bit in-order dual-issue 8-stage CPU core with up to 1024-bit Vector Processing Unit (VPU) – compliant with RISC-V V-extension (RVV) 1.0 + custom extensions
- Supports clusters of up to 8 cores
- L2 cache and coherence support
- High bandwidth vector local memory (HVM)
- AndeStar V5 Instruction Set Architecture (ISA)
- Compliant with RISC-V GCBPV extensions
- Andes performance extension
- Andes CoDense extension for further compaction of code size
- Separately licensable Andes Custom Extension (ACE) for customized scalar and vector instructions
- 64-bit architecture for memory space […]
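To illustrate what a wide vector unit buys, RVV 1.0 code is typically written as a strip-mined loop: each iteration asks `vsetvli` for a vector length `vl` no larger than VLMAX = LMUL × VLEN / SEW, processes `vl` elements, and advances. The Python model below simulates that control flow for an element-wise add. It assumes the AX45MPV’s 1024-bit figure is its VLEN and that the hardware grants `vl = min(remaining, VLMAX)`, which is one of the choices RVV 1.0 permits; it is a sketch of the technique, not of Andes’ implementation.

```python
def vlmax(vlen_bits: int, sew_bits: int, lmul: int = 1) -> int:
    """Elements per vector register group: VLMAX = LMUL * VLEN / SEW."""
    return lmul * vlen_bits // sew_bits


def strip_mined_add(a, b, vlen_bits=1024, sew_bits=32, lmul=1):
    """Element-wise a + b processed in vl-sized strips, mimicking a vsetvli loop.

    Returns the result list and the number of strips (loop iterations).
    """
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    maxvl = vlmax(vlen_bits, sew_bits, lmul)
    out = []
    i = 0
    strips = 0
    while i < len(a):
        # Model of vsetvli: grant at most VLMAX of the remaining elements.
        vl = min(len(a) - i, maxvl)
        # Stands in for one vle32.v / vadd.vv / vse32.v sequence over vl elements.
        out.extend(x + y for x, y in zip(a[i:i + vl], b[i:i + vl]))
        i += vl
        strips += 1
    return out, strips


n = 1000
result, strips = strip_mined_add(list(range(n)), [1] * n)
print(vlmax(1024, 32), strips)  # prints: 32 32
```

With `lmul=8`, VLMAX grows to 256 elements of 32 bits, and the same 1,000 elements take only 4 strips, which is the kind of loop-overhead reduction a 1024-bit vector register file is meant to deliver.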

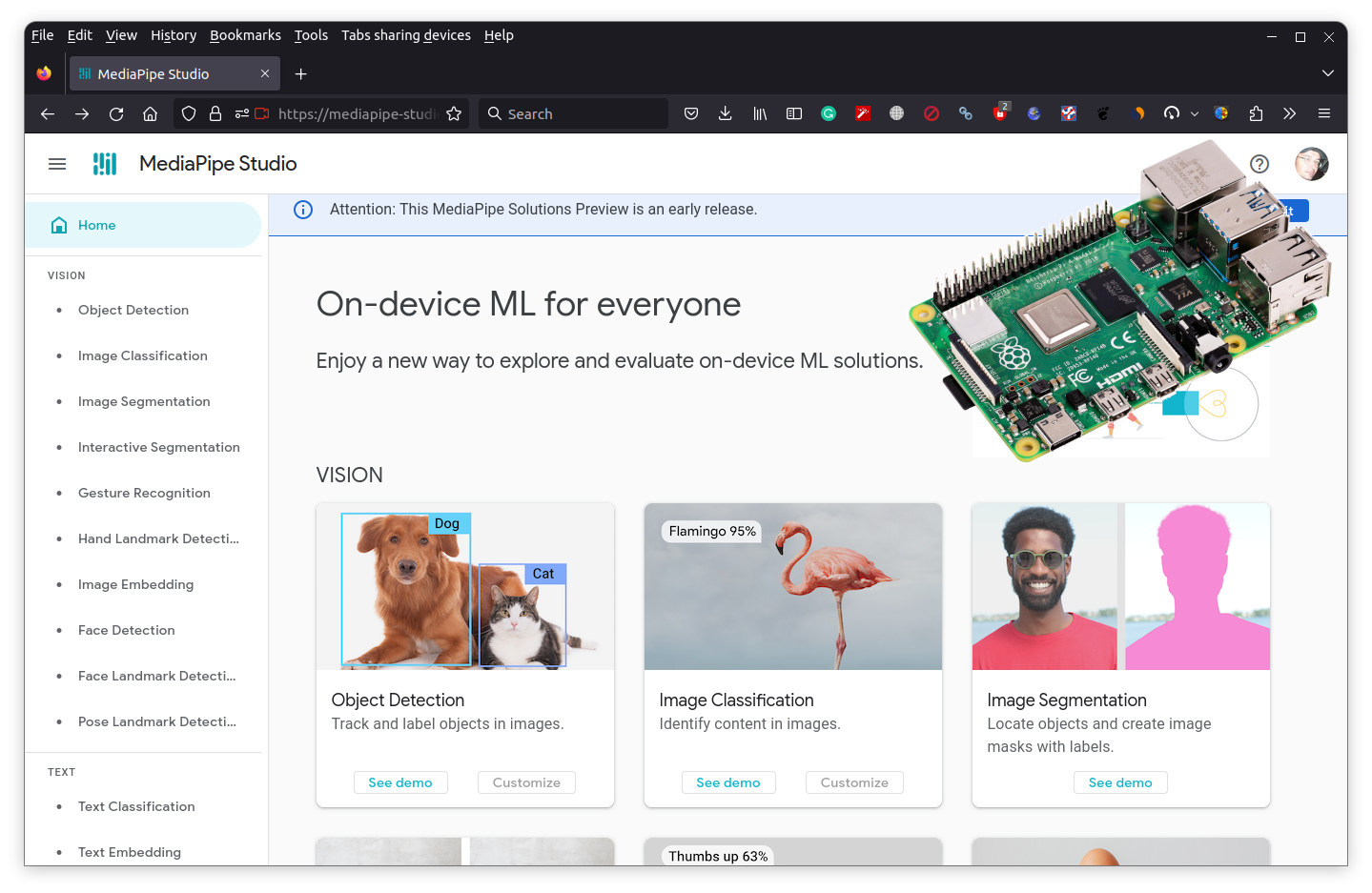

MediaPipe for Raspberry Pi released – No-code/low-code on-device machine learning solutions

Google has just released MediaPipe Solutions for no-code/low-code on-device machine learning on the Raspberry Pi (along with an iOS SDK), following the official release for Android, web, and Python in May, although the project has been years in the making: we first wrote about MediaPipe back in December 2019. The Raspberry Pi port is an update to the Python SDK and supports audio classification, face landmark detection, object detection, and various natural language processing tasks. MediaPipe Solutions consists of three components:

- MediaPipe Tasks (low-code) to create and deploy custom end-to-end ML solution pipelines using cross-platform APIs and libraries
- MediaPipe Model Maker (low-code) to create custom ML models
- MediaPipe Studio (no-code) webpage to create, evaluate, debug, benchmark, prototype, and deploy production-level solutions

You can try it directly in your web browser, at least on a PC, and I was able to quickly test object detection on Ubuntu 22.04. MediaPipe Tasks can be […]
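For a rough idea of what the low-code MediaPipe Tasks route looks like on a Raspberry Pi, the sketch below runs object detection with the Python `mediapipe` package (installed with `pip install mediapipe`). The model path `efficientdet_lite0.tflite`, the image path `test.jpg`, and the 0.5 score threshold are placeholders and assumptions, not values from the announcement; `rank_labels()` is a hypothetical helper, and the `mediapipe` imports sit inside `detect_objects()` so that helper works without the package installed.

```python
def rank_labels(pairs):
    """Sort (label, score) pairs from most to least confident."""
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


def detect_objects(model_path="efficientdet_lite0.tflite", image_path="test.jpg",
                   min_score=0.5):
    """Run a MediaPipe Tasks object detector on one image and rank the labels.

    model_path and image_path are placeholders for files you provide.
    """
    # Imported here so rank_labels() can be used without mediapipe installed.
    import mediapipe as mp
    from mediapipe.tasks import python
    from mediapipe.tasks.python import vision

    options = vision.ObjectDetectorOptions(
        base_options=python.BaseOptions(model_asset_path=model_path),
        score_threshold=min_score,  # drop detections below this confidence
    )
    with vision.ObjectDetector.create_from_options(options) as detector:
        result = detector.detect(mp.Image.create_from_file(image_path))

    # Each detection carries a bounding box and one or more categories;
    # keep the first category's name and score.
    pairs = [(d.categories[0].category_name, d.categories[0].score)
             for d in result.detections]
    return rank_labels(pairs)
```

On the Pi, `print(detect_objects())` would then list the detected labels with their scores, provided the model and image files exist at the given paths.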

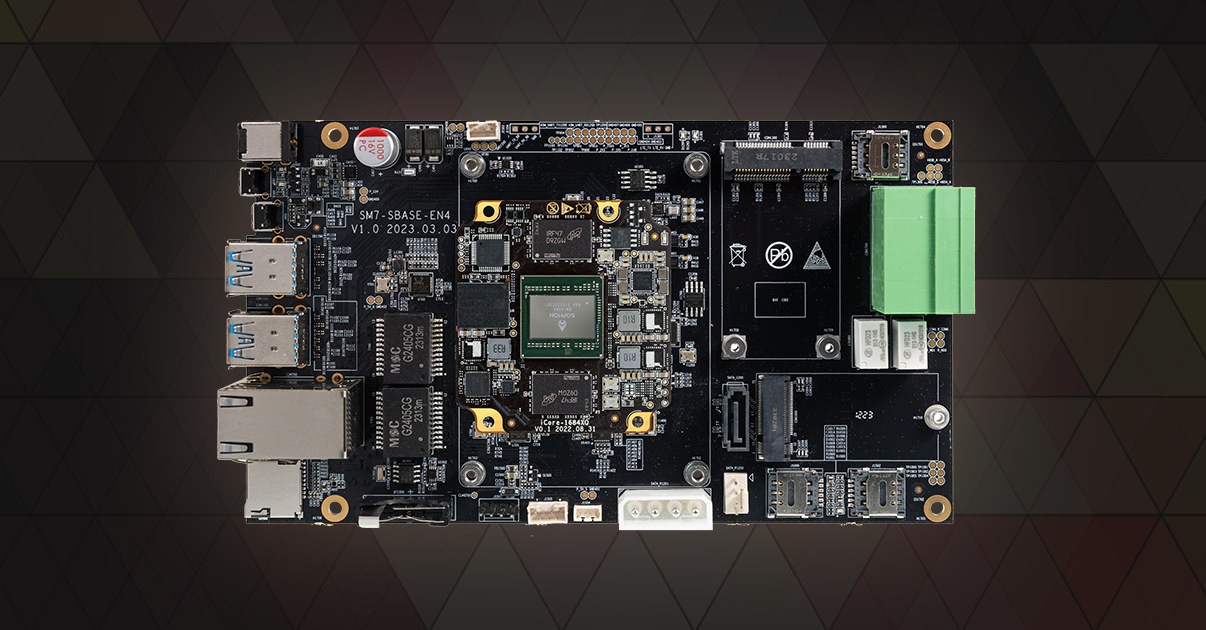

Firefly AIO-1684XQ motherboard features BM1684X AI SoC with up to 32 TOPS for video analytics, computer vision

Firefly AIO-1684XQ is a motherboard based on the SOPHGO SOPHON BM1684X octa-core Cortex-A53 AI SoC, which delivers up to 32 TOPS for AI inference, and it is designed for computer vision applications and video analytics. The headless machine vision board is equipped with 16GB RAM, 64GB eMMC flash, and 128MB SPI flash, and comes with a SATA 3.0 port, dual Gigabit Ethernet, optional 4G LTE or 5G modules, four USB 3.0 ports, and a terminal block with two RS485 interfaces, two relay outputs, and a few GPIOs. Firefly AIO-1684XQ specifications:

- SoC – SOPHGO SOPHON BM1684X
  - CPU – Octa-core Arm Cortex-A53 processor @ up to 2.3 GHz
  - TPU – Up to 32 TOPS (INT8), 16 TFLOPS (FP16/BF16), 2 TFLOPS (FP32)
  - VPU
    - Up to 32-channel H.265/H.264 1080p25 video decoding
    - Up to 32-channel 1080p25 HD video processing (decoding + AI analysis)
    - Up to 12-channel H.265/H.264 1080p25 video encoding
- System Memory – 16GB LPDDR4x
- Storage
  - 64GB eMMC flash
  - 128MB SPI […]
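To put the 32-channel video analytics figure in perspective, a quick back-of-the-envelope calculation shows the aggregate frame and pixel rates at full load, and how much of the INT8 peak each frame could use. It assumes all 32 channels run at 1080p25 and that the full 32 TOPS is split evenly across frames, which real workloads will not achieve, so the per-frame figure is an upper bound.

```python
def per_frame_budget(channels: int, fps: int, peak_ops_per_s: int):
    """Return (aggregate frames/s, peak ops available per frame) at full load."""
    frames_per_s = channels * fps
    return frames_per_s, peak_ops_per_s // frames_per_s


# 32 channels of 1080p25 sharing the BM1684X's 32 TOPS INT8 peak.
frames, ops_per_frame = per_frame_budget(channels=32, fps=25, peak_ops_per_s=32 * 10**12)
pixels_per_s = frames * 1920 * 1080

print(frames, ops_per_frame / 1e9, pixels_per_s)  # prints: 800 40.0 1658880000
```

So at most about 40 billion INT8 operations are available per 1080p frame, roughly the budget for a mid-sized detection network per frame.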

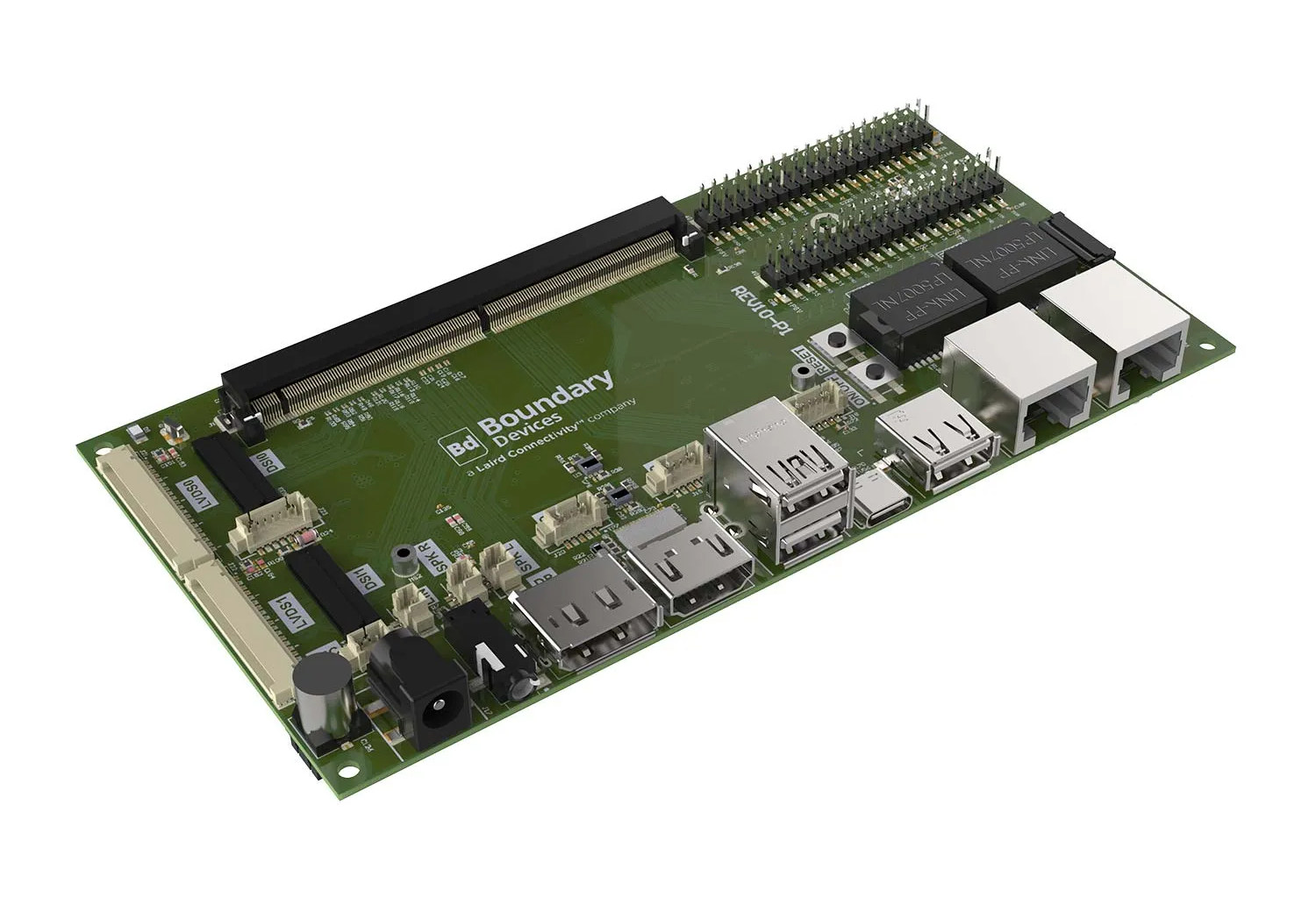

Tungsten700 SMARC SoM and devkit features MediaTek Genio 700 AIoT processor

Laird Connectivity Tungsten700 SOM is a SMARC system-on-module powered by a MediaTek Genio 700 Arm Cortex-A78/A55 AIoT processor with up to 8GB LPDDR4, 16GB eMMC flash, and a Sona MT320 Wi-Fi 6/Bluetooth 5.3 module based on the Filogic 320 chipset. The board was designed by Boundary Devices, which Laird recently acquired, and is offered with a SMARC 2.1 carrier board that can be used for development or integrated into designs as a single board computer. Tungsten700 specifications:

- SoC – MediaTek Genio 700 (MT8390)
  - CPU – Octa-core processor with 2x Arm Cortex-A78 cores @ up to 2.2 GHz, 6x Arm Cortex-A55 cores @ up to 2.0 GHz
  - GPU – Arm Mali-G57 MC3 GPU
  - VPU as in “Video Processing Unit”
    - Encode up to 4Kp30 HEVC/H.264
    - Decode up to 4Kp75 HEVC/H.264/AV1/VP9
  - VPU as in “Vision Processing Unit” – Tensilica VP6 Vision Processing Unit
  - ISP
    - Single Camera: 32MP @ 30FPS
    - Dual […]

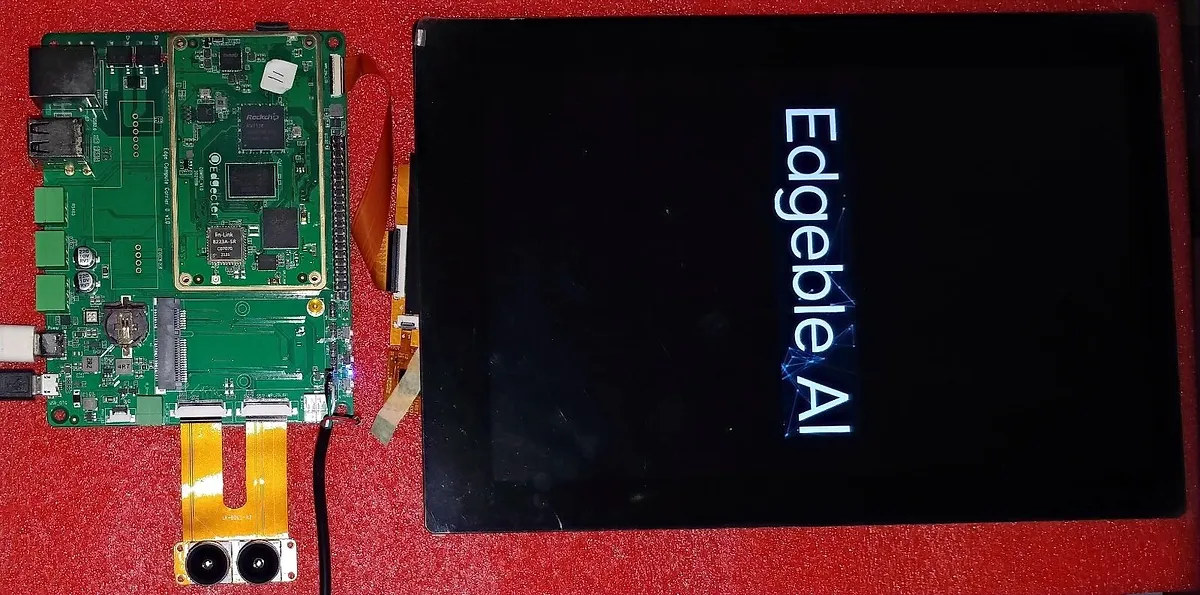

Edgeble AI Neural Compute Module 2 (Neu2) follows 96Boards SoM form factor

Edgeble AI’s Neural Compute Module 2, or Neu2 for short, is a system-on-module for computer vision applications based on the Rockchip RV1126 quad-core Cortex-A7 camera processor and following the 96Boards SoM form factor. I first found the Neu2 and Neu6 (Rockchip RK3588) in the release log for the Linux 6.3 kernel, but at the time there was not enough information about either of them. The specifications for the Neu6 are still wrong (e.g. “64-bit processor with 4x Cortex-A7 core”) at the time of writing, so I’ll look at the Neu2 system-on-module and its industrial version, the Neu2K based on the RV1126K, for which we have more details. Edgeble Neu2 SoM specifications:

- SoC – Rockchip RV1126/RV1126K with
  - CPU – Quad-core Arm Cortex-A7 @ 1.5GHz, RISC-V MCU @ 200MHz (14nm SMIC process)
  - GPU – 2D graphics engine
  - NPU – 2 TOPS with INT8/INT16
  - VPU
    - 4K H.264/H.265 video encoder up to 3840 x […]