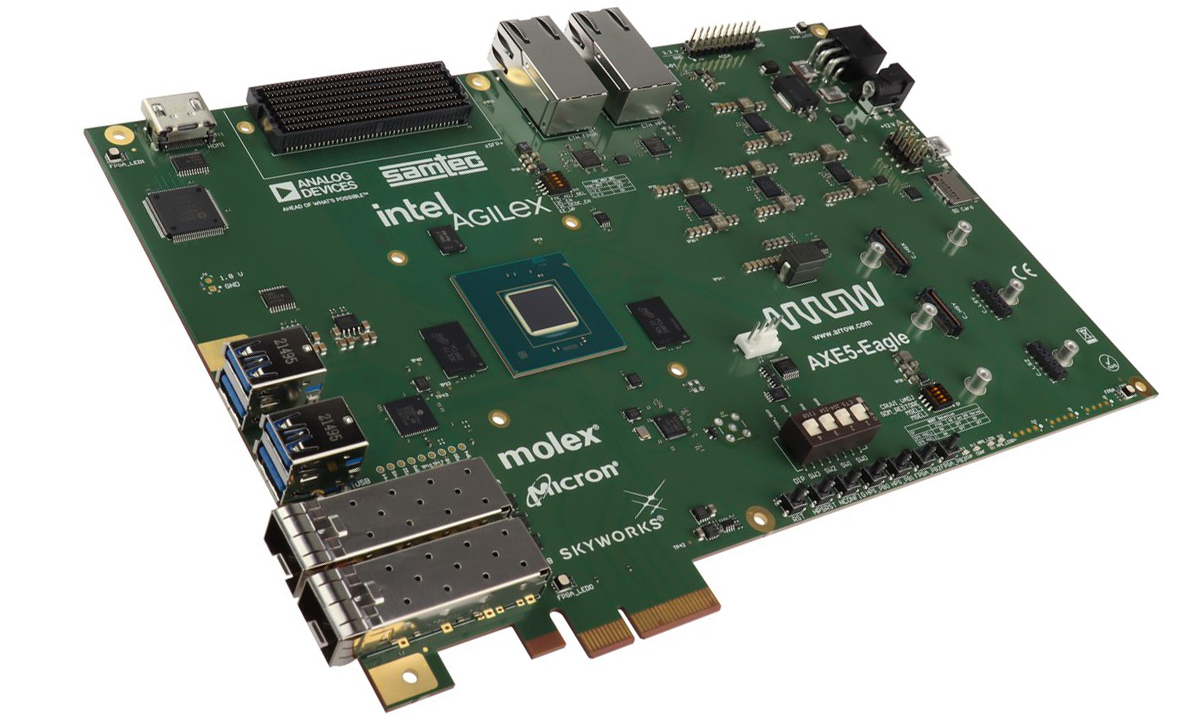

The Trenz Electronic AXE5-EAGLE-ES devkit (development kit) is powered by an Intel Agilex 5 E-series SoC FPGA, codenamed Sundance Mesa. It is designed for FPGA applications in sectors such as wireless communication, video broadcast, and defense. The kit provides 656k logic elements and a quad-core processing system featuring dual-core Arm Cortex-A76 and Cortex-A55 clusters. It supports high-speed transceivers up to 17 Gbps, PCIe 4.0 connectivity, and integrated memory interfaces such as DDR4 and LPDDR4/5. Built on the Intel 7 process technology, the Agilex 5 architecture is optimized for midrange FPGA applications, offering a compact design with efficient performance-per-watt. The FPGA includes enhanced DSP blocks with AI Tensor capabilities for AI and digital signal processing tasks. Additionally, it incorporates Intel’s second-generation Hyperflex Architecture, enabling greater flexibility for handling dynamic workloads. Previously, we covered the iW-RainboW-G58M system-on-module, which features Intel’s Agilex 5 SoC FPGA E-series, a cost-effective, midrange solution for intelligent edge and embedded applications. […]

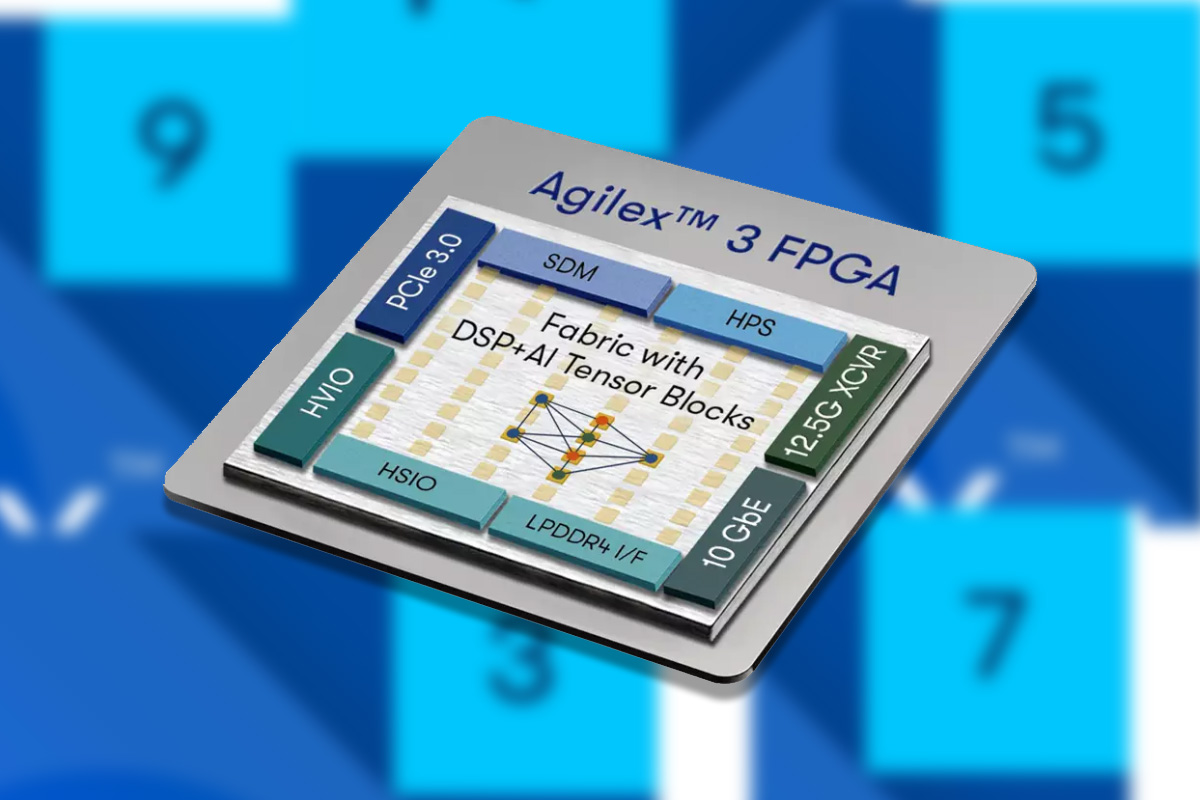

Altera’s 7nm Agilex 3 SoC FPGA features Cortex-A55 cores, AI Tensor Block, DSP, 10 GbE, and more.

Altera, an independent subsidiary of Intel, has launched the Altera Agilex 3 SoC FPGA lineup built on Intel’s 7nm technology. According to Altera, these FPGAs prioritize cost and power efficiency while maintaining essential performance. Key features include an integrated dual-core Arm Cortex-A55 processor, AI capabilities within the FPGA fabric (tensor blocks and AI-optimized DSP sections), enhanced security, 25K–135K logic elements, 12.5 Gbps transceivers, LPDDR4 support, and 38% lower power consumption versus competing FPGAs. Built on the Hyperflex architecture, it offers nearly double the performance of previous-generation Cyclone V FPGAs. These features make the device useful for manufacturing, surveillance, medical, test and measurement, and edge computing applications.

Altera’s Agilex 3 AI SoC FPGA specifications:

- Device variants
  - B-Series – No definite information is available
  - C-Series – A3C025, A3C050, A3C065, A3C100, A3C135 SoC FPGAs
- Hard Processing System (HPS) – Dual-core 64-bit Arm Cortex-A55 up to 800 MHz that supports secure […]

Intel Agilex 5 SoC FPGA embedded SoM targets 5G equipment, 100GbE networking, Edge AI/ML applications

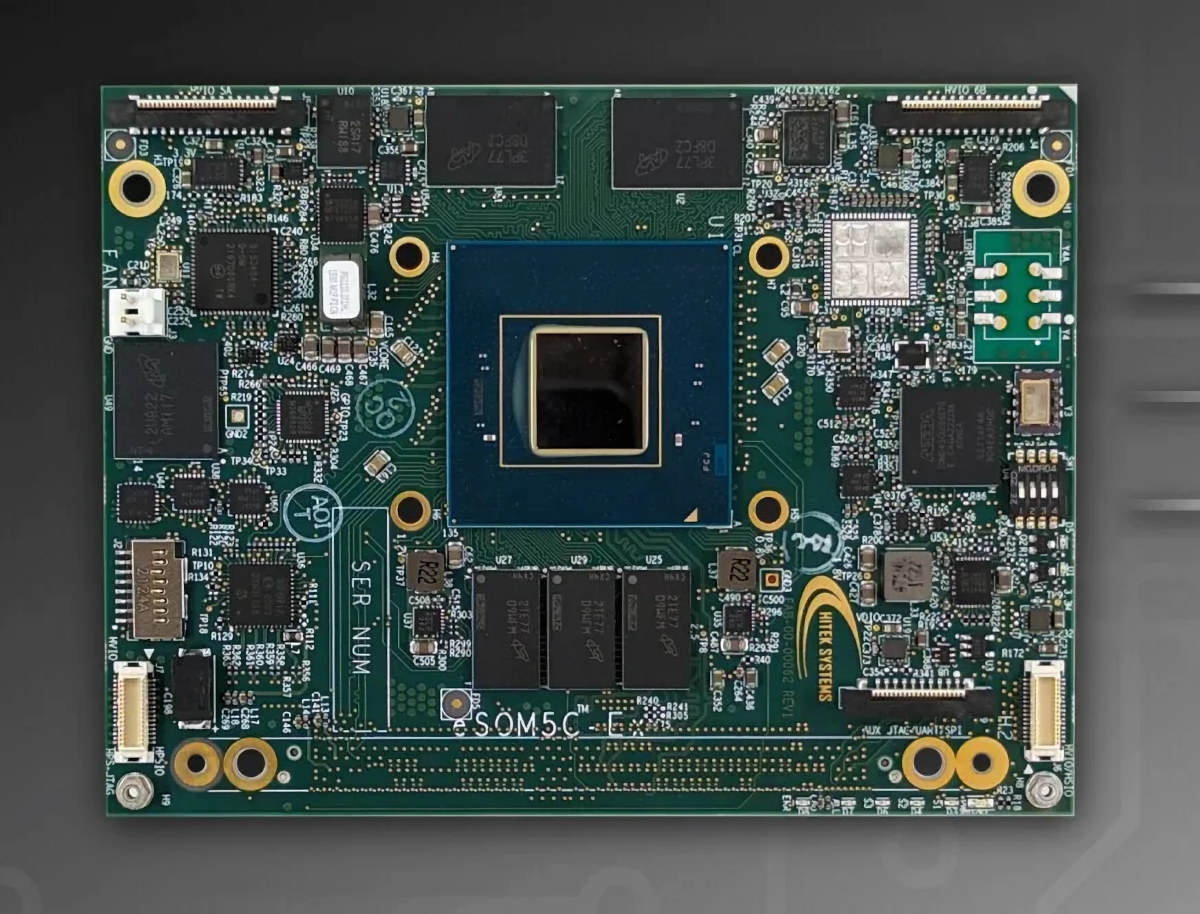

Hitek Systems eSOM5C-Ex is a compact embedded System-on-Module (SOM) based on the mid-range Intel Agilex 5 SoC FPGA E-Series and is pin-to-pin compatible with the company’s earlier eSOM7C-xF based on the Agilex 7 FPGA F-Series. The module exposes all I/Os, including up to 24 transceivers, through the same 400-pin high-density connector found in the Agilex 7 FPGA-powered eSOM7-xF and the upcoming Agilex 5 FPGA D-Series SOM, giving the whole product range flexibility from 100K to 2.7 million logic elements (LEs).

Hitek eSOM5C-Ex specifications:

- SoC FPGA – Intel Agilex 5 E-series Group A and Group B FPGAs in B32 package
  - Supported variants: A5E065A/B, A5E043A/B and A5E043A/B
  - Hard Processing System (HPS) – Dual-core Cortex-A76 and dual-core Cortex-A55
  - FPGA
    - Up to 656,080 logic elements
    - 24 x transceivers up to 28Gbps
- System Memory
  - Up to 2x 8GB LPDDR4 for FPGA
  - 2 or 4GB DDR4 for HPS
- Storage – 32GB eMMC flash, […]

Luxonis OAK Thermal – A PoE thermal camera with Myriad X AI accelerator, waterproof M12 and M8 connectors

Luxonis has announced its first thermal camera, the OAK Thermal (OAK-T), based on the company’s OAK-SoM Pro AI module featuring an Intel Movidius Myriad X and equipped with two waterproof ports: an M12 PoE/Ethernet connector and an M8 auxiliary connector. Luxonis has been making AI cameras based on the Myriad X AI accelerator and its DepthAI solution since at least 2019, and its module is also found in third-party cameras, as we recently found out with the Arducam PiNSIGHT AI camera. But the company had never made a thermal model, and following customers’ requests to fuse thermal and RGB data, it has now developed the OAK Thermal, or OAK-T for short, which is suitable for detecting leaks and fires, or for detecting humans and animals more accurately than traditional vision-only cameras. OAK Thermal camera specifications: System-on-Module – Luxonis OAK-SoM Pro with AI accelerator – Intel Movidius Myriad X AI vision processing unit […]

Arducam PiNSIGHT – A 4 TOPS AI camera board for the Raspberry Pi 5

Arducam PiNSIGHT is an AI camera board designed for the Raspberry Pi 5, equipped with a 12.3MP auto-focus module and an Intel Movidius Myriad X-powered SoM delivering up to 4 TOPS and supporting Intel OpenVINO deep learning models. The PiNSIGHT “AI Mate” is mounted underneath the Raspberry Pi 5, to which it is connected through a 15-cm USB-A to USB-C cable. The case is made of metal and acts as a heatsink. Arducam told CNX Software that it dissipates enough heat to cool down the Raspberry Pi 5, and they don’t think the active cooler is needed anymore. In other words, it also acts as a fanless enclosure, although it’s not in contact with the CPU, so whether it’s enough probably depends on your use case… Arducam PiNSIGHT “AI Mate” specifications: AI accelerator – Luxonis OAK-SoM based on Intel Movidius Myriad X vision processing unit delivering up to 4 TOPS […]

iW-RainboW-G58M is a compact module based on the Intel Agilex 5 SoC FPGA series

iWave Systems, an embedded systems solutions company based in India, has announced the launch of the iW-RainboW-G58M system-on-module (SoM). The module is based on Intel’s Agilex 5 SoC FPGA E-series family, a lineup of affordable, midrange FPGAs for intelligent edge and embedded applications. The Agilex 5 E-series is optimized to deliver better performance-per-watt than its predecessors in a smaller form factor. The devices feature an asymmetric applications processor system comprising two Arm Cortex-A76 cores and two Cortex-A55 cores for optimized performance and power efficiency. The Arm cores in the Agilex 5 SoC FPGA family are more powerful than the Cortex-A53 cores in the Intel Agilex 7 and 9 products, but those have faster high-speed interfaces and more logic elements. The Agilex 5 SoM is suitable for development in fields such as wireless communications, video/broadcast, and industrial test and measurement. The last iWave module we covered, the iW-RainboW-G55M, was based on a […]

Linux 6.6 LTS release – Highlights, Arm, RISC-V and MIPS architectures

The Linux 6.6 release has just been announced by Linus Torvalds on the Linux Kernel Mailing List (LKML):

> So this last week has been pretty calm, and I have absolutely no excuses to delay the v6.6 release any more, so here it is. There’s a random smattering of fixes all over, and apart from some bigger fixes to the r8152 driver, it’s all fairly small. Below is the shortlog for last week for anybody who really wants to get a flavor of the details. It’s short enough to scroll through. This obviously means that the merge window for 6.7 opens tomorrow, and I appreciate how many early pull requests I have lined up, with 40+ ready to go. That will make it a bit easier for me to deal with it, since I’ll be on the road for the first week of the merge window.
>
> Linus

About two months ago, […]

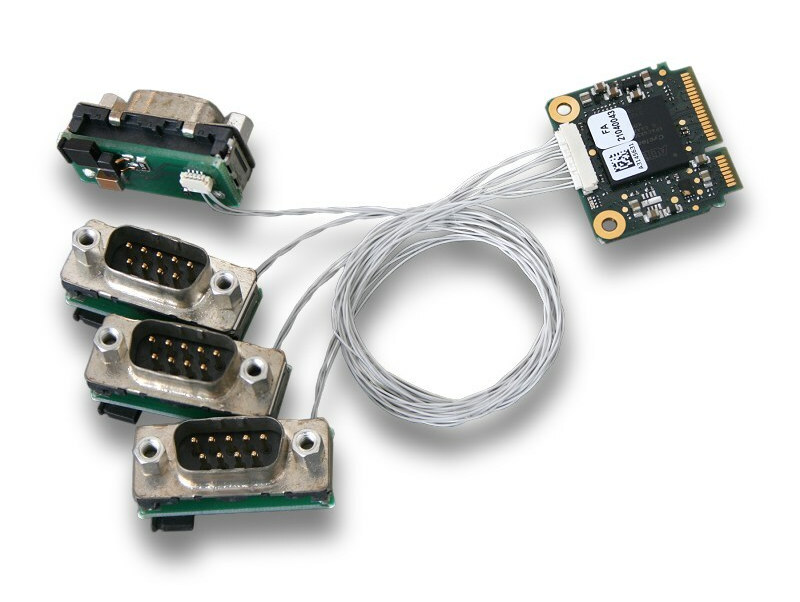

Half-size mini PCIe card adds up to 4 CAN FD interfaces to embedded systems

esd electronics CAN-PCIeMiniHS/402 is a half-size mini PCIe card with four CAN FD interfaces designed for embedded systems, with one model adding extended temperature range support from -40°C to 85°C. The company also introduced the CAN-Mini/402-4-DSUB9-150mm adapter to more easily connect the four CAN network interfaces via DSUB9 connectors. It comes with four individual small adapter boards, each equipped with a DSUB9 plug and a jumper for selectable onboard CAN termination, as well as 150 mm long wires.

CAN-PCIeMiniHS/402 highlights:

- 4x CAN FD interfaces according to ISO 11898-2, no galvanic isolation, bit rates from 10 Kbit/s up to 8 Mbit/s
- Bus mastering and local data management by FPGA (Intel Cyclone IV EP4CGX)
- PCIe Mini interface according to Mini Card Electromechanical Spec. R1.2
- Supports MSI (Message Signaled Interrupts)
- HW-Timestamp capable
- Dimensions – 30 mm x 27 mm (Half-size mini PCIe form factor)
- Temperature Range
  - Standard – 0°C … +75°C
  - Extended range: […]