The Raspberry Pi Foundation introduced the first official Raspberry Pi camera in May 2013. The $25 camera module came with a 5MP sensor and connected via the board’s MIPI CSI connector. Then in 2016, the company launched version 2 of the camera with an 8MP sensor.

The foundation has now launched a much better camera called the Raspberry Pi HQ Camera (High-Quality Camera) with a 12MP sensor, improved sensitivity, and support for interchangeable lenses in both C- and CS-mount form factors.

The module itself is equipped with a Sony IMX477 sensor, a milled aluminum lens mount with integrated tripod mount and focus adjustment ring, a C- to CS-mount adapter, and an FPC cable for connection to a Raspberry Pi SBC.

RPi HQ camera specification:

- Sensor – 12.3MP Sony IMX477R stacked, back-illuminated sensor; 7.9 mm sensor diagonal, 1.55 μm × 1.55 μm pixel size

- Output – RAW12/10/8, COMP8

- Back focus – Adjustable (12.5 mm–22.4 mm)

- Lens standards – C-mount and CS-mount (C-CS adapter included)

- Integrated IR cut filter (can be removed to enable IR sensitivity, but the modification is irreversible)

- 20 cm Ribbon cable

- Tripod mount – 1/4”-20

- Compliance – FCC, EMC Directive 2014/30/EU, RoHS Directive 2011/65/EU

- Long term availability – In production until at least January 2026
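The sensor figures in the list can be cross-checked with a little arithmetic. The sketch below assumes a full-resolution pixel array of 4056 × 3040, which is not stated in the article, and derives the megapixel count and sensor diagonal from the 1.55 μm pixel pitch.

```python
import math

# Assumption: full-resolution pixel array of the IMX477 (not given in the article).
WIDTH_PX = 4056
HEIGHT_PX = 3040
PIXEL_PITCH_UM = 1.55  # pixel size from the specification list


def megapixels(width_px, height_px):
    """Total pixel count in millions."""
    return width_px * height_px / 1e6


def sensor_diagonal_mm(width_px, height_px, pitch_um):
    """Diagonal of the active area, assuming square pixels of the given pitch."""
    width_mm = width_px * pitch_um / 1000
    height_mm = height_px * pitch_um / 1000
    return math.hypot(width_mm, height_mm)


if __name__ == "__main__":
    print(f"{megapixels(WIDTH_PX, HEIGHT_PX):.1f} MP")
    print(f"{sensor_diagonal_mm(WIDTH_PX, HEIGHT_PX, PIXEL_PITCH_UM):.1f} mm diagonal")
```

Under that assumption the numbers come out at roughly 12.3 MP and a 7.9 mm diagonal, in line with the specification.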

Raspberry Pi HQ camera targets industrial and consumer applications, such as security cameras, requiring high visual fidelity and/or integration with specialist optics, and works with all models of Raspberry Pi using the latest OS.

By default, the module does not ship with any lens, so it’s not quite usable as is. The Raspberry Pi Foundation therefore also offers 6mm and 16mm lenses, which appear to be OEM products.

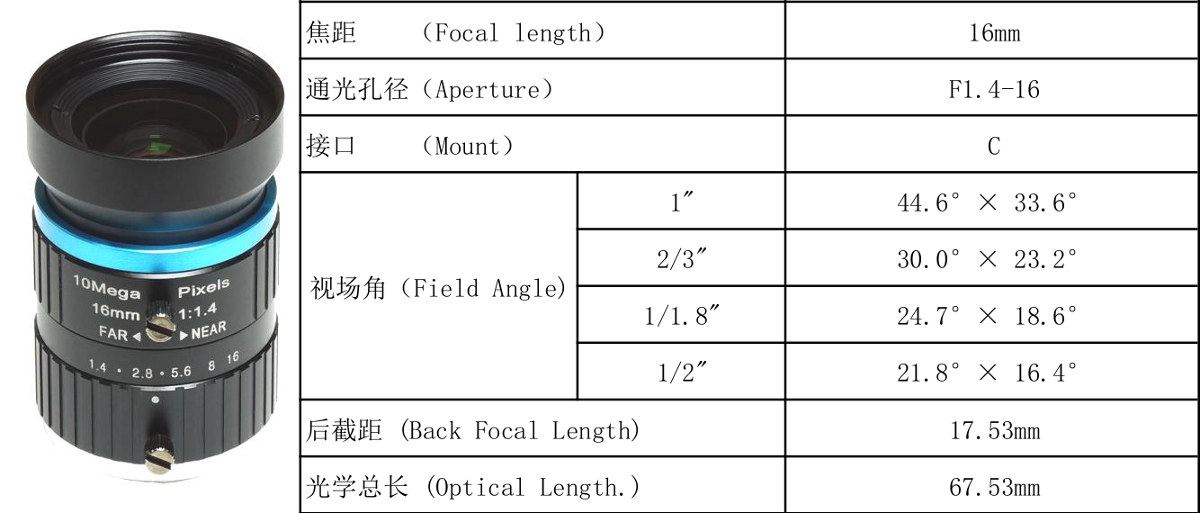

The CS-mounted 6mm lens (PT361060M) offers 3MP resolution, F1.2 aperture, 63° viewing angle, while the C-mounted 16mm lens (PT3611614M10MP) delivers 10MP resolution, F1.4-16 aperture, and a variety of viewing angles depending on the selected size.
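To see why the viewing angle depends on both focal length and sensor size, here is a rough sketch using the idealized thin-lens formula, angle = 2·atan(d / 2f). It uses the 7.9 mm sensor diagonal from the specification above. The function name is made up for illustration, and the results are only approximations: a datasheet value such as the 63° quoted for the 6mm lens depends on the sensor format the lens is rated for and on lens distortion, so it need not match.

```python
import math


def field_of_view_deg(sensor_dim_mm, focal_length_mm):
    """Idealized (thin-lens, distortion-free) angle of view across one sensor dimension."""
    return math.degrees(2 * math.atan(sensor_dim_mm / (2 * focal_length_mm)))


SENSOR_DIAGONAL_MM = 7.9  # HQ Camera sensor diagonal from the specification list

if __name__ == "__main__":
    for focal_length_mm in (6, 16):
        fov = field_of_view_deg(SENSOR_DIAGONAL_MM, focal_length_mm)
        print(f"{focal_length_mm} mm lens: about {fov:.0f} degrees diagonal")
```

The same lens gives a narrower angle on a smaller sensor, and a longer focal length narrows it further, which is why the 16mm lens is the more "zoomed-in" of the two.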

The Raspberry Pi HQ camera module is sold for $50, but remember you’ll need to add your own lens, or purchase the official Raspberry Pi 6mm or 16mm lens for $25 or $50 respectively. You’ll find the module and lenses at the usual distributors, including Element14, Cytron, and Digikey, among others.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.

I never quite got the point of the dedicated camera connector.. is the idea that through the CSI connector you could push more uncompressed frames faster than through a USB port? And without compression artifacts you can do some less noisy CV?

seemingly none of the other SBCs have dedicated camera connectors.. (Nano 2 Duo has some connector but it only accepts their camera I think)

12MP is a lot of MB per frame though.

That said I think it’s cool you can slap on some adapters and attach it to a telescope or some old lenses. I can see people playing around with this quite a bit
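For a rough sense of the numbers behind that question, the sketch below estimates the size of one uncompressed frame at the output bit depths listed in the specification (RAW12/10/8), and how many such frames per second a link of a given raw bit rate could carry. The 4056 × 3040 frame size is an assumption not stated in the article, the data is treated as tightly packed with no padding or protocol overhead, and the 480 Mbit/s figure is the nominal USB 2.0 signalling rate, so real throughput would be lower.

```python
# Assumption: full-resolution frame size (not given in the article).
WIDTH_PX, HEIGHT_PX = 4056, 3040

USB2_BITS_PER_S = 480_000_000  # nominal USB 2.0 high-speed signalling rate


def frame_bytes(width_px, height_px, bits_per_pixel):
    """Size of one tightly packed raw frame, ignoring row padding and headers."""
    return width_px * height_px * bits_per_pixel // 8


def max_fps(link_bits_per_s, width_px, height_px, bits_per_pixel):
    """Upper bound on uncompressed frames per second over an ideal link."""
    return link_bits_per_s / (width_px * height_px * bits_per_pixel)


if __name__ == "__main__":
    for bpp in (12, 10, 8):
        size_mb = frame_bytes(WIDTH_PX, HEIGHT_PX, bpp) / 1e6
        fps = max_fps(USB2_BITS_PER_S, WIDTH_PX, HEIGHT_PX, bpp)
        print(f"RAW{bpp}: {size_mb:.1f} MB per frame, at most {fps:.1f} fps over USB 2.0")
```

Under these assumptions a RAW12 frame is about 18.5 MB, and even an ideal USB 2.0 link could move only around three of them per second, which is why USB cameras normally compress or downscale before sending.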

> seemingly none of the other SBCs have dedicated camera connectors

Huh? Just look at these 20+ SBCs from FriendlyELEC alone: https://www.armbian.com/download/?tx_maker=friendlyelec

Most of them have 1 or even 2 CSI connectors, same is true for Orange Pi. And why not? The SoCs can deal with it, the connector is ultra cheap. Only driver support (in Linux) is often crap. Same applies to the RPi too since here the whole video encoding job is done in ThreadX on the VideoCore and not within Linux/ARM.

Oh thanks for the correction. I should have been more careful before speaking. They also seem to have their own “DVP Camera” for some boards which is rather confusing

And that’s interesting you can just pipeline it into the encoder and save to file. I guess that’s quite useful for some applications

> you can just pipeline it into the encoder and save to file

Or encode to a stream and pipe it through the network or provide it on a USB port. As megi explained, USB cameras do essentially the same using a camera SoC. But most general purpose and especially mobile SoCs have similar capabilities, and with appropriate driver support there you have better control over sensor and settings.

Fascinating. So combined with a small board you could make an enhanced “smart” webcam. The encoding and USB pipelining would basically leave your CPU mostly idle. You could then catch maybe a few frames a second, do some ML or processing to adjust parameters, and then overlay information on the stream – is that the idea?

And thanks for pointing me to FriendlyARM

they have this cheaper alternative:

https://www.friendlyarm.com/index.php?route=product/product&product_id=247

It’s a bit thin on details and it’s unclear what can be controlled programmatically. I remember getting a USB cam once and discovering (as other comments point out) that a lot of stuff wasn’t possible. The main issue was that the focus wasn’t controllable, so it would just adjust the focus randomly and make a mess haha

> is that the idea?

I’m pretty much clueless when it’s about this stuff. Better search this blog’s comments section for ‘Jon Smirl’ and ‘mangogeek’, they’re both into it.

My impression is that all mobile ARM SoCs have some IP block capable of video encoding since that’s what they’re made for (with Android). Even the old and boring VideoCore IV we’re talking here about from ~10 years ago has an h.264 encoder.

5 years ago we did some stuff with Raspberries for video surveillance just because Linux driver support everywhere else wasn’t in shape or more probably I failed to recognize. And even with the single core RPi models you get a somewhat ok-ish h.264 Videostream suitable for some purposes (even when the ARM CPU is downclocked to 200 MHz since everything is running inside the proprietary VideoCore domain).

But I simply piped the ‘raw’ h.264 stream through the network to some central monitoring host with an Allwinner A20 capable of ‘repurposing’ up to 10 such h.264 streams to be viewed simultaneously with VLC while recording them to disk at the same time. So no idea what to do with the video frames on the SBC itself 🙂

Thanks for your insights 🙂

I really appreciate your input on this website btw

The only problem is, you don’t know if these CSI/DSI/MIPI buses are compatible with your video at all. That’s why 99% buy RPi + official PiCam or PiDAC.

The nVidia Jetson Nano also has CSI inputs I believe (a pair of them on the latest revision?). Given that Jetsons are aimed at CUDA-enhanced AI stuff, and computer vision analysis is a big area for this, that also makes sense.

More often than not with USB cams you just get the same sensor connected over CSI/I2C to some black box USB controller that exposes a limited set of controls to the USB host. You may not get to set various params that could limit the power consumption of the sensor (like PLL settings, etc.), and it may overheat, causing shitty image quality no matter what lens you put on the sensor. You may get a limited set of framerates, no option to set exposure, etc. So in the end you pay more (for the USB controller) to get fewer options and optimization opportunities.

There are some USB cams with an “open” controller that allows you to flash it with better firmware, but those are quite expensive.

Good quality is a priority now that people connect RPi4 to 4k screens, maybe 8k soon. 4k is already 8MP. Since it might be 3:2, you’ll need all the pixels

Except when powering two separate screens the Rpi tart 4 can only play 1080 video, spam bot Jerry.

> 4k is already 8MP. Since it might be 3:2, you’ll need all the pixels

Valid point 🙂
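The “4k is already 8MP” remark is easy to check. The sketch below assumes 4K means 3840 × 2160 (UHD) and that the sensor delivers 4056 × 3040 pixels, neither of which is stated in the thread, and computes the largest 16:9 and 3:2 crops that fit the sensor. The helper name is made up for illustration.

```python
# Assumptions: UHD "4K" resolution and full-resolution sensor size.
UHD_W, UHD_H = 3840, 2160
SENSOR_W, SENSOR_H = 4056, 3040


def largest_crop(width_px, height_px, aspect_w, aspect_h):
    """Largest crop of the given aspect ratio that fits inside the frame."""
    crop_h = width_px * aspect_h // aspect_w
    if crop_h <= height_px:
        return width_px, crop_h          # limited by frame width
    crop_w = height_px * aspect_w // aspect_h
    return crop_w, height_px             # limited by frame height


if __name__ == "__main__":
    print(f"UHD: {UHD_W * UHD_H / 1e6:.1f} MP")
    for aspect in ((16, 9), (3, 2)):
        w, h = largest_crop(SENSOR_W, SENSOR_H, *aspect)
        print(f"{aspect[0]}:{aspect[1]} crop: {w} x {h} = {w * h / 1e6:.1f} MP")
```

With those assumptions a UHD frame is about 8.3 MP, and a 16:9 crop of the sensor still has slightly more pixels than that in both dimensions.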