Firefly ROC-RK3576-PC is a low-power, low-profile SBC built around the Rockchip RK3576 octa-core Cortex-A72/A53 SoC, which we also find in the Forlinx FET3576-C, the Banana Pi BPI-M5 Pro, and the Mekotronics R57 Mini PC. In terms of power and performance, this SoC falls in between the Rockchip RK3588 and RK3399 SoCs and can be used for AIoT applications thanks to its 6 TOPS NPU. Termed a “mini computer” by Firefly, this SBC supports up to 8GB LPDDR4/LPDDR4X memory and 256GB of eMMC storage. Additionally, it offers Gigabit Ethernet, WiFi 5, and Bluetooth 5.0 for connectivity. An M.2 2242 PCIe/SATA socket and a microSD card slot can be used for storage, and the board also offers HDMI and MIPI DSI display interfaces, two MIPI CSI camera interfaces, a few USB ports, and a 40-pin GPIO header. Firefly ROC-RK3576-PC specifications: SoC – Rockchip RK3576 CPU – 4x Cortex-A72 cores at 2.2GHz, 4x Cortex-A53 cores at 1.8GHz, Arm Cortex-M0 MCU at 400MHz GPU […]

Testing AI and LLM on Rockchip RK3588 using Mixtile Blade 3 SBC with 32GB RAM

We were interested in testing artificial intelligence (AI), and specifically large language models (LLMs), on Rockchip RK3588 to see how the GPU and NPU could be leveraged to accelerate them and what kind of performance to expect. We had read that LLMs may be compute- and memory-intensive, so we looked for a Rockchip RK3588 SBC with 32GB of RAM, and Mixtile – a company that develops hardware solutions for various applications including IoT, AI, and industrial gateways – kindly offered us a sample of their Mixtile Blade 3 pico-ITX SBC with 32GB of RAM for this purpose. While the review focuses on using the RKNPU2 SDK with computer vision samples running on the 6 TOPS NPU, and a GPU-accelerated LLM test (since the NPU implementation is not ready yet), we also went through an unboxing to check out the hardware and a quick guide showing how to get started […]

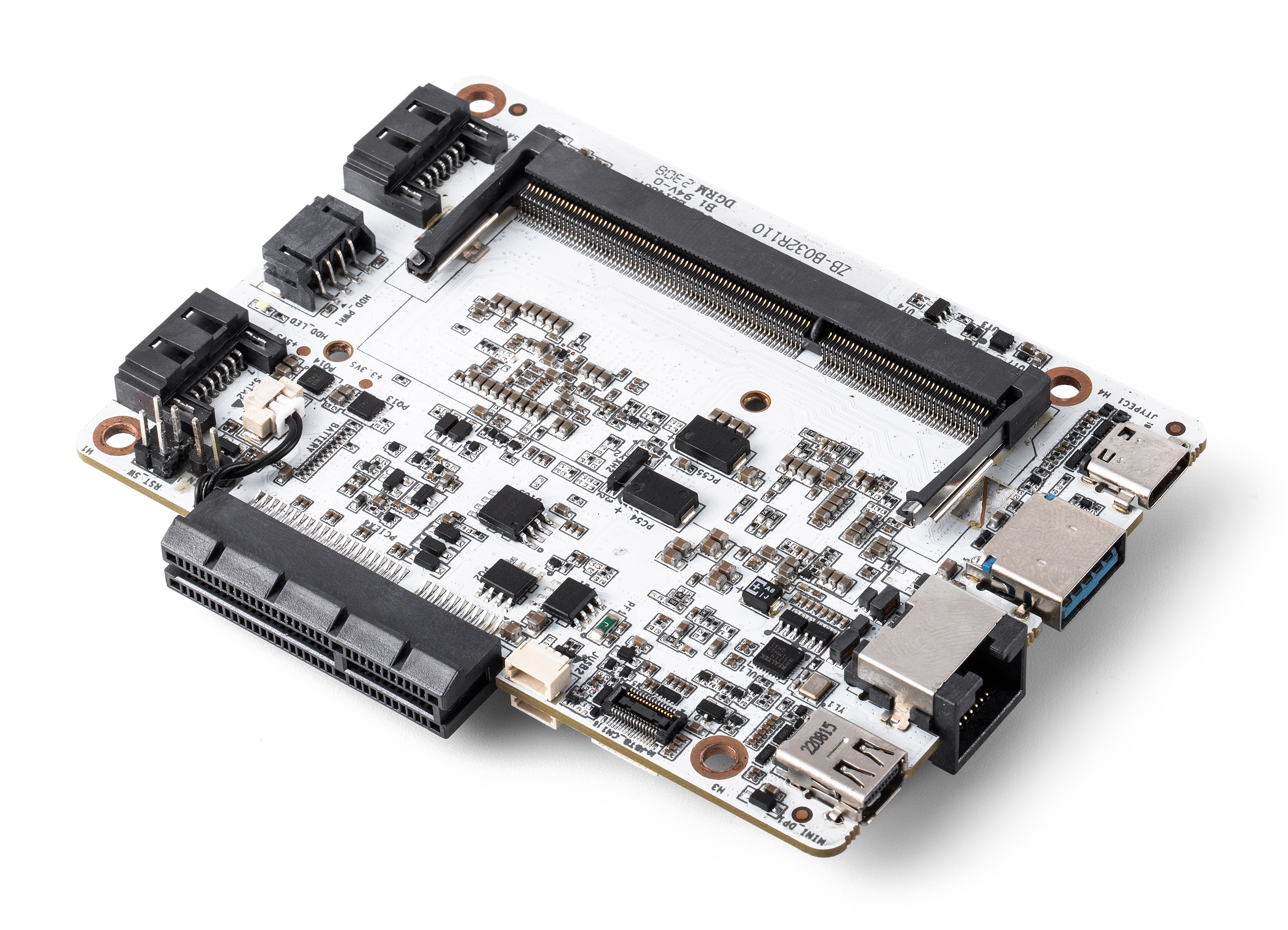

ZimaBlade – A $64+ low-profile Intel Celeron board for server applications and more (Crowdfunding)

ZimaBlade is an inexpensive low-profile board based on an Intel Celeron dual-core or quad-core processor and designed for server applications with a low-profile RJ45 Gigabit Ethernet port, two SATA connectors, and a PCIe slot. It is not limited to servers, however, as the board also comes with display interfaces such as mini DP and USB-C DisplayPort Alt. mode, plus a few USB ports. It’s not IceWhale Technology’s first venture into portable server boards, as the company previously introduced the ZimaBoard, based on Intel Celeron Apollo Lake processors and offering many of the same features, back in 2021. The new ZimaBlade offers more interfaces as well as a complete enclosure instead of just a large heatsink. ZimaBlade specifications: SoC (one or the other) ZimaBlade 3760 – Intel Celeron dual-core processor up to 2.2 GHz (Turbo) with Intel UHD graphics; 6W TDP ZimaBlade 7700 – Intel Celeron quad-core processor up to 2.4 GHz (Turbo) with Intel UHD […]

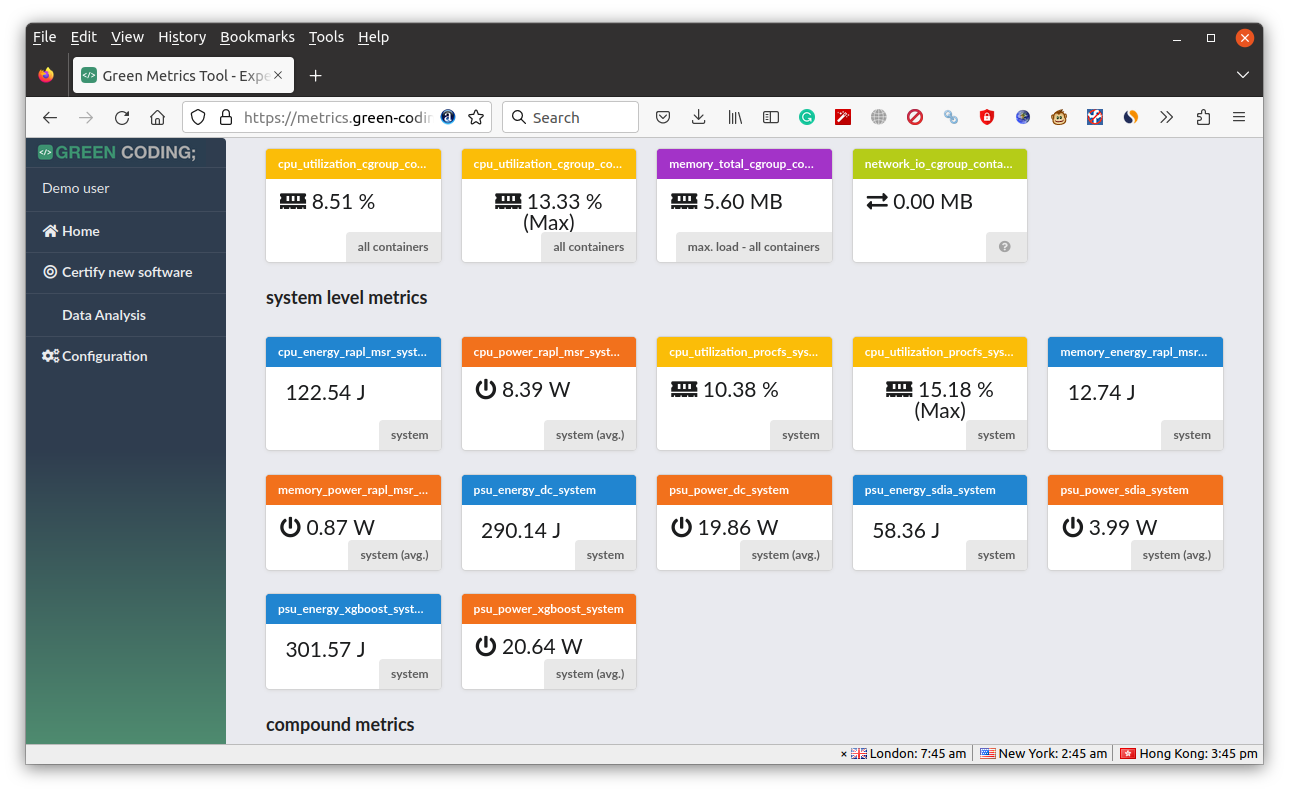

Green Metrics Tool helps developers measure & optimize software power consumption

The Green Metrics Tool (GMT) is an open-source framework that allows the measurement, comparison, and optimization of the energy consumption of software, with the goal of empowering both software engineers and users to make educated decisions about libraries, code snippets, and software in order to save energy and cut carbon emissions. While the firmware of battery-powered embedded devices and the OS running on your smartphone are typically optimized for low power consumption in order to extend battery life, the same cannot be said of most software running on SBCs, desktop computers, and servers. But there are still benefits to having power-optimized programs on this type of hardware, including lower electricity bills, a lower carbon footprint, and potentially quieter devices since the cooling fan may not have to be turned on as often. The Green Metrics Tool aims to help in that regard. The developers explain how that works: […]
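To make the idea of comparing software energy consumption a little more concrete, here is a minimal Python sketch. It is not the Green Metrics Tool's method or API. It only assumes that power readings in watts with timestamps in seconds are available from some source such as a power meter, and it integrates them into energy with the trapezoidal rule. The function names and the sample values are hypothetical and purely illustrative.

```python
from typing import Sequence


def energy_joules(timestamps_s: Sequence[float], power_w: Sequence[float]) -> float:
    """Integrate power samples (W) over time (s) with the trapezoidal rule.

    Hypothetical helper for illustration only, not part of any real tool.
    """
    if len(timestamps_s) != len(power_w):
        raise ValueError("timestamps and power samples must have the same length")
    total = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        if dt < 0:
            raise ValueError("timestamps must be non-decreasing")
        # Area of the trapezoid between two consecutive samples
        total += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return total


def joules_to_kwh(joules: float) -> float:
    """Convert joules to kilowatt-hours (1 kWh = 3.6e6 J)."""
    return joules / 3.6e6


if __name__ == "__main__":
    # Made-up samples for two runs of the same workload (assumed data)
    t = [0.0, 1.0, 2.0, 3.0]
    baseline = energy_joules(t, [5.0, 6.0, 6.0, 5.0])
    optimized = energy_joules(t, [5.0, 5.5, 5.5, 5.0])
    print(f"baseline: {baseline} J, optimized: {optimized} J")
    print(f"saved: {baseline - optimized} J")
```

Comparing the integrated energy of two runs, rather than their peak power, is what shows whether a change actually saves energy, since a faster but hungrier program can still come out ahead.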

Odyssey Blue mini PC bundle ships with Frigate open-source NVR, Coral USB AI accelerator

The Odyssey Blue mini PC based on the ODYSSEY-X86J4125 SBC is now offered as part of a bundle with the Frigate open-source NVR platform, which supports real-time local object detection, and a Coral USB AI accelerator. The Odyssey Blue mini PC is equipped with an Intel Celeron J4125 quad-core Gemini Lake Refresh processor, 8GB RAM, and a 128GB SSD preloaded with an unnamed Linux OS (probably Debian 11) and a Frigate Docker container. The solution can run object detection at 100+ FPS when equipped with a Coral USB accelerator. Since the hardware is not exactly new, and we’ve covered it in detail in the past, even reviewing the earlier generation SBC with Celeron J4105 processor and Re_Computer enclosure, I’ll focus on the software, namely Frigate NVR, in this post. Frigate is an open-source NVR program designed for Home Assistant with AI-powered object detection that runs as a Docker container and uses […]

NanoPi R4SE dual Gigabit Ethernet router adds 32GB eMMC flash

NanoPi R4SE is a variant of the Rockchip RK3399-powered NanoPi R4S dual Gigabit Ethernet router that adds a 32GB eMMC flash instead of only relying on a microSD card for the operating system. Most of the specifications remain the same, with dual GbE and two USB 3.0 ports, but the router is now only offered with 4GB LPDDR4, there’s no option for only 1GB RAM, and the GPIO and USB 2.0 headers are gone. The listed temperature range also changed, from “-20°C to 70°C” to “0°C to 80°C”. NanoPi R4SE specifications: SoC – Rockchip RK3399 hexa-core processor with dual-core Cortex-A72 up to 2.0 GHz, quad-core Cortex-A53 up to 1.5 GHz, Mali-T864 GPU with OpenGL ES1.1/2.0/3.0/3.1, OpenCL, DX11, and AFBC support, 4K VP9 and 4K 10-bit H265/H264 60fps video decoder System Memory – 4GB LPDDR4 Storage – 32GB eMMC flash, MicroSD card slot Networking – 2x GbE, including one native Gigabit […]

Embedded World 2022 – June 21-23 – Virtual Schedule

Embedded World 2020 was a lonely affair with many companies canceling attendance due to COVID-19, and Embedded World 2021 took place online only. But Embedded World is back in Nuremberg, Germany in 2022, albeit with the event moved from the traditional month of February to June 21-23. Embedded systems companies and those that service them will showcase their latest solutions at their respective booths, and there will be a conference with talks and classes during the three-day event. The programme is up, so I made my own little Embedded World 2022 virtual schedule as there may be a few things to learn, even though I won’t be attending. Tuesday, June 21, 2022 10:00 – 13:00 – Rust, a Safe Language for Low-level Programming Rust is a relatively new language in the area of systems and low-level programming. Its main goals are performance, correctness, safety, and productivity. While still ~70% of […]

SkiffOS minimal Linux for embedded containers now supports Sipeed Nezha RISC-V board

SkiffOS, a minimal cross-compiled Linux for embedded containers, has just added support for the Sipeed Nezha RISC-V single board computer, and work on the smaller Sipeed Lichee RV board has started. Wait… What is SkiffOS? I’ve never heard about it… Here’s how the abstract from the white paper describes it: Embedded Linux processors are increasingly used for real-time computing tasks such as robotics and Internet of Things (IoT). These applications require robust and reproducible behavior from the host OS, commonly achieved through immutable firmware stored in read-only memory. SkiffOS addresses these requirements with a minimal cross-compiled GNU/Linux system optimized for hosting containerized distributions and applications, and a configuration layering system for the Buildroot embedded cross-compiler tool which automatically re-targets system configurations to any platform or device. This approach cleanly separates the hardware support from the applications. The host system and containers are independently upgraded and backed-up over-the-air (OTA). In other words, that’s […]