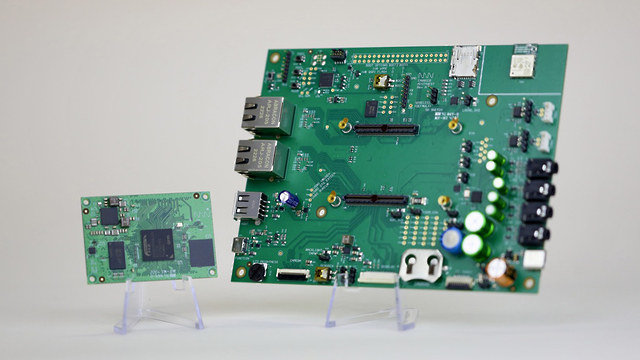

We’ve previously written about several systems-on-module and SBCs based on Renesas RZ/G2L or RZ/V2L Cortex-A55/M33 processors, such as the Geniatech “AHAURA” RS-G2L100 and “AKITIO” RS-V2L100 single board computers, the Forlinx FET-G2LD-C system-on-module, and the SolidRun RZ/G2LC SOM and devkit. But most of those are hard to buy since you need to contact the company, discuss your project, etc… before purchase, the exception being the SolidRun Renesas RZ/G2LC Evaluation Kit going for $249. Another option is the MistyWest MistySOM module offered for $112 and up on GroupGets with either a Renesas RZ/G2L or RZ/V2L processor, as well as an optional carrier board.

MistySOM-G2L (aka MW-G2L) and MistySOM-V2L (aka MW-V2L) system-on-module specifications:

- SoC – Renesas RZ/G2L or RZ/V2L with dual-core Cortex-A55 processor @ 1.2 GHz, Arm Cortex-M33 core @ up to 200 MHz, Arm Mali-G31 GPU, and DRP-AI vision accelerator (RZ/V2L only)
- System Memory – 2GB LPDDR4/DDR4
- Storage – 32GB eMMC flash
- 2x 120-pin high-speed mezzanine […]

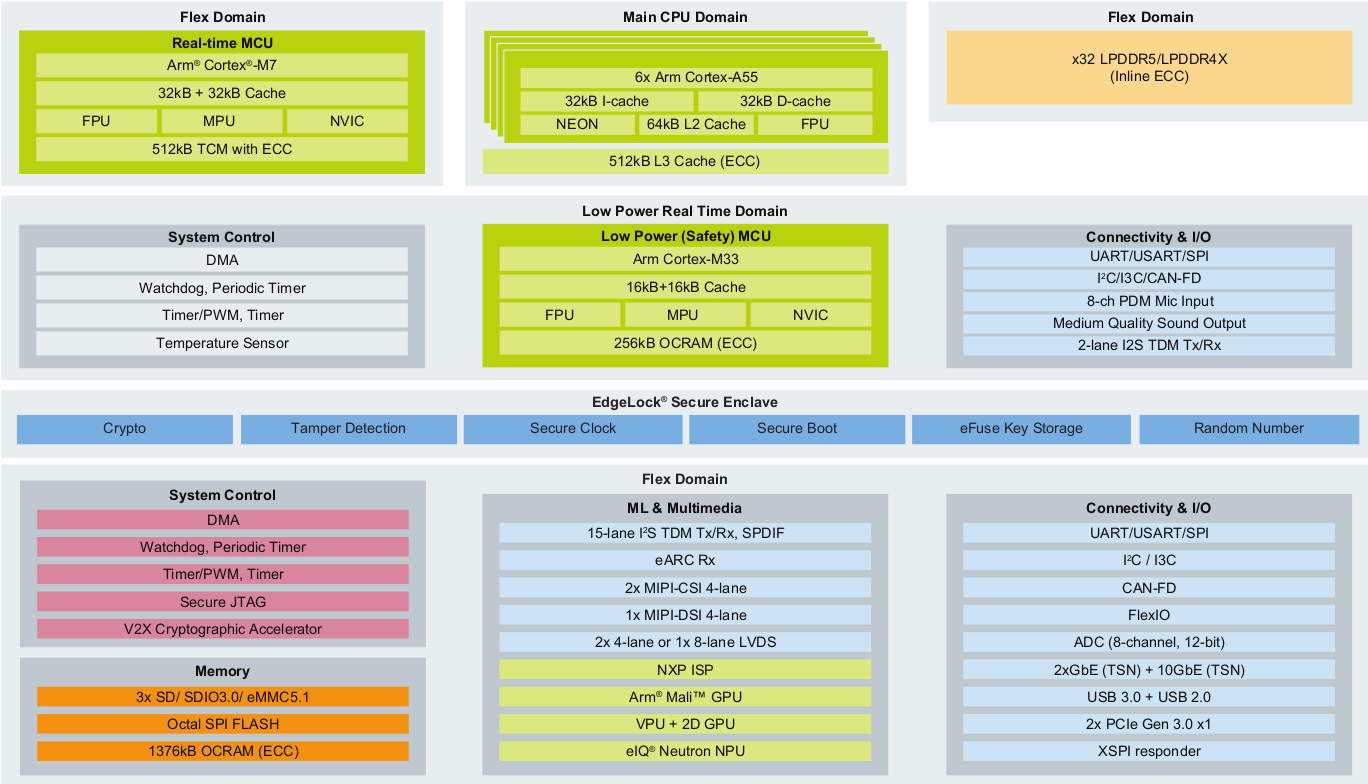

NXP i.MX 95 processor features Cortex-A55, Cortex-M33, and Cortex-M7 cores, eIQ Neutron NPU

NXP i.MX 95 is an upcoming Arm processor family for automotive, industrial, and IoT applications with up to six Cortex-A55 application cores, a Cortex-M33 safety core, a Cortex-M7 real-time core, and the NXP eIQ Neutron Neural Network Accelerator (NPU). We’re only just starting to see NXP i.MX 93 modules from companies like iWave Systems and Forlinx, but NXP is already working on its second i.MX 9 family, the i.MX 95 application processors, equipped with a higher number of Cortex-A55 cores, an Arm Mali 3D GPU, NXP SafeAssure functional safety, 10GbE, support for TSN, and the company’s eIQ Neutron Neural Processing Unit (NPU) to enable machine learning applications.

NXP i.MX 95 specifications:

- CPU
  - Up to 6x Arm Cortex-A55 cores with 32KB I-cache, 32KB D-cache, 64KB L2 cache, 512KB L3 cache with ECC
  - 1x Arm Cortex-M7 real-time core with 32KB I-cache, 32KB D-cache, 512KB TCM with ECC
  - 1x Arm Cortex-M33 […]

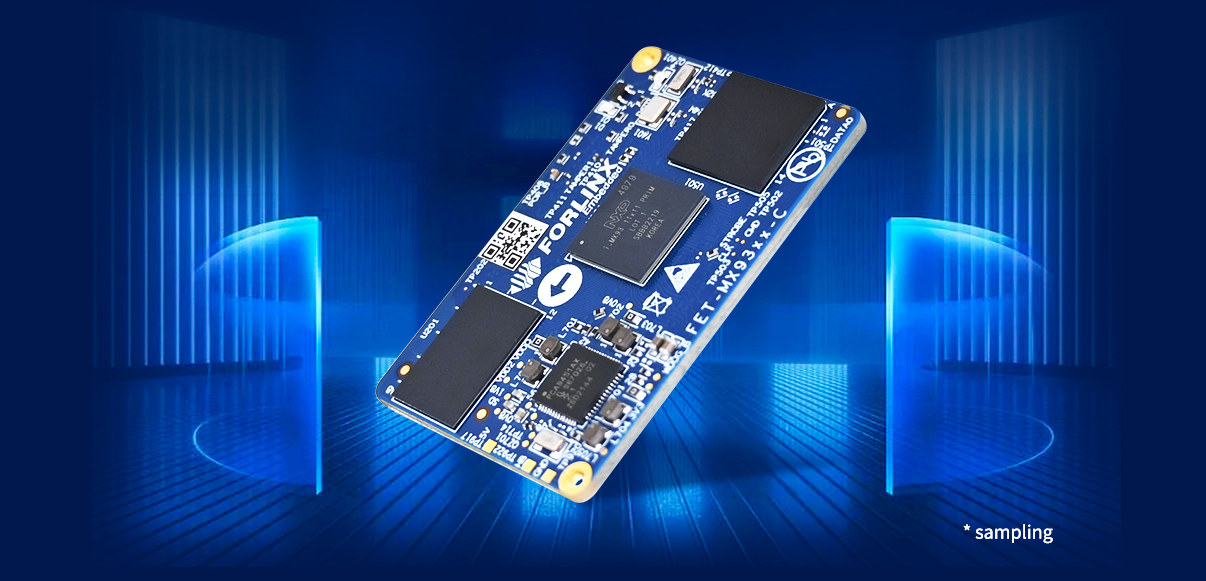

Forlinx FET-MX9352-C – An NXP i.MX 9352 system-on-module for industrial AIoT applications

Forlinx FET-MX9352-C is a system-on-module based on the NXP i.MX 9352 dual Cortex-A55 processor with a Cortex-M33 real-time core and a 0.5 TOPS AI accelerator that can be used for industrial control, IoT gateways, medical equipment, and various applications requiring machine learning acceleration. The FET-MX9352-C follows last week’s announcement of the iWave Systems iW-RainboW-G50M OSM module and SBC with a choice of NXP i.MX 93 processors. The Forlinx module comes with two board-to-board connectors instead of solderable pads and can be found in the OK-MX9352-C single board computer with dual GbE, various display and camera interfaces, RS485 and CAN Bus, etc…

FET-MX9352-C i.MX 9352 system-on-module specifications:

- SoC – NXP i.MX 9352 with 2x Arm Cortex-A55 cores @ up to 1.7 GHz (commercial) or 1.5 GHz (industrial), Cortex-M33 real-time core @ 250 MHz, 0.5 TOPS Arm Ethos-U65 microNPU
- System Memory – 1GB/2GB LPDDR4 RAM
- Storage – 8GB eMMC flash
- 2x high-density 100-pin board-to-board […]

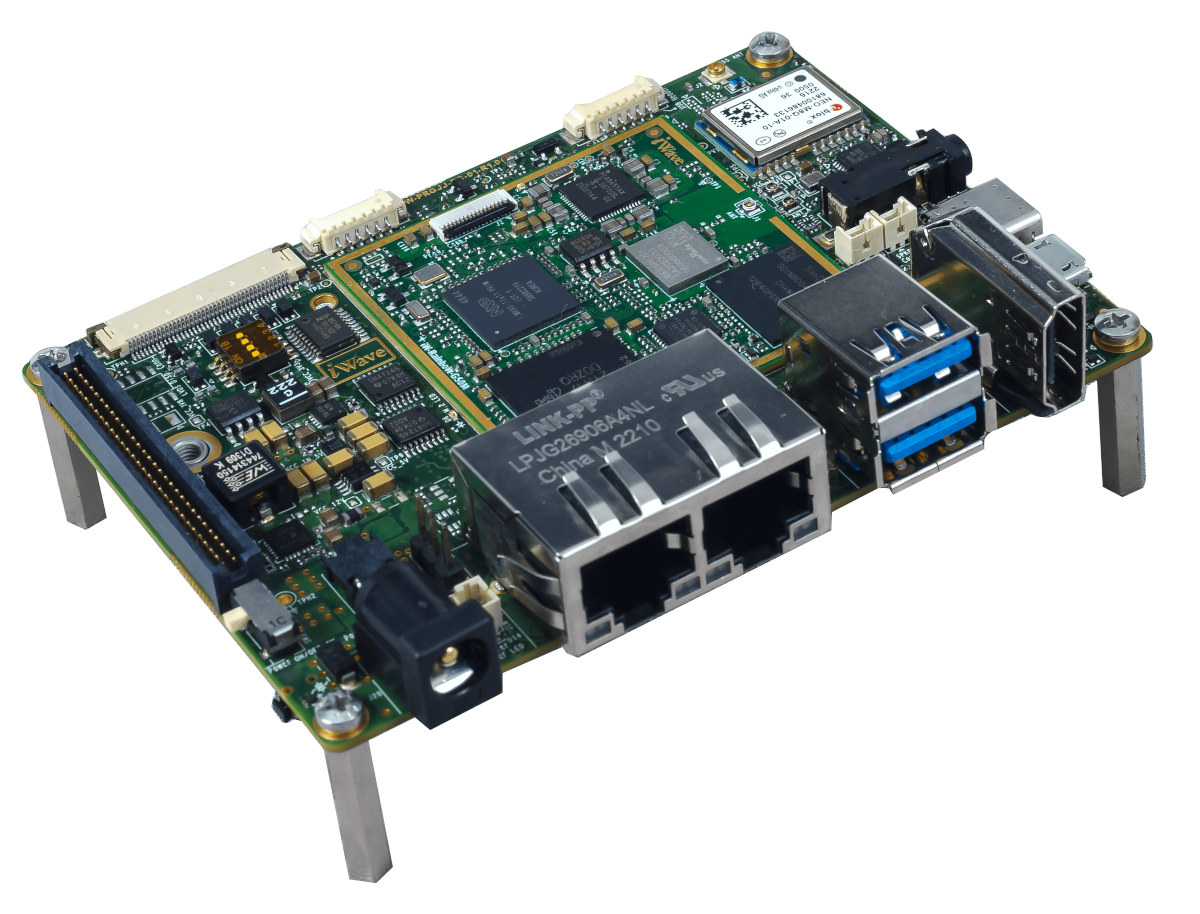

Pico-ITX SBC features NXP i.MX 93 LGA system-on-module

iWave Systems iW-RainboW-G50M is an NXP i.MX 93 OSM-L compliant LGA module with up to 2GB RAM and a WiFi 5 and Bluetooth 5.2 module, and it is found in the company’s iW-RainboW-G50S Pico-ITX SBC designed for industrial applications. The NXP i.MX 93 single and dual-core Cortex-A55 processor with an Ethos-U65 microNPU was announced in November 2021, but we had yet to see any hardware based on the new NXP i.MX 9 processor family. The iW-RainboW-G50M and iW-RainboW-G50S change that with a system-on-module and single board computer.

iW-RainboW-G50M NXP i.MX 93 system-on-module specifications:

- SoC (one or the other)
  - NXP i.MX 9352 dual-core Cortex-A55 processor @ up to 1.7 GHz with Arm Cortex-M33 @ 250 MHz, 0.5 TOPS NPU
  - NXP i.MX 9351 single-core Cortex-A55 processor @ up to 1.7 GHz with Arm Cortex-M33 @ 250 MHz, 0.5 TOPS NPU
  - NXP i.MX 9332 dual-core Cortex-A55 processor @ up to 1.7 GHz with Arm Cortex-M33 @ […]

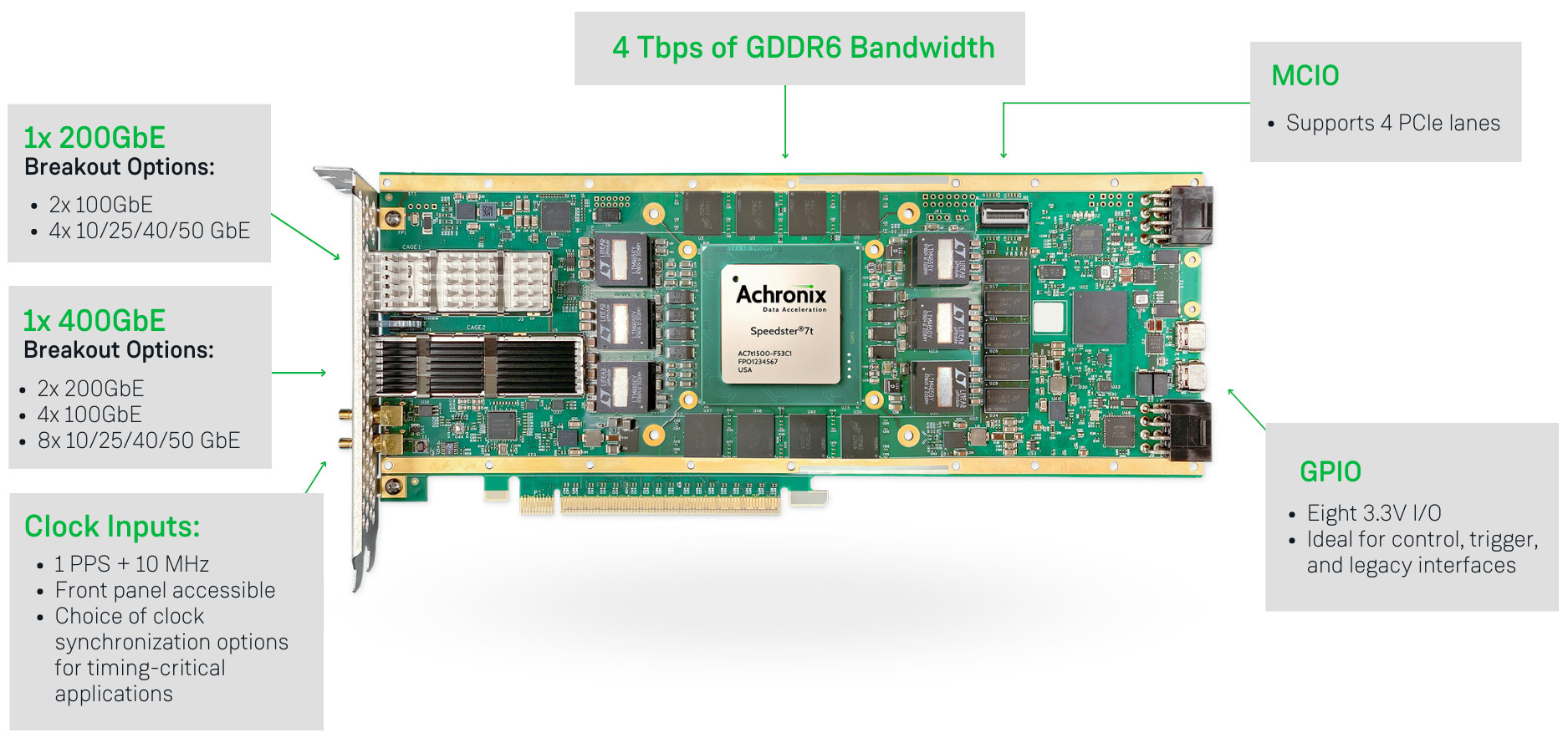

Achronix Speedster7t AC7t1500 FPGA is now available for high-bandwidth applications

Achronix Semiconductor has recently announced the general availability of the Speedster7t AC7t1500 FPGA designed for networking, storage, and compute (AI/ML) acceleration applications. The 7nm Speedster7t FPGA family offers PCIe Gen5 ports and GDDR6 and DDR5/DDR4 memory interfaces, delivers up to 400 Gbps on the Ethernet ports, and includes a 2D network on chip (2D NoC) that can handle 20 Tbps of total bandwidth.

Achronix Speedster7t highlights:

- Two-dimensional network on chip (2D NoC) enabling high-bandwidth data flow throughout and between the FPGA fabric and hard I/O and memory controllers and interfaces
- MLP (Machine Learning Processor) blocks with arrays of multipliers, adder trees, accumulators, and support for both fixed and floating-point operations, including direct support for TensorFlow’s bfloat16 format and block floating-point (BFP) format
- Multiple PCIe Gen5 ports
- High-speed SerDes transceivers, supporting 112 Gbps PAM4 and 56 Gbps PAM4/NRZ modulation, as well as lower data rates
- Hard Ethernet MACs that support […]

ArduCam Mega – A 3MP or 5MP SPI camera for microcontrollers (Crowdfunding)

ArduCam Mega is a 3MP or 5MP camera specifically designed for microcontrollers with an SPI interface, and the SDK currently supports Arduino UNO and Mega2560 boards, ESP32/ESP8266 boards, Raspberry Pi Pico and other boards based on the RP2040 MCU, BBC Micro:bit V2, as well as STM32 and MSP430 platforms. Both cameras share many of the same specifications including their size, but the 3MP model is a fixed-focus camera, while the 5MP variant supports autofocus. Potential applications include asset monitoring, wildfire monitoring, remote meter reading, TinyML applications, and so on.

ArduCam Mega specifications:

- Camera Type
  - 3MP with fixed focus
  - 5MP with auto-focus from 8cm to infinity
- Optical size – 1/4-inch
- Shutter type – Rolling
- Focal ratio
  - 3MP – F2.8
  - 5MP – F2.0
- Still Resolutions
  - 320×240, 640×480, 1280×720, 1600×1200, 1920×1080
  - 3MP – 2048×1536
  - 5MP – 2592×1944
- Output formats – RGB, YUV, or JPEG
- Wake-up time
  - 3MP – […]

T-Camera S3 – An ESP32-S3 board with camera, display, PIR motion sensor, and microphone

LilyGO has launched the T-Camera S3, a new ESP32-S3 WiFi & BLE camera board that also features a small display, a PIR motion sensor, and a microphone, as well as an optional plastic shell. The T-Camera S3 is an evolution of the TTGO T-Camera ESP32 board introduced in 2019 with many of the same features, but the ESP32 microcontroller has been replaced with an ESP32-S3 microcontroller with vector extensions that make it suitable for machine learning and computer vision applications. The new board also comes with a larger 16 MB SPI flash, more I/Os, and a few other small changes.

T-Camera S3 specifications:

- ESP32-S3-WROOM-1 wireless module
  - SoC – ESP32-S3FN16R8 dual-core Tensilica LX7 microcontroller @ 240 MHz (Note: this SKU is not listed in the official ESP32-S3 datasheet) with 2.4 GHz 802.11n WiFi 4 and Bluetooth 5.0 LE connectivity
  - Memory – 8MB PSRAM
  - Storage – 16MB SPI flash
- Camera – 2MP […]

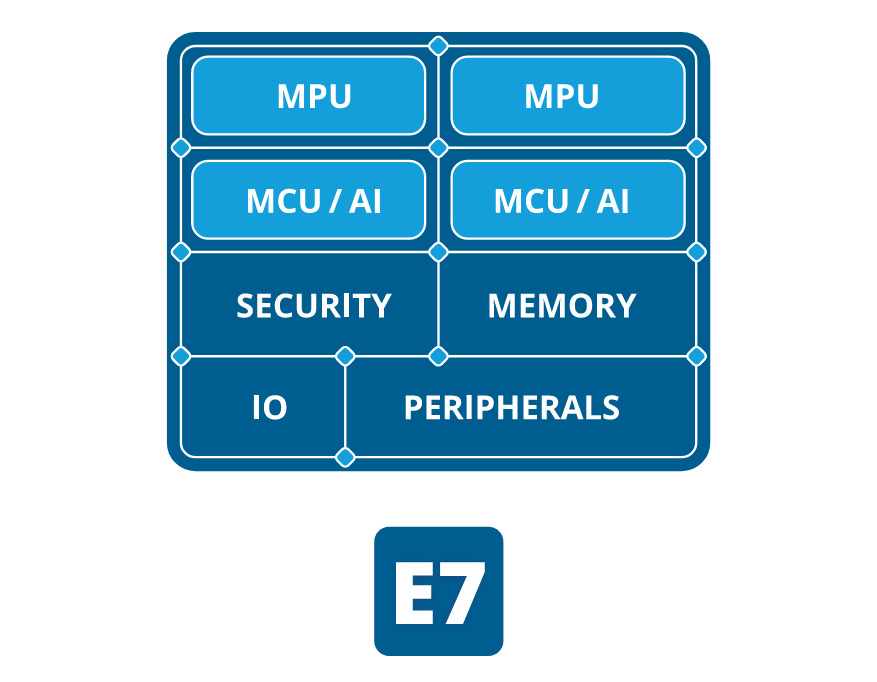

Alif Ensemble Cortex-A32 & Cortex-M55 chips feature Ethos-U55 AI accelerator

Alif Semiconductor’s Ensemble is a family of processors and microcontrollers based on Arm Cortex-A32 and/or Cortex-M55 cores, one or two Ethos-U55 AI accelerators, and plenty of I/Os and peripherals. Four versions are available as follows:

- Alif E1 single-core MCU with one Cortex-M55 core @ 160 MHz, one Ethos-U55 microNPU with 128 MAC/c
- Alif E3 dual-core MCU with one Cortex-M55 core @ 400 MHz, one Cortex-M55 core @ 160 MHz, one Ethos-U55 with 256 MAC/c, one Ethos-U55 with 128 MAC/c
- Alif E5 triple-core fusion processor with one Cortex-A32 core @ 800 MHz, one Cortex-M55 core @ 400 MHz, one Cortex-M55 core @ 160 MHz, one Ethos-U55 with 256 MAC/c, one Ethos-U55 with 128 MAC/c
- Alif E7 quad-core fusion processor with two Cortex-A32 cores @ 800 MHz, one Cortex-M55 core @ 400 MHz, one Cortex-M55 core @ 160 MHz, one Ethos-U55 with 256 MAC/c, one Ethos-U55 with […]