ReSpeaker 4-mic array is a Raspberry Pi HAT with four microphones that can work with voice services such as Google Assistant or Amazon Alexa. It was launched in 2017, so there is nothing new on the hardware front.

What’s new is that the expansion board is now supported by Picovoice, which works much like other voice assistants, except that it lets people create custom wake words and runs voice recognition offline.

Picovoice is described as an end-to-end platform for building customized voice products with processing running entirely on-device. It is cross-platform, is said to be more resilient to noise and reverberation, and, thanks to running offline, offers low latency and complies with HIPAA and GDPR privacy regulations.

The platform consists of two main engines:

- Porcupine, a lightweight wake word engine that supports custom wake words trained through the Picovoice Console. The engine can listen for multiple wake words and is cross-platform, with support for Raspberry Pi, BeagleBone, Android, iOS, Linux (x86_64), macOS (x86_64), and Windows (x86_64).

- Rhino, a Speech-to-Intent engine that understands naturally spoken commands.

For example, with a smart coffee machine in your home, you could wake up your smart speaker with a custom wake word handled by Porcupine, such as “Hey home sweet home”, and then ask it to make you a cup of coffee through Rhino: “make me a cup of coffee”. It’s also possible to combine both:

Hey home sweet home, make me a cup of coffee
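To make the two-stage flow more concrete, here is a minimal sketch of how audio frames could be routed first to a wake word stage and then to an intent stage. It is not Picovoice code: `run_voice_pipeline`, `is_wake_word`, and `infer_intent` are hypothetical names, and the two callables merely stand in for Porcupine and Rhino.

```python
from typing import Callable, Iterable, List, Optional

Frame = List[int]  # one chunk of audio samples


def run_voice_pipeline(
    frames: Iterable[Frame],
    is_wake_word: Callable[[Frame], bool],          # hypothetical stand-in for Porcupine
    infer_intent: Callable[[Frame], Optional[str]],  # hypothetical stand-in for Rhino
) -> List[str]:
    """Feed frames to the wake word stage until it fires, then to the
    intent stage until it returns an intent, then go back to waiting."""
    intents: List[str] = []
    awake = False
    for frame in frames:
        if not awake:
            # Stage 1: only the wake word detector sees the audio.
            awake = is_wake_word(frame)
        else:
            # Stage 2: the intent engine consumes frames until it decides.
            intent = infer_intent(frame)
            if intent is not None:
                intents.append(intent)
                awake = False  # return to listening for the wake word
    return intents
```

With toy detectors such as `lambda f: f == [1]` for the wake word and `lambda f: "makeCoffee" if f == [2] else None` for the intent, the frames `[[0], [1], [0], [2]]` yield a single `"makeCoffee"` intent. A command spoken before the wake word is simply ignored, which is the point of gating the intent stage this way.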

Seeed Studio updated their Wiki to show how to use both Picovoice’s Porcupine and Rhino using a Python demo running on Raspberry Pi plus ReSpeaker 4-mic array. The demo source code can also be found on GitHub. It supports nine different wake words: Alexa, Bumblebee, Computer, Hey Google, Hey Siri, Jarvis, Picovoice, Porcupine, and Terminator.

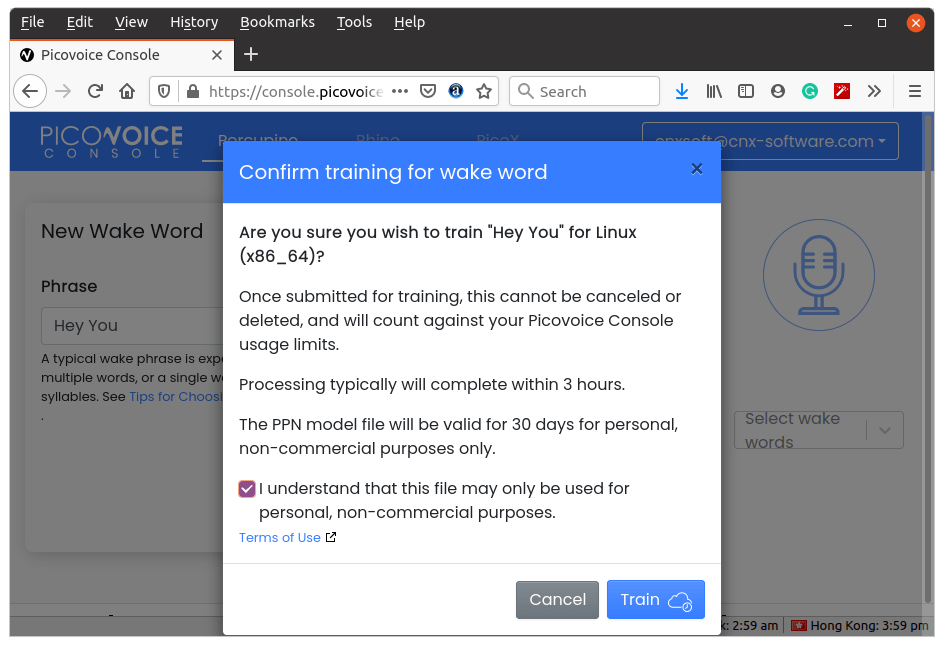

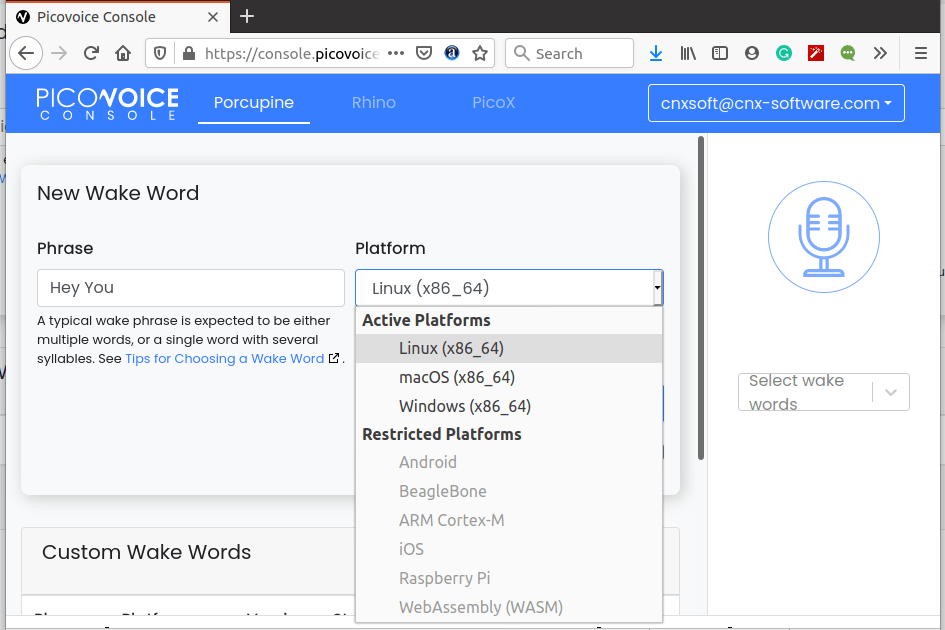

I decided to try the Picovoice Console to see what the process of creating a custom wake word looks like. During registration, you’ll be asked for your email address and whether you are an individual or represent a company aiming to create a commercial product. After registration, you’re asked to select the Porcupine wake word engine or the Rhino Speech-to-Intent engine.

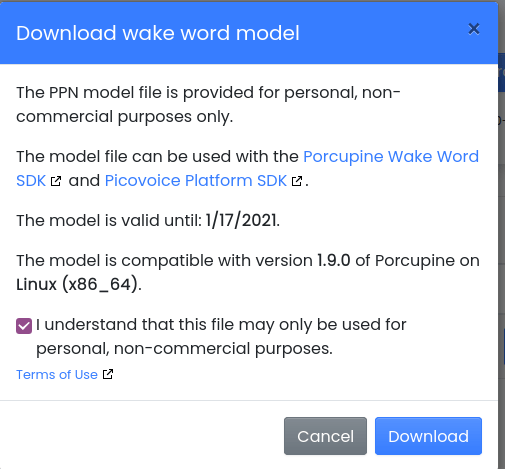

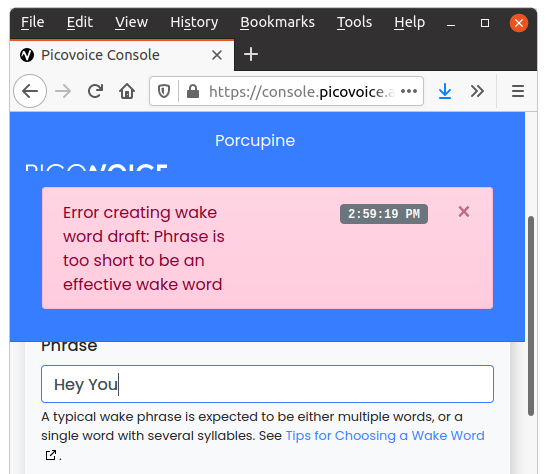

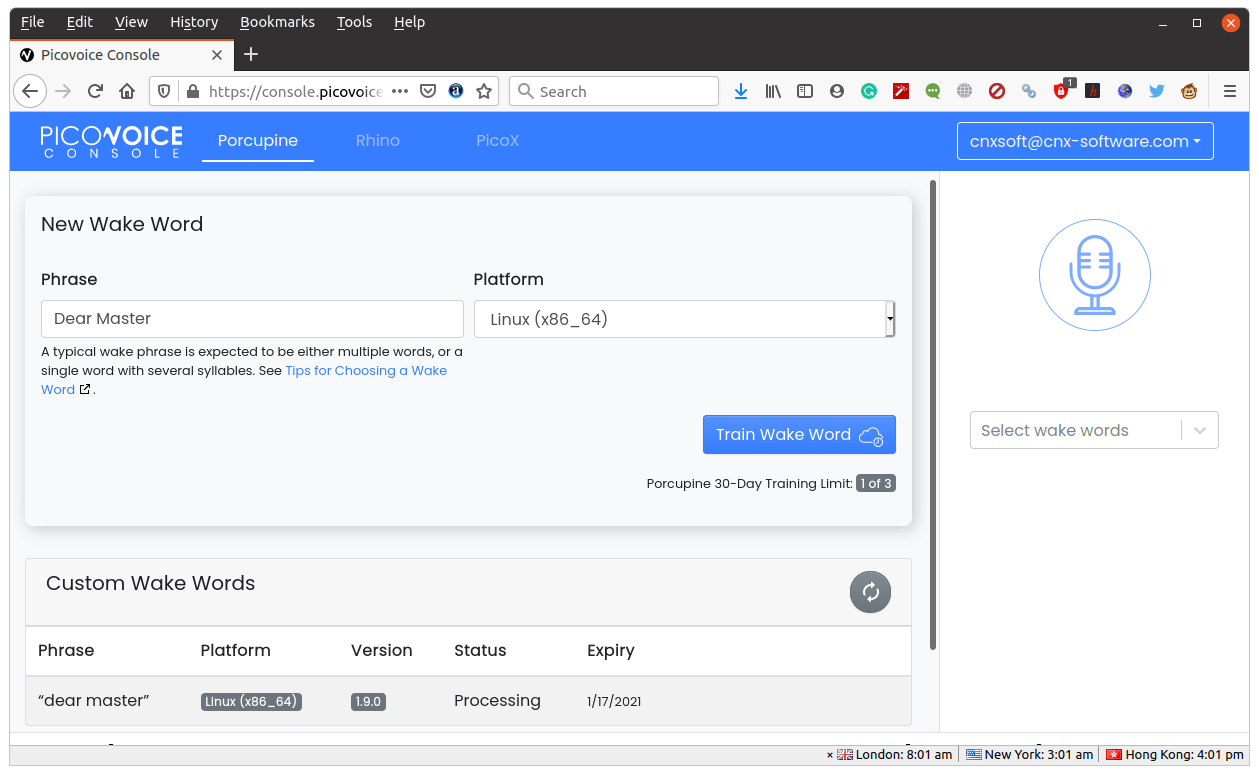

Let’s go with Porcupine since I’d like to make a custom keyword. I tried “Hey You”. For some reason, we have to select the OS and target, and in this case I left the default: Linux (x86_64).

It’s possible to select other platforms, but with a personal account only x86 64-bit OSes are active (Linux, Windows, macOS), while Android, BeagleBone, Arm Cortex-M, iOS, Raspberry Pi, and WebAssembly are all restricted. I suppose that the restrictions are only lifted for commercial accounts.

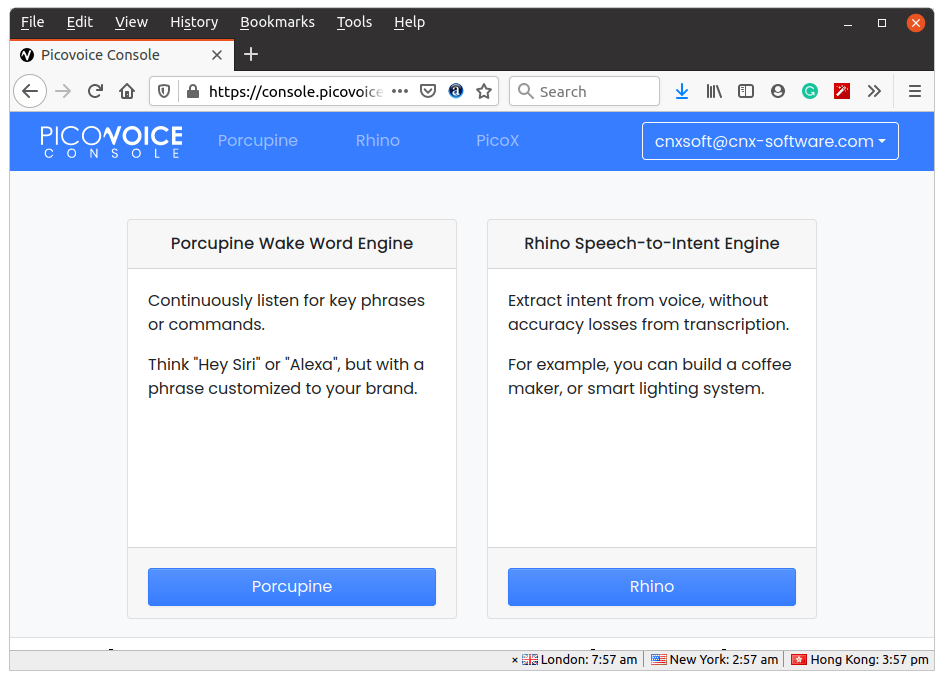

Personal accounts can only use custom wake words for 30 days, and there is a limit of three trainings per month. Let’s click on Train.

It did not like my wake word since “you” is too short. So I decided to be a good consumer and be submissive to my smart home, and changed that to “dear master”.

We’re told the process can take up to 3 hours, but I received a confirmation email within 20 minutes:

Your wake word (“dear master”) has finished training.

You can test the wake word in-browser and download the model file at https://console.picovoice.ai/ppn

I’m not sure how they did it, because I’ve always read making a custom wake word takes time and requires thousands of voice samples.

My file is called “dear_master_linux_2021-01-17-utc_v1_9_0.ppn” and is only 3.1KB. It came in a zip file with a text file containing the “Picovoice Console Personal Account License Agreement”.

Once the file is downloaded, you can integrate it with the Porcupine Wake Word SDK and the Picovoice Platform SDK, whose documentation can be found here.
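As a rough idea of what that integration could look like in Python, here is a sketch built around the `pvporcupine` package. I did not run it against my own file, so treat it as an outline under a few assumptions: the engine object exposes `frame_length` and a `process()` method that returns the index of the detected keyword or -1, and newer SDK releases expect an `access_key` argument that the 1.9-era release matching my file does not take. The helper names `split_into_frames`, `first_detection`, and `open_porcupine` are mine, not part of the SDK.

```python
from typing import Iterator, List, Optional, Sequence, Tuple


def split_into_frames(pcm: Sequence[int], frame_length: int) -> Iterator[List[int]]:
    """Yield consecutive full frames of samples; a trailing partial frame is dropped."""
    for start in range(0, len(pcm) - frame_length + 1, frame_length):
        yield list(pcm[start:start + frame_length])


def first_detection(engine, pcm: Sequence[int]) -> Optional[Tuple[int, int]]:
    """Return (frame_index, keyword_index) for the first detection, or None.

    `engine` is anything with a `frame_length` attribute and a
    `process(frame)` method returning a keyword index or -1.
    """
    for frame_index, frame in enumerate(split_into_frames(pcm, engine.frame_length)):
        keyword_index = engine.process(frame)
        if keyword_index >= 0:
            return frame_index, keyword_index
    return None


def open_porcupine(keyword_path: str, access_key: Optional[str] = None):
    """Create a Porcupine engine from a downloaded .ppn file.

    Assumption: newer pvporcupine releases require `access_key`,
    while older ones do not accept it, so it is only passed when given.
    """
    import pvporcupine  # third-party: pip install pvporcupine

    kwargs = {"keyword_paths": [keyword_path]}
    if access_key is not None:
        kwargs["access_key"] = access_key
    return pvporcupine.create(**kwargs)
```

In practice you would call `open_porcupine("dear_master_linux_2021-01-17-utc_v1_9_0.ppn")`, pass the engine and 16-bit mono samples recorded at the engine's `sample_rate` to `first_detection()`, and call the engine's `delete()` method when done. Keeping the detection loop independent of the SDK also means it can be exercised with a fake engine when no microphone or model file is at hand.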

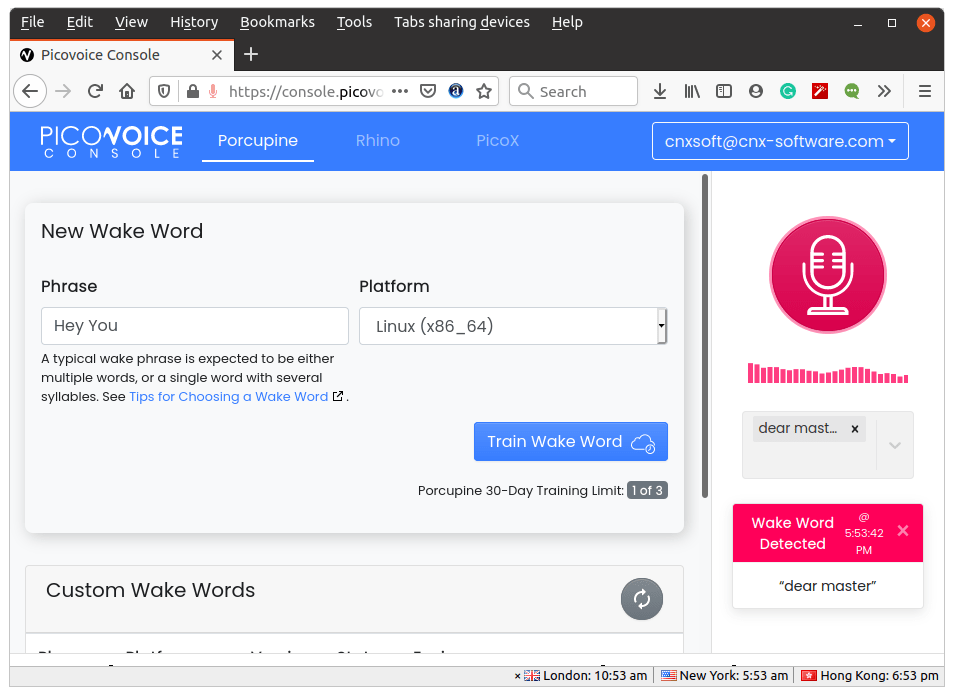

For a quick check, you can also test your new wake word in the web browser. It worked fine for me after adjusting my laptop’s microphone volume properly.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.

Hi Jean-Luc,

which version of Raspberry Pi do you use for the demo?

Best,

It’s not my demo. But based on the cables used it looks like Raspberry Pi 3B or 3B+.

Here’s open source code to do the same thing…

https://github.com/nyumaya/nyumaya_audio_recognition

Nice, but I can see custom wake word is still a paid option for commercial projects.

I think that is just if you want him to build the model for you. Last time I played with it you could train it yourself too. The problem with training it yourself is that you need to have a lot of samples of people speaking.

If you don’t have those samples there is another project on github that will take a few samples and then distort them 1000s of different ways, then you can train on those samples. Not as good as real samples, but good enough for home use.

It is not an insignificant amount of work to train one of these models.

With Picovoice, I could just type the wake word I wanted with zero audio samples, and they trained it in 20 minutes. So I’m guessing they used text to speech technology to generate a thousand or so audio samples in order to create a model for my wake word.

Hi Jon, thanks this is interesting

“If you don’t have those samples there is another project on github that will take a few samples and then distort them 1000s of different ways, then you can train on those samples. Not as good as real samples, but good enough for home use.”

Can you share the link please?

Thanks

I can’t locate the one I originally played with, it is somewhere on github.

Here are two similar ones…

https://github.com/facebookresearch/WavAugment

And the other one

https://ai.googleblog.com/2019/04/specaugment-new-data-augmentation.html

I found the one I used….

https://github.com/JohannesBuchner/spoken-command-recognition

Super.

Currently we are looking for a wake word engine for our project. I’m checking all the possibilities for having a custom trigger word on web and Android… it is not as simple as one might imagine (especially for non-English words). I’ve been studying this topic for a while, and this is the first time somebody has mentioned nyumaya. Now I have some new reading to do. Thanks for sharing, Jon.

This ReSpeaker HAT (and the other versions, for that matter) also works perfectly with Mycroft A.I., as demoed here:

https://youtu.be/ZuIyGyileYk

Picovoice can be used as an alternative wake word engine instead of Precise, as can many others.

Amazon has an extremely good wake word engine available if you have a commercial Alexa Device developer account with them. I keep hoping they will open source it instead of keeping it hidden behind a wall of paid consultants. If you want to use it you have to hire one of their third party consultants and pay them $$$$ to ‘integrate’ it for you.

Espressif is using this engine in their Alexa support.

Last I checked, you have to re-register your hotword with Picovoice every month.