Bootlin has just submitted the first patchset for the Allwinner V3 image signal processor (ISP) driver in mainline Linux, which should pave the way for completely open-source, blob-free camera support in Linux using V4L2.

There are several blocks in an SoC for camera support, including a camera input interface such as MIPI CSI-2 and an ISP to process the raw data into a usable image. Add to this the need to implement the code for sensors, and there’s quite a lot of work to get it all working.

The Allwinner SDK comes with several binary blobs, i.e. closed-source binaries, but Bootlin is working on making those obsolete. The company first worked on Allwinner A31, V3s/V3/S3, and A83T MIPI CSI-2 support for the camera interface driver in the V4L2 framework (and Rockchip PX30, RK1808, RK3128, and RK3288 processors), and also implemented support for Omnivision OV8865 and OV5648 image sensors earlier this year.

Paul Kocialkowski has just published a blog post announcing initial support for the Allwinner V3 “Hawkview” ISP in mainline Linux. In combination with the earlier work we’ve just mentioned, the company was able to implement a proper V4L2 driver for the Allwinner V3’s ISP that is completely open-source, with no binary blob involved. You can check out the patchset submission thread for additional information. What may be missing is H.264 video encoding, since the company only got funding for developing H.264 and H.265 decoding on Allwinner processors when the initiative for an open-source Allwinner VPU driver was introduced over three years ago.

Paul notes that the currently proposed Allwinner ISP driver only supports debayering with coefficients and 2D noise filtering, which represent only a subset of the features of the 8M Hawkview ISP. According to the Allwinner V3 datasheet, the ISP supports spatial de-noise, chrominance de-noise, zone-based AE/AF/AWB statistics, black level correction, lens shading correction, color correction, and anti-flick detection statistics.
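To give a rough idea of what debayering involves, here is a minimal software sketch in Python. It is not taken from the Bootlin driver, where the work is done by the ISP hardware. It assumes an RGGB Bayer layout, 8-bit samples, and a half-resolution output, and it treats the “coefficients” as simple per-channel gains, which is an assumption made for illustration only. The function name `debayer_rggb` is hypothetical.

```python
def debayer_rggb(raw, gains=(1.0, 1.0, 1.0)):
    """Collapse each 2x2 RGGB cell of a raw frame into one RGB pixel.

    raw   -- list of rows of 8-bit samples, assumed RGGB layout:
             R G
             G B
    gains -- hypothetical per-channel (R, G, B) coefficients
    Returns a half-resolution image as a list of rows of (r, g, b) tuples.
    """
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("frame dimensions must be even")
    r_gain, g_gain, b_gain = gains

    def clamp(value):
        # Keep the result within the 8-bit range after applying the gain.
        return max(0, min(255, round(value)))

    rgb = []
    for y in range(0, height, 2):
        row = []
        for x in range(0, width, 2):
            red = raw[y][x]
            # Two green samples per cell: average them.
            green = (raw[y][x + 1] + raw[y + 1][x]) / 2
            blue = raw[y + 1][x + 1]
            row.append((clamp(red * r_gain),
                        clamp(green * g_gain),
                        clamp(blue * b_gain)))
        rgb.append(row)
    return rgb
```

A real ISP interpolates the missing colors at full resolution rather than collapsing cells, so this only shows the principle.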

Nevertheless, the currently implemented features were sufficient for Bootlin’s use case, and they are considering adding statistics support in order to implement the 3A algorithms (auto-focus, auto-exposure, and auto-white-balance) needed for automatic configuration of scene-specific parameters. Support for these may eventually end up in the libcamera open-source library.
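As an illustration of how statistics feed a 3A algorithm, the sketch below computes white-balance gains with the textbook “gray world” method. This is a generic technique, not the algorithm Bootlin or Allwinner use. The statistics format (one average R, G, B triple per zone) and the function name `gray_world_gains` are assumptions, since the layout of the Hawkview statistics is not described here.

```python
def gray_world_gains(zone_means):
    """Estimate white-balance gains from per-zone color averages.

    zone_means -- list of (r, g, b) averages, one tuple per zone
                  (hypothetical format for the ISP statistics)
    Returns (r_gain, g_gain, b_gain), normalized so green stays at 1.0.
    """
    count = len(zone_means)
    if count == 0:
        raise ValueError("at least one zone is required")

    # Average each channel over all zones.
    avg_r = sum(zone[0] for zone in zone_means) / count
    avg_g = sum(zone[1] for zone in zone_means) / count
    avg_b = sum(zone[2] for zone in zone_means) / count
    if avg_r <= 0 or avg_b <= 0:
        raise ValueError("red and blue averages must be positive")

    # Gray world: assume the scene averages to gray, so scale red and
    # blue until their averages match the green average.
    return (avg_g / avg_r, 1.0, avg_g / avg_b)
```

In a real pipeline, gains like these would be written back to the ISP for the following frames, which is why such code tends to be tied to a specific ISP.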

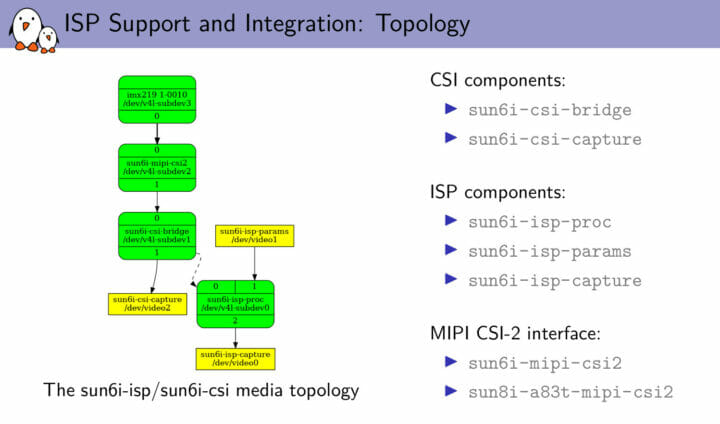

Paul also happens to have given a talk about “Advanced Camera Support on Allwinner SoCs with Mainline Linux” at the Embedded Linux Conference 2021 earlier this week, so you may want to check out the presentation slides if you are interested in the details. The ISP-specific part starts on page 35.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.


This is very impressive work by bootlin. I am glad to see it being contributed. If other SoCs would start to fall in line, this could be a very useful shift in open source for these chips.

I was about to say the same. It’s fortunate that there are companies like bootlin taking the lead on such painful tasks. Even if they do it in a crowdfunding way to limit the costs, it’s still great that at least someone engages on this road.

What I really don’t understand is, since they have to develop a working driver for their customers and pay someone to do it, why Allwinner don’t hire bootlin or someone else with their expertise and publish an open source driver from start. I think that a mainline driver is the best choice in the long term, since their modified kernels usually become obsolete quite quickly.

I agree with you. Companies license stock hardware IP, so why not standard drivers for such hardware? ARM claims there is no demand for such drivers, yet companies produce the drivers mentioned here.

Would you buy a Windows 10 or 11 motherboard if the company never provided a BIOS or drivers for it?

AFAIK ARM claimed there was no demand for drivers for *their gpus*.

I doubt they give a crap about how a vendor supports junk IP they added to the cores they licensed from ARM as long as they pay for the ARM bits. If they did they wouldn’t have started licensing their stuff to anyone but the NXPs etc of the world in the first place.

We don’t know what company paid bootlin to do this. It could be a just for fun effort. Doing these sorts of things looks good for bootlin when scouting for customers that want similar work done.

A few years ago, Arm was not impressed by reverse-engineering efforts on Mali 400 GPUs. But they are apparently helping with Panfrost development to some levels: https://www.cnx-software.com/2020/09/18/arm-officially-supports-panfrost-open-source-mali-gpu-driver-development/

Collabora did not want to tell me whether Arm paid them or not. So it may just be related to documentation.

And even if ARM participates it’s very likely that they do not want it to be officially known. They probably make a lot of revenue from SDKs, training etc and do not want their customers to start to think “let’s not buy the new IP and wait for someone else to port it to mainline first, then we’ll save a lot of money”. The current situation makes it much less certain that this day ever happens so it’s easier for them to sell their sauce. It’s quite sad for end users, but most hardware vendors have no care at all for end users. And that will certainly not improve with nvidia…

I think ARM cares a bit about OSS. I wonder if the GPU driver change of heart was because they realised having a driver setup that only really works with Android was hurting them.

I don’t think that means they care about drivers for 3rd party IPs though. I suspect a lot of the reason for ATF existing is to hide closed drivers there and have the OSS part of the driver in the kernel just be a shim. A sort of remix of the shim module scheme nvidia uses.

Then why don’t customers fund bootlin to write blob-free GPU drivers? Free to funders, with a royalty fee for companies. Make the devils pay 😀😋

That’s not how the GPL works. As soon as you distribute binaries you need to start giving people sources too. If not, the drivers are no better than the blobs from vendors.

And what you’re saying is one of my biggest complaints with OSS: people thinking they decide what other people should do with their time.

“I want X and you should do it for me because you’re an OSS guy right?”. If you personally want opensource GPU drivers then either get on it or pay bootlin or someone to do it for you.

>why Allwinner don’t hire bootlin or someone else with their expertise and publish an open source driver from start.

In a lot of cases Allwinner didn’t come up with the IP in the first place.

They bought the IP from somewhere and it had a reference Linux driver with it, someone at AW hacked it up to remove the original source of the IP from it etc, and then they shipped that with their 4.x series kernel.

The only way to make AW care about mainlining is to get a big customer like google on their books and have that customer refuse to buy millions of dollars worth of parts unless mainlining happens.

This never ending problem is rooted in these fabless SOC companies being run by ‘chipheads’. Chipheads are the hardware engineering managers that run these companies. Their background is “ship and forget”. Get a chip out of the door, address the errata, and move onto the next chip. Software is a necessary nuisance that gets in the way of shipping chips. They put the software people into the chip design teams causing the software people to also practice “ship and forget”.

“Ship and forget” makes perfect sense from the hardware viewpoint. And it is totally screwed up from the software one. For a ‘chiphead’ more is better. The more SOC designs they can ship the better the world appears to them. As long as the software is limping along they are happy. They equate shipping a new SOC to revenue.

My personal view point is that it would be better to have fewer SOC variations with better software support. Reduce the number of new SOC designs and extend the life time of the older ones. But try telling that to a ‘chiphead’ whose entire life is focused on making new SOC designs.

Intel got this right by accident. Since everything is compatible the software has had decades to mature.

Would be nice if their “ship and forget” started shipping to mainline Linux rather than a single fork of their own. A lot more people would be fine with that. I imagine the GPU driver situation could place some limits on what the chipheads can use though.

Would you pay 1 or 2 pennies more for the device you buy? (We know tax and revenue are complicated.) If a chip maker sold 500,000 SoCs, that would be 500,000 pennies before tax at 1 penny each. So how much software support could that buy for an SoC? How much for Armbian to support that SoC (as long as Armbian is given documentation and hardware details)?

It’s not a money problem, it is a proliferation and management problem. Once the ‘chiphead’ manager ships the SOC he pulls all of the software people off from it and reassigns them to the next SOC design. This results in close to zero work being done on the code from the first SOC after it ships.

Allwinner is an example of a company that is on the path to self destruction over “ship and forget”. They are proliferating new SOC designs far faster than their pitiful software effort can keep up with.

The end game of “chiphead” management is Broadcom. Broadcom ends up with only two or three customers per chip. They are so extreme they don’t even want to talk to you unless you have $1M+ to spend on chips. In the extreme form ‘chipheads’ are building ASICs for each large customer.

In the ARM world NXP is the leader in building a long term business. They don’t proliferate chips as fast and they provide much better software. This longer time horizon results in many more design wins per chip. Espressif is also a company that has slower device proliferation and much better software support.

Another factor at play is excessive secrecy with source code. Companies should be more intelligent about the difference between keeping IP secret from competitors (who likely already know how to build the secret feature) and preventing customers from getting their products working. For example, why is h.264 encode/decode driver source code still secret? h.264 is twenty years old. Pretty sure all of the competitors have figured everything out. Customers don’t want this source code to learn your “secrets”; they want it so that they can fix bugs in it that you won’t fix for them!

I’m curious about this space, but I’m a bit confused with the wording

“spatial de-noise, chrominance de-noise, zone-based AE/AF/AWB statistics, black level correction, lens shading correction, color correction, and anti-flick detection statistics.”

These all don’t seem particularly computationally intensive. Is the idea that these chips have dedicated in-hardware circuitry to do these steps? Or that the binary blobs provided by Allwinner were doing this in software?

“in order to implement 3A algorithms (auto-focus, auto-exposition, and auto-white-balance) needed for automatic configuration of scene-specific parameters. Support for these may eventually end up in libcamera open-source library.”

Is this saying that support will just be re-implemented in software? (surely that already exists)

Doing this on frames with high dimensions and high frame rates is actually very intensive and definitely cannot be done in software. This is why the ISP does this in hardware.

To give you an idea, you can try https://github.com/cruxopen/openISP which is a purely software implementation and see how long it takes to process one frame 😉

The implementations of 3A algorithms are typically hardware-specific because the way statistics are collected and how configuration parameters are applied are specific to the ISP. The general ideas behind the algos are in the literature but free implementations are rare.

Ah okay, yeah .. I’m not used to thinking in full-res. But yeah, at 1080p@60fps that’s a lot of pixels to crunch. The CPU wouldn’t have time for anything else 🙂

Thanks for the info and the reality check :)). And thank you for your work on this
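For a rough sense of scale, the arithmetic behind the 1080p@60fps remark above can be sketched as follows. The 1 GHz single-core clock is an assumed round figure for illustration, not a measured value for any particular SoC, and the function names are hypothetical.

```python
def pixel_rate(width, height, fps):
    """Number of pixels per second the pipeline has to process."""
    return width * height * fps


def cycles_per_pixel(cpu_hz, width, height, fps):
    """CPU clock cycles available per pixel if one core did all the work."""
    return cpu_hz / pixel_rate(width, height, fps)


if __name__ == "__main__":
    rate = pixel_rate(1920, 1080, 60)
    budget = cycles_per_pixel(1_000_000_000, 1920, 1080, 60)  # assumed 1 GHz core
    print(f"{rate} pixels per second")
    print(f"about {budget:.1f} cycles per pixel")
```

That works out to roughly 124 million pixels per second, or about 8 clock cycles per pixel on the assumed core, to cover every processing stage as well as memory access.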