Calxeda has released the results of an ApacheBench benchmark comparing its ARM-based EnergyCore solution to an Intel Xeon server, in order to showcase the performance and much lower power consumption of its servers.

Here’s the setup:

- Hardware:
  - Calxeda: a single EnergyCore ECX-1000 @ 1.1 GHz, 4 GB of DDR3L-1066 memory, a 1Gb Ethernet port and a 250 GB SATA 7200 rpm HDD
  - Intel: Xeon E3-1240 @ 3.3 GHz, 16 GB of memory and a 1Gb Ethernet port; no information on the hard drive was provided
- Software:
  - Ubuntu Server 12.04
  - Apache Server 2.4.2
  - ApacheBench 2.3 (16k request size)

They measured power every 2 seconds and averaged the results. Power supply overhead and hard drive power consumption were excluded from the measurement, but the power consumption of the entire SoC and the DDR memory was included. For the Intel server, however, they could not measure power directly, so they used the published TDP values for the CPU (80 W) and the I/O chipset (6.7 W), along with an estimate for the DDR memory (16 W). I'm not sure whether those assumptions are realistic. [Update: Please read the comments for more information on this matter.]
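The two power figures come from different methods: averaged periodic samples for Calxeda, and a sum of datasheet and estimated values for Intel. Here is a minimal Python sketch of both calculations. The sample readings are hypothetical, and treating them as evenly spaced readings in watts is our assumption, not a description of Calxeda's actual setup.

```python
# Minimal sketch of the two power figures described above.
# Assumption: samples are power readings in watts taken every 2 seconds;
# the readings used in the demo are hypothetical, not Calxeda's data.

SAMPLE_INTERVAL_S = 2.0  # sampling period stated in the article

# Intel figure: not measured, summed from published TDPs plus a memory estimate.
INTEL_ESTIMATE_W = {
    "cpu_tdp": 80.0,
    "io_chipset_tdp": 6.7,
    "ddr_estimate": 16.0,
}


def average_power(samples_w: list[float]) -> float:
    """Mean power, valid when samples are evenly spaced in time."""
    if not samples_w:
        raise ValueError("need at least one sample")
    return sum(samples_w) / len(samples_w)


def energy_joules(samples_w: list[float], interval_s: float = SAMPLE_INTERVAL_S) -> float:
    """Approximate energy: each sample is held for one sampling interval."""
    return sum(samples_w) * interval_s


if __name__ == "__main__":
    hypothetical_samples = [5.1, 5.3, 5.2, 5.4]  # illustrative readings only
    print(f"average: {average_power(hypothetical_samples):.2f} W")
    print(f"energy over run: {energy_joules(hypothetical_samples):.1f} J")
    print(f"Intel estimate: {sum(INTEL_ESTIMATE_W.values()):.1f} W")  # 102.7 W
```

The summed Intel estimate comes to 102.7 W, in line with the roughly 102 W figure used in the comparison. Note that it is a sum of maximum ratings, not a measurement.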

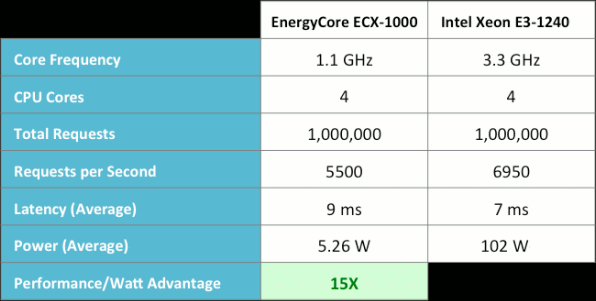

Here are the results:

For this particular benchmark, the results are really impressive: the Calxeda ARM server is only about 20% slower than the Intel Xeon server while consuming about 5 W instead of 102 W, i.e. nearly 20 times less power. The performance-per-watt ratio shows a 15x advantage for the Calxeda ARM server.
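Performance per watt is throughput divided by power, so the advantage equals the relative throughput multiplied by the power ratio. The Python sketch below uses only the rounded numbers from the paragraph above, and the function name is ours:

```python
def perf_per_watt_advantage(relative_perf: float, arm_watts: float, xeon_watts: float) -> float:
    """How many times more work per watt the ARM server does than the Xeon server.

    relative_perf is the ARM server's throughput as a fraction of the Xeon's
    (0.8 means "about 20% slower"); both power values are in watts.
    """
    arm_perf_per_watt = relative_perf / arm_watts
    xeon_perf_per_watt = 1.0 / xeon_watts
    return arm_perf_per_watt / xeon_perf_per_watt


if __name__ == "__main__":
    # Rounded inputs from the text: ~20% slower, ~5 W vs ~102 W.
    print(f"power ratio: {102 / 5:.1f}x")  # 20.4x
    print(f"perf/watt advantage: {perf_per_watt_advantage(0.8, 5, 102):.1f}x")  # 16.3x
```

With these rounded inputs the result is about 16x rather than 15x. The exact figure depends on the unrounded throughput and power values, which this sketch does not have.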

Calxeda also explains that the Sandy Bridge system saturated its single 1Gb NIC at less than 15% CPU utilization, which confirms the figure previously mentioned by Mitac.
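A single NIC caps the Xeon regardless of CPU speed. With 16k responses, a 1 Gb/s link can carry at most about 7,600 responses per second, and that is before any protocol overhead. Here is a back-of-the-envelope Python sketch. Interpreting "16k" as 16 KiB of payload is our assumption.

```python
LINK_BITS_PER_S = 1_000_000_000  # one 1 Gb Ethernet port
RESPONSE_BYTES = 16 * 1024       # assumption: "16k" means 16 KiB per response


def max_responses_per_second(link_bps: float, response_bytes: int) -> float:
    """Upper bound on responses/s if the link carried nothing but payload.

    Ethernet, IP, TCP and HTTP headers are ignored, so the real ceiling is lower.
    """
    return link_bps / 8 / response_bytes


if __name__ == "__main__":
    ceiling = max_responses_per_second(LINK_BITS_PER_S, RESPONSE_BYTES)
    print(f"payload-only ceiling: {ceiling:,.0f} responses/s")
```

Once a server reaches this ceiling, extra CPU headroom cannot raise throughput. That is consistent with the Xeon running at low CPU utilization.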

The company is still fine-tuning power consumption to improve performance per watt even further.

This kind of performance per watt could allow companies managing large datacenters such as Google and Facebook to save several million dollars per year in electricity bills.

One benchmark is usually not enough to get a clear understanding of a system's performance, so Calxeda also plans to publish benchmark results for the Cassandra database, the Hadoop framework, the Memcached caching system, and the Graph500 supercomputer benchmark in the future.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.


The comparison should probably be, IMHO, Intel Medfield vs. ARM of equivalent grunt…

http://www.phonearena.com/news/Intel-Medfield-chip-gets-benchmarked-beats-the-ARM-competition_id25154

@ mac me

The problem is that Medfield is not a server processor, so while its power consumption would be much lower than the Xeon's, I'm not sure how it would fare in terms of performance per watt.

This year, Intel plans to launch microservers based on a new Atom processor consuming 6 W (SoC only) and on an Ivy Bridge Xeon consuming 17 W, so ARM's current performance-per-watt advantage will seriously shrink in the coming years.

Source: page 13 of Intel's presentation at its investor meeting: http://www.cnx-software.com/pdf/Intel_2012/2012_Intel_Investor_Meeting_Bryant.pdf

You realize that TDP is useless as an approximation, don't you? TDP relates to the maximum theoretical power a processor dissipates as heat, which the cooling system is supposed to remove without the processor reaching a critical internal temperature.

@ enk

No, I didn't know. I just thought Calxeda would not use "bogus" numbers in a public post (although they did say their method was not ideal). Thanks for the information. Is it possible to estimate the actual power consumption of the CPU, I/O chipset and memory for the Xeon server?

Since the Xeon processor uses dynamic frequency scaling, which affects power consumption (it was designed to lower it), I believe it's rather difficult, if not impossible, to estimate the system's power consumption in a real test case without a direct measurement (I believe I've seen it done with a Xeon on AnandTech).

Just to share some thoughts about the testing:

1. As Enk mentioned, the TDP of a CPU is only a technical specification for maximum power dissipation. Many features in Xeon processors can affect power consumption: Turbo, package C-states, core C-states, SpeedStep, etc. The realistic way to get a server's power consumption is to measure the power consumption of the entire server.

2. According to test results, an E3-1200 running at a higher frequency than the E3-1240 consumes about 50-60 W (whole system) at 30% load. Of course, power consumption will vary with the workload. You may take a look at the SPECpower test figures on Spec.org.

3. I agree with your comment that a single 1GbE port can easily be saturated with medium-size packets while the Xeon processor remains at low utilization. That's why motherboards integrate multiple 1GbE ports (from 2 to 4).

4. Regarding the "Perf./Watt" calculation, the figure would drop from 15X to 7.5X if the power consumption of the E3-1240 at 30% utilization were halved to 51 W. Or, if the E3-1240 were able to saturate 4 ports, the perf/watt figure would drop from 15X to 3.75X (without adjusting for power consumption).
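Both adjustments in point 4 are simple rescalings of the 15x figure. Halving the Xeon's power doubles its perf/watt, and quadrupling its throughput (4 saturated ports) quadruples it. Here is a Python sketch that reproduces the two numbers. The function is hypothetical and, like the comment, assumes throughput scales linearly with the number of ports.

```python
def adjusted_advantage(advantage: float, xeon_power_scale: float = 1.0,
                       xeon_perf_scale: float = 1.0) -> float:
    """Rescale an ARM-vs-Xeon perf/watt advantage when the Xeon numbers change.

    The ARM side is held fixed. The Xeon's perf/watt is multiplied by
    xeon_perf_scale / xeon_power_scale, so the advantage shrinks by that factor.
    Assumes throughput scales linearly with the number of saturated ports.
    """
    return advantage * xeon_power_scale / xeon_perf_scale


if __name__ == "__main__":
    base = 15.0
    print(adjusted_advantage(base, xeon_power_scale=51 / 102))  # 7.5 (power halved)
    print(adjusted_advantage(base, xeon_perf_scale=4))          # 3.75 (4 ports saturated)
```

The two effects compound. If both applied at once, the advantage would fall to 15 × 0.5 / 4 = 1.875x.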

@ chowo

Thanks for the insightful comment.

I’ll just add a link and some details about your comment:

Here are the power consumption figures for an Intel Xeon E3-1220 server @ 3.1 GHz: http://www.spec.org/power_ssj2008/results/res2011q3/power_ssj2008-20110806-00392.html. (I could not find E3-1200 results.)

The average power consumption at 20% load is 34.8 W. I'm not sure whether this is the complete system power consumption, including the HDD, PSU dissipation, etc.

For full disclosure and credentials, chowo is apparently working at Intel.