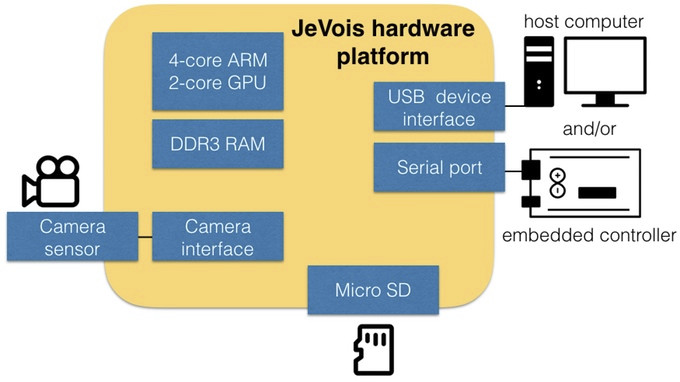

JeVois Neuromorphic Embedded Vision Toolkit, developed at iLab at the University of Southern California, is an open source software framework that captures images and processes them through machine vision algorithms. It is primarily designed to run on embedded camera hardware, but also supports Linux boards such as the Raspberry Pi. A compact Allwinner A33 camera has now been designed to run the software, for use in robotics and other projects requiring a lightweight and/or battery-powered camera with computer vision capabilities.

- SoC – Allwinner A33 quad-core ARM Cortex-A7 processor @ 1.35GHz with VFPv4 and NEON, and a dual-core Mali-400 GPU supporting OpenGL ES 2.0.

- System Memory – 256MB DDR3 SDRAM

- Storage – micro SD slot for firmware and data

- 1.3MP camera capable of video capture at:
  - SXGA (1280 x 1024) up to 15 fps (frames/second)
  - VGA (640 x 480) up to 30 fps
  - CIF (352 x 288) up to 60 fps
  - QVGA (320 x 240) up to 60 fps
  - QCIF (176 x 144) up to 120 fps
  - QQVGA (160 x 120) up to 60 fps
  - QQCIF (88 x 72) up to 120 fps

- USB – 1x mini USB port for power and for use as a UVC webcam

- Serial – 5V or 3.3V (selected through VCC-IO pin) micro serial port connector to communicate with Arduino or other MCU boards.

- Power – 5V (3.5 W) via the USB port; requires a USB 3.0 port or a Y-cable to two USB 2.0 ports

- Misc

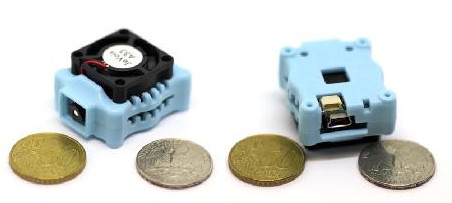

- Integrated cooling fan

- 1x two-color LED: Green: power is good. Orange: power is good and camera is streaming video frames.

- Size – 28 cc or 1.7 cubic inches (plastic case included, with 4 holes for secure mounting)
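
The USB 3.0 or Y-cable requirement follows from nominal USB port budgets: a USB 2.0 port is specified to supply at most 500 mA at 5 V (2.5 W), while a USB 3.0 port supplies up to 900 mA (4.5 W). The quick check below is a sketch that assumes a nominal 5 V supply and ignores cable and connector losses; it simply compares the stated 3.5 W draw against those budgets.

```python
"""Rough USB power-budget check for a 5 V, 3.5 W camera (nominal values only)."""

USB_VOLTAGE_V = 5.0

# Nominal maximum current a single port may supply to a configured device.
PORT_CURRENT_LIMIT_A = {
    "USB 2.0": 0.5,
    "USB 3.0": 0.9,
}


def required_current_a(power_w: float, voltage_v: float = USB_VOLTAGE_V) -> float:
    """Current drawn by a load of power_w watts at voltage_v volts (I = P / V)."""
    return power_w / voltage_v


def supply_budget_w(port: str, n_ports: int = 1) -> float:
    """Nominal power from n_ports identical ports (e.g. combined by a Y-cable)."""
    return PORT_CURRENT_LIMIT_A[port] * USB_VOLTAGE_V * n_ports


if __name__ == "__main__":
    camera_w = 3.5  # stated power draw of the camera
    print(f"Current needed: {required_current_a(camera_w):.2f} A")
    for port, n in [("USB 2.0", 1), ("USB 3.0", 1), ("USB 2.0", 2)]:
        budget = supply_budget_w(port, n)
        verdict = "OK" if budget >= camera_w else "insufficient"
        print(f"{n} x {port}: {budget:.1f} W available -> {verdict}")
```

At 3.5 W the camera needs about 0.7 A, more than a single USB 2.0 port's 0.5 A, which is why a Y-cable drawing from two USB 2.0 ports (1 A combined) is an alternative to one USB 3.0 port.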

The camera runs Linux with the camera drivers, the JeVois C++17 video capture, processing and streaming framework, OpenCV 3.1, and toolchains. You can connect it either to a host computer’s USB port to view the camera output (the captured image plus the processed image), or to an MCU board such as an Arduino via the serial interface, using machine vision to control robots, drones, or other devices. Three modes of operation are currently available:

- Demo/development mode – the camera outputs a demo display over USB that shows the results of its analysis, potentially along with simple data over the serial port.

- Text-only mode – the camera provides no USB output, only text strings, for example commands for a pan/tilt controller.

- Pre-processing mode – the smart camera outputs video intended for machine consumption, potentially to be processed further by a more powerful system.
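
To make the text-only mode more concrete, here is a minimal sketch of the receiving side (an MCU or host) turning one line of text from the camera into pan/tilt corrections. The message format (`<x> <y>`, the target's offset from the image centre normalized to [-1000, 1000]), the 0 to 180 degree servo range and the gain are all hypothetical assumptions for illustration, not the JeVois serial protocol; on an Arduino the same logic would be written in C++.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# HYPOTHETICAL message format, assumed for illustration (not the JeVois protocol):
#   "<x> <y>" = target offset from the image centre, each in [-1000, 1000].
COORD_RANGE = 1000


@dataclass
class PanTilt:
    """Servo angles in degrees; assumes 0..180 degree hobby servos, 90 = centred."""
    pan_deg: float = 90.0
    tilt_deg: float = 90.0


def parse_target(line: str) -> Optional[Tuple[int, int]]:
    """Return (x, y) from a line such as '120 -45', or None if it is malformed."""
    parts = line.split()
    if len(parts) != 2:
        return None
    try:
        x, y = int(parts[0]), int(parts[1])
    except ValueError:
        return None
    if abs(x) > COORD_RANGE or abs(y) > COORD_RANGE:
        return None
    return x, y


def _clamp(angle: float) -> float:
    """Keep a servo angle inside the assumed 0..180 degree range."""
    return max(0.0, min(180.0, angle))


def update(state: PanTilt, line: str, gain_deg: float = 10.0) -> PanTilt:
    """One proportional-control step toward the target; bad lines are ignored."""
    target = parse_target(line)
    if target is None:
        return state
    x, y = target
    # Sign conventions depend on servo mounting; image y is assumed to grow downward.
    pan = state.pan_deg + gain_deg * x / COORD_RANGE
    tilt = state.tilt_deg - gain_deg * y / COORD_RANGE
    return PanTilt(_clamp(pan), _clamp(tilt))


if __name__ == "__main__":
    state = PanTilt()
    for msg in ["500 0", "hello", "0 -1000"]:
        state = update(state, msg)
    print(state)  # PanTilt(pan_deg=95.0, tilt_deg=100.0)
```

Ignoring malformed lines rather than failing keeps the controller robust to stray output on the serial link, such as boot messages.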

The smart camera can detect motion, track faces and eyes, detect and decode ArUco markers and QR codes, detect and follow lines for autonomous cars, and more. Since the framework is open source, you’ll also be able to add your own algorithms and modify the firmware. Some documentation has already been posted on the project’s website. The best way to see what the camera and software can do is to watch the demo video below.
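
Motion detection, the simplest of these features, is commonly done by frame differencing: compare each pixel of the current grayscale frame with the previous frame, and report motion when enough pixels changed by more than a threshold. The sketch below illustrates that generic technique on plain Python lists with assumed thresholds (25 grey levels, 1% of pixels); it is not the JeVois motion module, and code running on the camera would more likely use OpenCV calls such as `cv::absdiff` and `cv::threshold`.

```python
from typing import List, Tuple

Frame = List[List[int]]  # grayscale frame, row-major, pixel values 0..255


def motion_mask(prev: Frame, curr: Frame, pixel_thresh: int = 25) -> List[List[bool]]:
    """True where the absolute intensity change between frames exceeds pixel_thresh."""
    return [
        [abs(c - p) > pixel_thresh for p, c in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev, curr)
    ]


def detect_motion(prev: Frame, curr: Frame, pixel_thresh: int = 25,
                  min_fraction: float = 0.01) -> Tuple[bool, float]:
    """Return (motion detected?, fraction of pixels that changed)."""
    mask = motion_mask(prev, curr, pixel_thresh)
    total = sum(len(row) for row in mask)
    changed = sum(sum(row) for row in mask)  # True counts as 1
    fraction = changed / total if total else 0.0
    return fraction >= min_fraction, fraction


if __name__ == "__main__":
    prev = [[10] * 8 for _ in range(8)]
    curr = [row[:] for row in prev]
    curr[2][3] = curr[2][4] = 200  # a small bright object appears
    print(detect_motion(prev, curr))  # (True, 0.03125)
```

The pixel threshold filters out sensor noise, while the minimum changed fraction stops a handful of noisy pixels from triggering a detection.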

The project launched on Kickstarter a few days ago with the goal of raising $50,000. A $45 “early backer” pledge should get you a JeVois camera with a micro serial connector with 15cm pigtail leads, while a $55 pledge adds an 8GB micro SD card preloaded with JeVois software, and a 24/28 AWG mini USB Y-cable. Shipping is free to the US, but adds $10 for Canada and $15 for the rest of the world. Delivery is planned for February and March 2017.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.

That fan is unsightly!

The Kickstarter page says “Road detection for autonomous driving” … wow! Could that be true? A competitor for Tesla software, and George Hotz’ former project?

http://ilab.usc.edu/bu/ shows info on the software

http://ilab.usc.edu/bu/movie/index.html says “In this third video, performance of the model at detecting traffic signs is demonstrated. ” but also “the model is only looking for salient objects with no knowledge that these should be traffic signs.” so NO recognition of those road signs.

@sander

It could probably be implemented. The KS video shows the camera reading a sign with company name and address.

@sander

More than competing with big names like Tesla, I see a potential market in upgrading older cars: even without self-driving abilities, it could give the driver more information.

Please see here for the autonomous navigation algorithm

http://ilab.usc.edu/publications/doc/Siagian_etal14jfr.pdf

We have not yet ported the whole framework described in that paper to JeVois, but we have:

– visual attention

– gist

– object recognition at attended locations

– road boundary detection and vanishing point computation

What is missing in JeVois compared to the above link is the probabilistic localization and mapping algorithms, the code related to odometry, and obstacle avoidance using a laser range finder. We assume that these would run somewhere other than on the JeVois camera.

I wonder if the project started with a recent kernel, i.e. 4.x, or with 3.4.x.

I suspect it would have been more efficient to use the A31S for this instead of the A33. The only difference between the chips is the GPU. The A31S’ GPU can run OpenCL and the A33’s GPU can’t. With OpenCL OpenCV can offload a lot of work onto the GPU and maybe this wouldn’t need a fan.

@tcmichals

Based on an update showing the boot process, it is 3.4.39. https://www.kickstarter.com/projects/1602548140/jevois-open-source-quad-core-smart-machine-vision/posts/1775575