Machine learning used to be executed in the cloud, then the inference part moved to the edge, and we've even seen microcontrollers able to do image recognition, such as the GAP8 RISC-V microcontroller.

But I’ve recently come across a white paper entitled “Resource-efficient Machine Learning in 2 KB RAM for the Internet of Things” that shows how it’s possible to perform such tasks with very few resources.

Here’s the abstract:

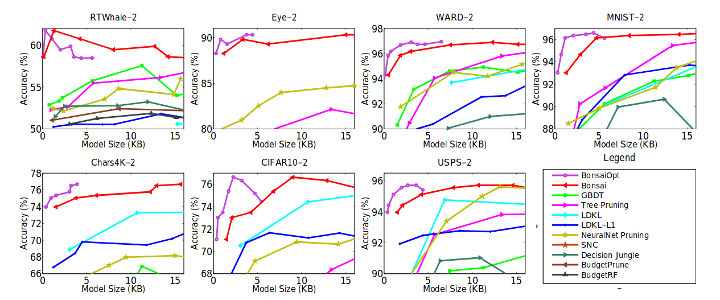

This paper develops a novel tree-based algorithm, called Bonsai, for efficient prediction on IoT devices – such as those based on the Arduino Uno board having an 8 bit ATmega328P microcontroller operating at 16 MHz with no native floating point support, 2 KB RAM and 32 KB read-only flash. Bonsai maintains prediction accuracy while minimizing model size and prediction costs by: (a) developing a tree model which learns a single, shallow, sparse tree with powerful nodes; (b) sparsely projecting all data into a low-dimensional space in which the tree is learnt; and (c) jointly learning all tree and projection parameters. Experimental results on multiple benchmark datasets demonstrate that Bonsai can make predictions in milliseconds even on slow microcontrollers, can fit in KB of memory, has lower battery consumption than all other algorithms while achieving prediction accuracies that can be as much as 30% higher than state-of-the-art methods for resource-efficient machine learning. Bonsai is also shown to generalize to other resource constrained settings beyond IoT by generating significantly better search results as compared to Bing’s L3 ranker when the model size is restricted to 300 bytes.
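To make points (a) to (c) a little more concrete, here is a minimal Python sketch of what a Bonsai-style prediction looks like, based on my reading of the paper rather than on the project's own code. The input is projected once into a low-dimensional space (z = Zx); every node on the root-to-leaf path then adds a score term W_k z multiplied element-wise by tanh(σ V_k z), and each internal node picks its left or right child from the sign of θ_k · z. The function and parameter names (`bonsai_predict`, `Z`, `theta`, `W`, `V`, `sigma`), the level-order node numbering and the exact branching rule are assumptions for illustration. Z is stored densely here for simplicity even though the paper learns a sparse projection, and floats are used only for readability, since the ATmega328P has no hardware floating point.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Dense matrix-vector product m @ v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def bonsai_predict(x: Vector, Z: Matrix, theta: List[Vector],
                   W: List[Matrix], V: List[Matrix], sigma: float) -> Vector:
    """Hypothetical sketch of Bonsai-style prediction (not the project's code).

    Z:     projection matrix, D_hat x D (dense here, sparse in the paper)
    theta: branching vectors of the internal nodes, each of length D_hat
    W, V:  per-node L x D_hat matrices for every node of a complete binary
           tree numbered level by level: root is 0, children of k are
           2k+1 (left) and 2k+2 (right)
    Returns a score vector of length L.
    """
    z = matvec(Z, x)              # project the input once
    num_labels = len(W[0])
    scores = [0.0] * num_labels
    num_internal = len(theta)     # nodes 0..num_internal-1 are internal
    k = 0
    while True:
        # Every node on the path contributes (W_k z) * tanh(sigma * V_k z).
        w = matvec(W[k], z)
        v = matvec(V[k], z)
        for i in range(num_labels):
            scores[i] += w[i] * math.tanh(sigma * v[i])
        if k >= num_internal:     # reached a leaf
            return scores
        # Assumed branching rule: go left when theta_k . z > 0.
        go_left = sum(t * zi for t, zi in zip(theta[k], z)) > 0
        k = 2 * k + 1 if go_left else 2 * k + 2


# Toy depth-1 tree with made-up weights: D = 2 inputs, D_hat = 1, L = 1.
Z = [[1.0, -1.0]]
theta = [[1.0]]
W = [[[1.0]], [[2.0]], [[3.0]]]
V = [[[1.0]], [[1.0]], [[1.0]]]
print(bonsai_predict([2.0, 0.5], Z, theta, W, V, sigma=1.0))
```

Only the nodes along a single path are evaluated, so prediction cost grows with the depth of the tree rather than with its number of nodes, which helps explain why one shallow tree with "powerful" nodes is cheap enough for an 8-bit board.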

The researchers actually tested it on both the Arduino Uno (2KB RAM available) and the BBC Micro:bit (16KB RAM available). Training is done on a laptop or in the cloud, then the trained model is loaded onto the development board, which performs inference by itself without external help. They compare it to other algorithms that take several megabytes instead of under 2 kilobytes for the same task, and that in some cases even end up with lower accuracy. Power consumption of the Bonsai algorithm is also significantly lower. You’ll find all the details, including the mathematical formula of the algorithm, in the white paper linked in the introduction. The source code can be found on GitHub, where we can find out the project was carried out by Microsoft Research India.
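The “under 2 kilobytes versus several megabytes” gap comes largely from the shape of the model: one shallow tree whose nodes all work in a small projected space. A quick way to see this is to count parameters. The Python sketch below does that for a Bonsai-style model as I understand it from the paper: a projection Z (only its non-zero entries counted), one θ vector per internal node, and one W and one V matrix per node. The helper names (`bonsai_param_count`, `model_bytes`) are hypothetical, the dimensions in the example are made up for illustration rather than taken from the paper's experiments, and the count ignores the index overhead of storing Z sparsely.

```python
def bonsai_param_count(D: int, D_hat: int, L: int, depth: int,
                       z_density: float) -> dict:
    """Hypothetical parameter count for a Bonsai-style tree of a given depth.

    D: input dimension, D_hat: projected dimension, L: number of labels,
    z_density: fraction of non-zero entries kept in the D_hat x D projection.
    The index overhead of storing Z sparsely is ignored.
    """
    internal = 2 ** depth - 1          # nodes that carry a branching vector theta
    nodes = 2 ** (depth + 1) - 1       # every node carries W and V (L x D_hat each)
    return {
        "Z": int(round(z_density * D_hat * D)),
        "theta": internal * D_hat,
        "W": nodes * L * D_hat,
        "V": nodes * L * D_hat,
    }


def model_bytes(counts: dict, bytes_per_param: int) -> int:
    """Total storage if every parameter uses bytes_per_param bytes."""
    return sum(counts.values()) * bytes_per_param


# Made-up example dimensions, not from the paper.
counts = bonsai_param_count(D=100, D_hat=10, L=2, depth=2, z_density=0.2)
print(counts)                  # per-matrix parameter counts
print(model_bytes(counts, 4))  # 32-bit floats
print(model_bytes(counts, 2))  # a hypothetical 2-byte encoding
```

With these illustrative numbers the model has 510 parameters, about 2 KB as 32-bit floats or 1 KB at 2 bytes per value. Growing the input dimension D only enlarges the sparse Z term, while each extra level of depth roughly doubles the per-node terms. On the Uno, read-only parameters like these could be kept in the 32 KB flash, leaving the 2 KB of RAM for intermediate values such as the projected vector and the scores.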

Via AlessandroDevs and Mininodes

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.


Microcontrollers and other low-power devices are the only hope for progress in programming technologies and the development of effective algorithms. On “big” PCs I see a gradual decline in the quality of source code, as most people only care about their deadlines, and why bother if you can just request more RAM?

People coded on a 1K ZX81, and that had graphics output in black and white.

Hardware is cheaper, but small coding is reinventing the wheel!

https://en.m.wikipedia.org/wiki/1K_ZX_Chess

I learned programming on a Soviet rip-off of a TI calculator, and when I moved on to a Yamaha MSX with a huge 64K of RAM and a powerful Z80 CPU, it felt like an F1 car after roller skates.

It’s the usual “silicon is cheaper than brains” problem. But this choice doesn’t scale in clouds, where the silicon cost quickly has to be multiplied by hundreds to thousands just due to poor choices of algorithms or frameworks. Arduino, ATtiny, PIC, ESP8266/ESP32 all force the developer to make choices and use his brain. They are fantastic for education because it’s exactly where you want these people to spend time thinking instead of trying to be quick at producing shit. The one who understands the impact of a tree vs a linear lookup on an Arduino will not make the mistake…

Gradual? Did you forget that Microsoft was a “thing”? The best product rarely wins, that’s why marketers get paid so much.

I thought hardware finally broke past software’s ability to thrash it a few years ago, but then HTML5 ‘apps’, node.js and other junk became the new hotness.

Good software is expensive to make, in part because the same stuff keeps getting remade in proprietary forms and patents, and because CEOs, shareholders and other non-productive leeches on society keep skimming so much of the cream off the top at the expense of workers and society as a whole.

I do frontend development for work, but I totally have to agree here. It was a bad idea to use JavaScript for the backend or for native apps (desktop & mobile). One of the shittiest inventions I have ever seen. And there are so many people who still think it is a good idea to use it in these areas. People should use the right tool for their problem. But instead, they change the problem to fit their tool. So: JavaScript is for the web frontend. That’s it. If you want to create Android apps: learn Java. If you want to create…