Intel has just announced the third generation of Movidius Vision Processing Units (VPUs), the Myriad X VPU, which the company claims is the world’s first SoC shipping with a dedicated Neural Compute Engine for accelerating deep learning inference at the edge, giving devices the ability to see, understand, and react to their environments in real time.

Movidius Myriad X VPU key features:

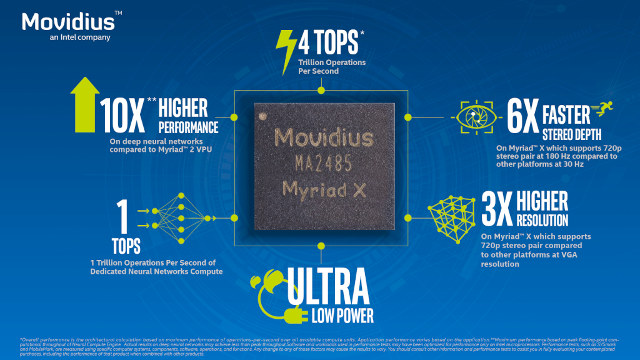

- Neural Compute Engine – Dedicated on-chip accelerator for deep neural networks delivering over 1 trillion operations per second of DNN inferencing performance (based on peak floating-point computational throughput).

- 16x programmable 128-bit VLIW Vector Processors (SHAVE cores) optimized for computer vision workloads.

- 16x configurable MIPI Lanes – Connect up to 8 HD resolution RGB cameras for up to 700 million pixels per second of image signal processing throughput.

- 20x vision hardware accelerators to perform tasks such as optical flow and stereo depth.

- On-chip Memory – 2.5 MB homogeneous memory with up to 450 GB per second of internal bandwidth

- Interfaces – PCIe Gen 3, USB 3.1

- Packages

    - MA2085: No memory in-package; interfaces to external memory

    - MA2485: 4 Gbit LPDDR4 memory in-package
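As a rough sanity check on the figures above, the sketch below splits the quoted 700 million pixels per second evenly across 8 cameras and converts the MA2485's 4 Gbit of in-package memory into mebibytes. The even split, the two candidate "HD" frame sizes, and the function names are assumptions made for illustration; the announcement does not say how the throughput is shared or which HD resolution is meant.

```python
# Back-of-the-envelope figures derived from the spec list above.
# Assumption: ISP throughput is split evenly across all cameras.

ISP_PIXELS_PER_SECOND = 700_000_000  # "up to 700 million pixels per second"
MAX_CAMERAS = 8                      # "up to 8 HD resolution RGB cameras"

# Assumed interpretations of "HD resolution" (not specified in the source).
FRAME_SIZES = {"720p": (1280, 720), "1080p": (1920, 1080)}


def max_fps_per_camera(width, height, cameras=MAX_CAMERAS,
                       budget=ISP_PIXELS_PER_SECOND):
    """Upper bound on frames per second per camera under an even split."""
    return budget / cameras / (width * height)


def gbit_to_mib(gbit):
    """Convert gigabits (2**30 bits each) to mebibytes."""
    return gbit * 2**30 / 8 / 2**20


if __name__ == "__main__":
    for name, (w, h) in FRAME_SIZES.items():
        print(f"{name}: {max_fps_per_camera(w, h):.1f} fps per camera")
    print(f"MA2485 in-package memory: {gbit_to_mib(4):.0f} MiB")
```

Under these assumptions, the budget works out to roughly 95 fps per camera at 720p or about 42 fps at 1080p, and the MA2485's 4 Gbit corresponds to 512 MiB.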

The hardware accelerators offload work from the Neural Compute Engine. For example, the stereo depth accelerator can simultaneously process 6 camera inputs (3 stereo pairs), each running at 720p resolution and a 60 Hz frame rate. The slide below also indicates that Myriad X delivers 10x higher DNN performance than the Myriad 2 VPU found in the Movidius Neural Compute Stick.
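To put the stereo depth example in numbers, this short sketch computes the aggregate pixel rate of 6 inputs at 720p and 60 Hz. It assumes that 720p means 1280x720 pixels per frame, and the function name is made up for illustration.

```python
# Aggregate pixel rate for the stereo depth example in the text.
# Assumption: "720p" means 1280x720 pixels per frame.

def aggregate_pixel_rate(cameras, width, height, fps):
    """Total pixels per second across all camera inputs."""
    return cameras * width * height * fps


if __name__ == "__main__":
    cameras = 6  # 3 stereo pairs
    rate = aggregate_pixel_rate(cameras, width=1280, height=720, fps=60)
    print(f"{rate / 1e6:.1f} million pixels per second "
          f"across {cameras // 2} stereo pairs")
```

That comes to about 332 million pixels per second handled by the stereo depth block in this scenario.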

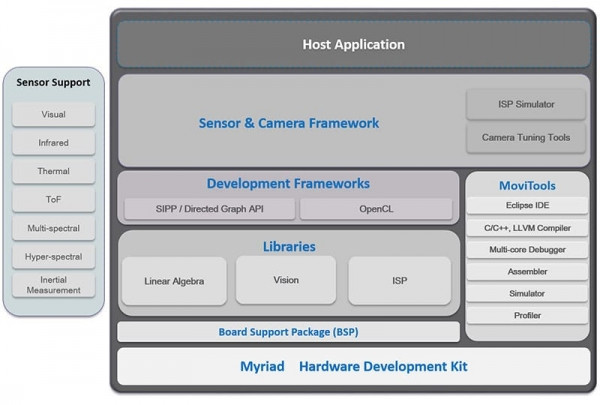

The VPU ships with an SDK that contains software development frameworks, tools, drivers, and libraries for implementing artificial intelligence applications. These include a specialized “FLIC framework with a plug-in approach to developing application pipelines including image processing, computer vision, and deep learning”, and a neural network compiler to port neural networks from Caffe, TensorFlow, and other frameworks.

More details can be found on Movidius’ Myriad X product page.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.
