Many companies are now working on self-driving cars, and they are getting there step by step through six levels of autonomous driving, defined as follows based on information from Wikipedia:

- Level 0 – Automated system issues warnings but has no vehicle control.

- Level 1 (“hands on”) – The driver and the automated system share control over the vehicle. Examples include Adaptive Cruise Control (ACC), Parking Assistance, and Lane Keeping Assistance (LKA) Type II.

- Level 2 (“hands off”) – The automated system takes full control of the vehicle (accelerating, braking, and steering), but the driver is still expected to monitor the driving and be prepared to intervene immediately at any time. In practice, you’ll still keep your hands on the steering wheel, just in case…

- Level 3 (“eyes off”) – The driver can safely turn their attention away from the driving tasks, e.g. the driver can text or watch a movie. However, the system may ask the driver to take over within a limited time specified by the manufacturer, so no sleeping while driving 🙂. The Audi A8 luxury sedan, with its Traffic Jam Pilot feature, was the first commercial car claimed to be capable of level 3 self-driving.

- Level 4 (“mind off”) – Similar to level 3, but driver attention is never required. You could sleep while the car is driving, or even send the car somewhere with nobody in the driver’s seat. The limitation at this level is that self-driving mode is restricted to certain areas or special circumstances. Outside of these areas or circumstances, the vehicle must be able to park itself safely if the driver does not retake control.

- Level 5 (“steering wheel optional”) – A fully autonomous car that requires no human intervention and has no other limitations.

So the goal is obviously to reach level 5, which would enable robotaxis, or cars that can safely drive you home whatever your blood alcohol or THC levels. However, this requires lots of computing power, with redundancy for safety, and current autonomous vehicle prototypes have a trunk full of computing equipment.

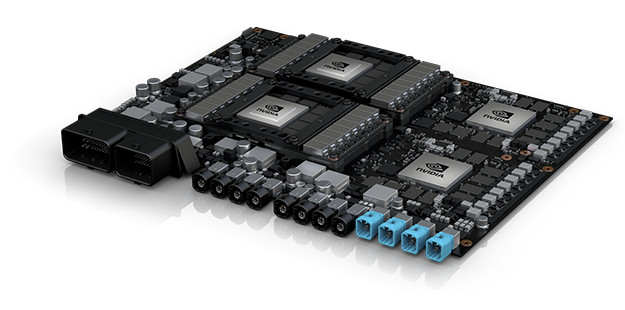

NVIDIA has condensed the AI processing power required for level 5 autonomous driving into the DRIVE PX Pegasus AI computer, which is roughly the size of a license plate and capable of handling inputs from high-resolution 360-degree surround cameras and lidars, localizing the vehicle with centimeter-level accuracy, tracking vehicles and people around the car, and planning a safe and comfortable path to the destination.

The computer comes with four AI processors said to deliver 320 TOPS (trillion operations per second) of computing power, ten times more than NVIDIA DRIVE PX 2, or roughly the performance of a 100-server data center according to Jensen Huang, NVIDIA founder and CEO. Specifically, the board combines two NVIDIA Xavier SoCs and two “next generation” GPUs with hardware-accelerated deep learning and computer vision algorithms. Pegasus is designed for ASIL D certification with automotive inputs/outputs, including CAN bus, FlexRay, 16 dedicated high-speed sensor inputs for camera, radar, lidar and ultrasonics, plus multiple 10 Gbit Ethernet connections.

Machine learning works in two steps: training on the most powerful hardware you can find, and inferencing on cheaper hardware. For autonomous driving, data scientists train their deep neural networks on the NVIDIA DGX-1 AI supercomputer, and harnessing 8 NVIDIA DGX systems makes it possible, for example, to simulate driving 300,000 miles in five hours. Once training is completed, the models can be updated over the air to NVIDIA DRIVE PX platforms, where inferencing takes place. The process can be repeated regularly to keep the system up to date.
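To put the simulation figure in perspective, the short sketch below simply divides the quoted numbers (300,000 miles, five hours, 8 DGX systems). It assumes the workload is split evenly across the eight systems, which the announcement does not state, and the 60 mph average road speed used for the real-time comparison is a hypothetical value chosen for illustration only.

```python
# Figures quoted in the announcement
SIMULATED_MILES = 300_000
WALL_CLOCK_HOURS = 5
DGX_SYSTEMS = 8

# Hypothetical average road speed, NOT from the announcement
ASSUMED_AVG_SPEED_MPH = 60


def aggregate_rate(miles: float, hours: float) -> float:
    """Simulated miles covered per hour of wall-clock time, all systems combined."""
    return miles / hours


def per_system_rate(total_rate: float, systems: int) -> float:
    """Per-system rate, assuming (hypothetically) an even split of the workload."""
    return total_rate / systems


aggregate = aggregate_rate(SIMULATED_MILES, WALL_CLOCK_HOURS)
per_system = per_system_rate(aggregate, DGX_SYSTEMS)
speedup = per_system / ASSUMED_AVG_SPEED_MPH  # times faster than real-time driving

print(f"Aggregate: {aggregate:,.0f} simulated miles per hour")
print(f"Per DGX system: {per_system:,.0f} simulated miles per hour")
print(f"Speed-up vs. a car at {ASSUMED_AVG_SPEED_MPH} mph: {speedup:.0f}x")
```

Under these assumptions, the cluster covers 60,000 simulated miles per hour, or 7,500 per system, about 125 times faster than a single car driving at a steady 60 mph.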

NVIDIA DRIVE PX Pegasus will be available to NVIDIA automotive partners in H2 2018, together with the NVIDIA DRIVE IX (Intelligent Experience) SDK, meaning level 5 autonomous cars, taxis and trucks based on the solution could become available within a few years.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.