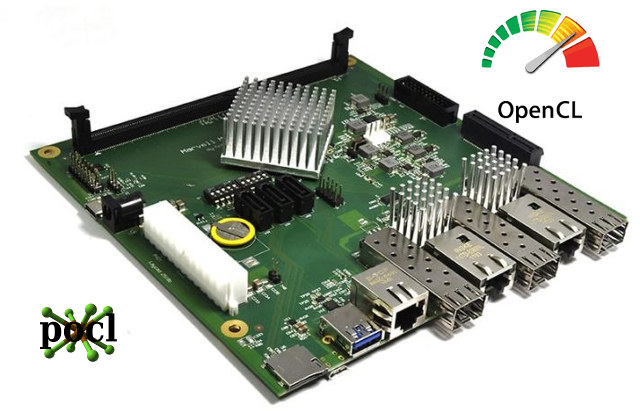

This is a guest post by blu about his experience with OpenCL on a MacchiatoBin board with a quad-core Cortex-A72 processor and on an Intel-based MacBook. He previously contributed several technical articles such as How ARM Nerfed NEON Permute Instructions in ARMv8 or OpenGL ES development on Ubuntu Touch.

Qualcomm launched their long-awaited server ARM chip the other day, and we started getting the first benchmarks. Incidentally, I too managed to get some OpenCL ray-tracing code running on an ARM Cortex-A72 machine that same day (thanks to pocl, an LLVM-based open-source multi-platform OCL implementation), so my benchmarking curiosity got the better of me.

The code in question is a (half-finished) OCL port of a graphics demo from 2014. Some remarks on what it does:

- For each frame, a single thread builds a sparse voxel octree from a dynamic voxel scene.

- The octree, along with the current camera settings, is passed to an OCL kernel via double buffering.

- The kernel computes a screen-space map of object IDs from primary-ray-hit voxels, utilizing all compute units of a user-specified device.

- In the headless mode used in the test, the app then discards the frame.

- The test continues for a user-specified number of frames and reports the average frames per second (FPS) upon termination.
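To make the shape of that per-frame loop concrete, here is a minimal Python sketch. It is not the demo's code: `run_headless`, `build_octree` and `trace_kernel` are hypothetical stand-ins, and the two stages run back to back here, whereas real double buffering would let the host build the next octree while the device is still tracing the previous one.

```python
import time


def run_headless(num_frames, build_octree, trace_kernel):
    """Run the per-frame loop and return the average FPS.

    build_octree(frame) -> scene data for that frame (host side, single thread)
    trace_kernel(scene) -> map of object IDs for that scene
    Both callables are hypothetical stand-ins, not the demo's real functions.
    """
    buffers = [None, None]            # two slots, as in double buffering
    start = time.perf_counter()
    for frame in range(num_frames):
        slot = frame % 2              # alternate between the two slots
        buffers[slot] = build_octree(frame)
        id_map = trace_kernel(buffers[slot])
        del id_map                    # headless mode: the frame is discarded
    elapsed = time.perf_counter() - start
    return num_frames / elapsed if elapsed > 0 else float("inf")


if __name__ == "__main__":
    fps = run_headless(
        100,
        build_octree=lambda frame: [frame] * 1024,
        trace_kernel=lambda scene: [v & 0xFF for v in scene],
    )
    print(f"average FPS: {fps:.2f}")
```

The only number the benchmark reports is the one returned at the end: frames rendered divided by wall-clock time.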

Now, one of the baselines I wanted to compare the ARM machine against was a MacBook with Penryn (Intel Core 2 Duo Processor P8600), as the latter had exhibited very similar IPC characteristics to the Cortex-A72 in previous (non-OCL) tests, and also both machines had very similar FLOPS paper specs (and our OCL test is particularly FP-heavy):

- 2x Penryn @ 2400MHz: 4xfp32 mul + 4xfp32 add per clock = 38.4GFLOPS total

- 4x Cortex-A72 @ 1300MHz: 4xfp32 mul-add per clock = 41.6GFLOPS total
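For anyone who wants to re-derive those paper numbers, a small Python sketch follows. The function name is mine, and counting a mul-add as two flops is my reading of the figures above (it is what makes 41.6 come out).

```python
def peak_gflops(cores, clock_mhz, flops_per_clock):
    """Theoretical peak in GFLOPS: cores x clock x flops per clock per core."""
    return cores * clock_mhz * flops_per_clock / 1000.0


# 4x fp32 mul + 4x fp32 add per clock -> 8 flops per clock per core
penryn = peak_gflops(cores=2, clock_mhz=2400, flops_per_clock=8)
# 4x fp32 mul-add per clock, each counted as 2 flops -> 8 flops per clock per core
cortex_a72 = peak_gflops(cores=4, clock_mhz=1300, flops_per_clock=8)

print(f"Penryn: {penryn:.1f} GFLOPS, Cortex-A72: {cortex_a72:.1f} GFLOPS")
```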

Beyond paper specs, on a SGEMM test the two machines showed the following performance for cached data:

- Penryn: 4.86 flop/clock/core, 23.33GFLOPS total

- Cortex-A72: 6.52 flop/clock/core, 33.90GFLOPS total
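The totals follow from the per-core figures in the same way as the paper specs, which also gives a rough idea of how close each machine gets to its theoretical peak. A sketch, assuming the 8 flops per clock per core peak implied by the paper specs (function names are mine):

```python
PEAK_FLOPS_PER_CLOCK = 8  # per core, assumed from the paper specs


def sustained_gflops(flop_per_clock_per_core, cores, clock_mhz):
    """Measured throughput in GFLOPS from a per-core, per-clock figure."""
    return flop_per_clock_per_core * cores * clock_mhz / 1000.0


def efficiency(flop_per_clock_per_core, peak=PEAK_FLOPS_PER_CLOCK):
    """Fraction of the theoretical per-clock peak actually reached."""
    return flop_per_clock_per_core / peak


for name, fpc, cores, mhz in [("Penryn", 4.86, 2, 2400),
                              ("Cortex-A72", 6.52, 4, 1300)]:
    print(f"{name}: {sustained_gflops(fpc, cores, mhz):.2f} GFLOPS, "
          f"{efficiency(fpc) * 100:.0f}% of peak")
```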

And finally RAM bandwidth (again, paper specs):

- Penryn: 8.53GB/s (DDR3 @ 1066MT/s)

- Cortex-A72: 12.8GB/s (DDR4 @ 1600MT/s)
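Both bandwidth figures are consistent with one 64-bit (8-byte) path moving data at the quoted transfer rate. That width and the single-channel default are my assumptions in the sketch below, inferred from the numbers rather than stated anywhere above.

```python
def bandwidth_gb_s(mega_transfers_per_s, channels=1, bytes_per_transfer=8):
    """Paper bandwidth in GB/s; the 8-byte width and channel count are assumptions."""
    return mega_transfers_per_s * bytes_per_transfer * channels / 1000.0


print(f"Penryn:     {bandwidth_gb_s(1066):.2f} GB/s")
print(f"Cortex-A72: {bandwidth_gb_s(1600):.2f} GB/s")
```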

On the ray-tracing OCL test, though, things turned out interesting (MacBook running Apple’s own OCL stack, which, to the best of my knowledge, is also LLVM-based):

- Penryn average FPS: 2.31

- Cortex-A72 average FPS: 7.61

So while on the SGEMM test the ARM was ~1.5x faster than Penryn for cached data, on the ray-tracing test, which is much more complex code than SGEMM, the ARM speedup turned out to be ~3x? Remember, we are talking about two μarchs that perform quite closely by general-purpose-code IPC. Could something be wrong with Apple’s OCL stack? Let’s try pocl (exact same version of pocl and LLVM as on ARM):

- Penryn average FPS: 11.58

OK, that’s much more reasonable. This time Penryn holds a speed advantage of 1.5x. Now, while Penryn is a fairly mature μarch that reached its toolchain-support peak long ago, could we expect improvements from LLVM’s (and pocl’s) support for the Cortex family? Perhaps. In the case of our little test I could even finish the AArch64 port of the non-OCL version of this code (originally x86-64 with SSE/AVX), but hey, OCL saved me the initial effort for satisfying my curiosity!
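The ratios quoted in the last few paragraphs are simple quotients of the measured numbers. A quick Python check, with variable names of my own choosing:

```python
def speedup(faster, slower):
    """How many times faster one result is than another."""
    return faster / slower


sgemm_arm_vs_penryn = speedup(33.90, 23.33)    # SGEMM, GFLOPS
rt_arm_vs_penryn_apple = speedup(7.61, 2.31)   # ray tracing, Apple OCL on Penryn
rt_penryn_pocl_vs_arm = speedup(11.58, 7.61)   # ray tracing, pocl on both
penryn_pocl_vs_apple = speedup(11.58, 2.31)    # same CPU, different OCL stack

for label, value in [("SGEMM, ARM over Penryn", sgemm_arm_vs_penryn),
                     ("RT, ARM over Penryn (Apple OCL)", rt_arm_vs_penryn_apple),
                     ("RT, Penryn (pocl) over ARM", rt_penryn_pocl_vs_arm),
                     ("Penryn, pocl over Apple OCL", penryn_pocl_vs_apple)]:
    print(f"{label}: {value:.2f}x")
```

The last ratio is the one that stands out: swapping the OCL stack on the same CPU changes the result by roughly a factor of five.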

[Update: See comment for new ARM Cortex A72 and A53 results after fixing some codegen issues]

What is more interesting, though, is that assuming a Qualcomm Falkor core is at least as performant as a Cortex-A72 core in both gen-purpose and NEON IPC (not a baseless supposition), and taking into account that the top specced Centriq 2400 has 12x the cores and 10x the RAM bandwidth of our ARM machine, we could speculate about Centriq 2400’s performance on this OCL test when using the same OCL stack.

Hypothetical Qualcomm Centriq 2400 server: Centriq 2400 48x Falkor @ 2200MHz-2600MHz, 6x DDR4 @ 2667MT/s (128GB/s)
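The 12x and 10x ratios, and the bandwidth available per core, can be checked from the paper specs alone. A small Python sketch (the dictionary layout and function name are mine):

```python
armada_8040 = {"cores": 4, "bandwidth_gb_s": 12.8}     # MacchiatoBin
centriq_2400 = {"cores": 48, "bandwidth_gb_s": 128.0}  # top-specced part

core_ratio = centriq_2400["cores"] / armada_8040["cores"]
bandwidth_ratio = centriq_2400["bandwidth_gb_s"] / armada_8040["bandwidth_gb_s"]


def bandwidth_per_core(machine):
    """Paper RAM bandwidth divided evenly across all cores, in GB/s."""
    return machine["bandwidth_gb_s"] / machine["cores"]


print(f"cores: {core_ratio:.0f}x, bandwidth: {bandwidth_ratio:.0f}x")
print(f"per core: {bandwidth_per_core(armada_8040):.2f} GB/s vs "
      f"{bandwidth_per_core(centriq_2400):.2f} GB/s")
```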

Assuming linear scaling from the measured ARMADA 8040 performance; in practice the single-threaded part of the test will impede linear scaling, and so could the slightly lower per-core RAM bandwidth paper specs.

$ echo "scale=4; 48 * 2.2 / (4 * 1.3) * 7.61" | bc

154.5408

$ echo "scale=4; 48 * 2.6 / (4 * 1.3) * 7.61" | bc

182.6400
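The same projection can be written as a small Python function that also shows how a serial portion of each frame (the single-threaded octree build) would eat into the linear estimate. This is only a sketch: `project_fps` is a name of my own, and `serial_fraction` is a hypothetical knob, since the actual serial share of the frame time was not measured here. The serial part is assumed to scale with clock only, the parallel part with clock and core count.

```python
def project_fps(measured_fps, base_cores, base_ghz, cores, ghz,
                serial_fraction=0.0):
    """Project FPS onto a machine with a different core count and clock.

    serial_fraction is the (hypothetical) share of baseline frame time
    spent in single-threaded code; 0.0 reproduces pure linear scaling.
    """
    clock_ratio = ghz / base_ghz
    core_ratio = cores / base_cores
    # Frame time relative to the baseline machine.
    relative_time = (serial_fraction / clock_ratio
                     + (1.0 - serial_fraction) / (clock_ratio * core_ratio))
    return measured_fps / relative_time


for ghz in (2.2, 2.6):
    linear = project_fps(7.61, 4, 1.3, 48, ghz)
    damped = project_fps(7.61, 4, 1.3, 48, ghz, serial_fraction=0.05)
    print(f"{ghz} GHz: linear {linear:.2f} FPS, with 5% serial {damped:.2f} FPS")
```

With `serial_fraction=0.0` the results agree with the `bc` figures to within rounding; `bc` truncates the intermediate division to four decimals, so the last digits can differ slightly.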

Of course, CPU-based solutions are not the best candidate for this OCL test — a decent GPU would obliterate even a 2S Xeon server here. But the goal of this entire test was to get a first-encounter estimate of the Cortex-A72 for FP-heavy non-matrix-multiplication-trivial scenarios, and things can go only up from here. Raw data for POCL tests on MacchiatoBin and MacBook is available here.



Re-reading the above, I realize a rather ambiguous statement needs clarification. When I said that toolchain support (compilers, OCL stacks et al.) for the Cortex cores will perhaps improve, I meant ‘if interest is present and efforts are made’ (in the case of POCL, community interest and effort), as I can say with confidence that there is room for improvement in the present codegen pipeline underlying this test on arm64. Unfortunately, my own LLVM experience is inadequate to solve that (but yes, I can and will file the necessary tickets with POCL). In…

Small update after identifying and eliminating some LLVM/POCL aarch64 codegen issues (shoutout to Michal Babej from the POCL team for the helpful hints and directions):

* Penryn average FPS: 11.58

* Cortex-A72 average FPS: 22.54

My new ARM workbook — Teres-A64 (4x cortex-a53 @ 1152MHz-1104MHz):

* Cortex-A53 average FPS: 14.21

And finally, the hypothetical Centriq 2400 linear scaling from the MacchiatoBin:

$ echo "scale=2; 48 * 2.2 / (4 * 1.3) * 22.54" | bc

457.56

$ echo "scale=2; 48 * 2.6 / (4 * 1.3) * 22.54" | bc

540.96
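One way to put the three CPUs on a common footing is FPS per core per GHz. A rough Python sketch follows; the metric and names are mine, the Cortex-A53 clock is assumed to be the upper end of the quoted range (1152MHz), and the Penryn figure is the older pocl result, so this is an indication rather than a like-for-like comparison.

```python
results = {
    # name: (average FPS, cores, clock in GHz)
    "Penryn": (11.58, 2, 2.4),
    "Cortex-A72": (22.54, 4, 1.3),
    "Cortex-A53": (14.21, 4, 1.152),  # assumption: upper clock of the range
}


def fps_per_core_ghz(fps, cores, ghz):
    """Average FPS normalized by core count and clock frequency."""
    return fps / (cores * ghz)


for name, (fps, cores, ghz) in results.items():
    print(f"{name}: {fps_per_core_ghz(fps, cores, ghz):.2f} FPS per core-GHz")
```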

@blu

IIRC you got a performance increase by a factor of 5 (2.31 vs. 11.58) on the Core 2 Duo just by exchanging the toolchain? And now, after eliminating some ‘codegen issues’, performance on the A72 increased by a factor of 3 (7.61 vs. 22.54)? Does this affect the Intel platform too, since you used the same number, 11.58, in both the article and the update comment?

@tkaiser

Yep, the stock Apple toolchain turned out, well, crap for this task, so I switched to POCL on macOS as well. I haven’t updated the Penryn POCL results, as the code there did not have the apparent codegen issues aarch64 had. I will update to the latest POCL/LLVM on Penryn as well, just for POCL parity, but I’m away from home ATM.

For reference, here’s the POCL codegen ticket: https://github.com/pocl/pocl/issues/563 — new ARM results are from LLVM5 + proposed fmin/fmax patch.

I’m still hoping we’ll see an affordable board with an SoC that has at least dual A7x plus quad A5x cores and that could be fitted in a Teres chassis or Raspberry Pi-Top case.

Or someone correct me if such a thing exists already!

I’ve not seen anything from the MNT Reform project, though I did sign up to their mailing list. No recent updates on the web page either.

ah, there’s the HiKey 960, which I discovered having stumbled on https://www.board-db.org/

it’s US$240 on Amazon.com, about double what I’d hoped, especially given only 3GB of RAM, but then it’s a “developers reference board” not for hobbyists.

and the rock960 – https://www.cnx-software.com/2017/09/29/rock960-board-is-a-96boards-compliant-board-powered-by-rockchip-rk3399-soc/

price unknown but expected to be US$140 for the 4GB RAM/ 32GB eMMC.

BTW, the formerly-private aarch64 version of the OCL benchmark used in the post is public now; it can be found in the original repo under prob_7 and built via build_headless.sh (might need CC var tweaking as compiler is hardcoded in the script).