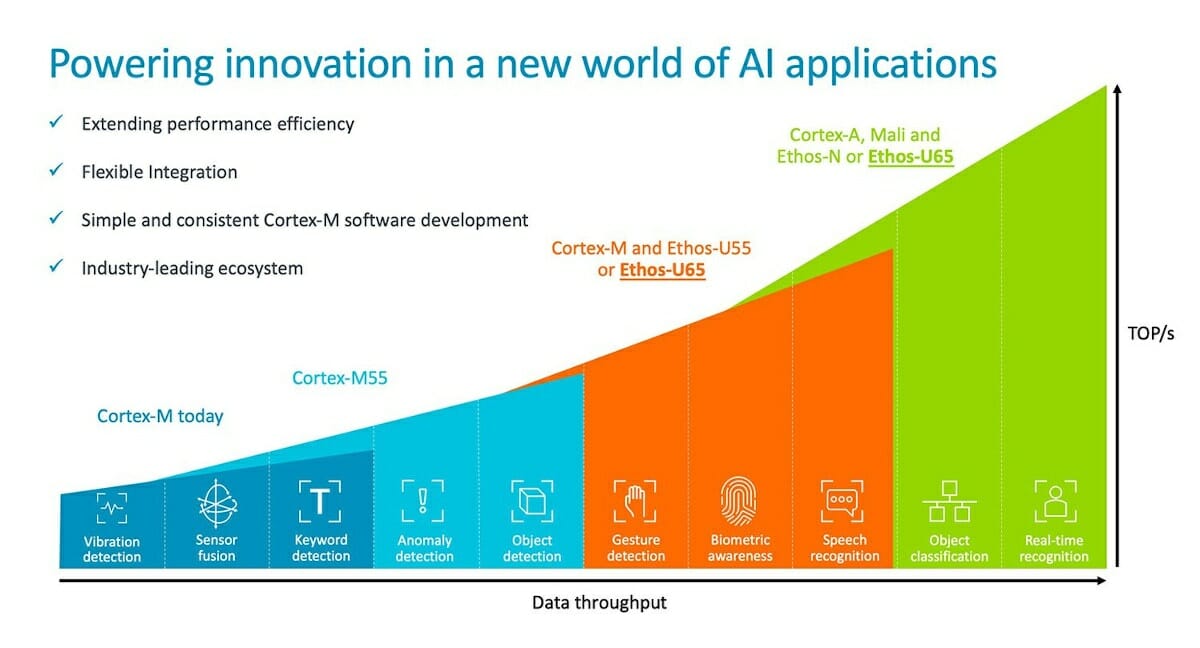

Arm introduced its very first microNPU (Micro Neural Processing Unit) for microcontrollers at the beginning of the year: the Arm Ethos-U55, designed for Cortex-M microcontrollers such as the Cortex-M55. It delivers 64 to 512 GOPS of AI inference performance, or up to a 480x increase in ML performance compared to inference on a Cortex-M CPU alone.

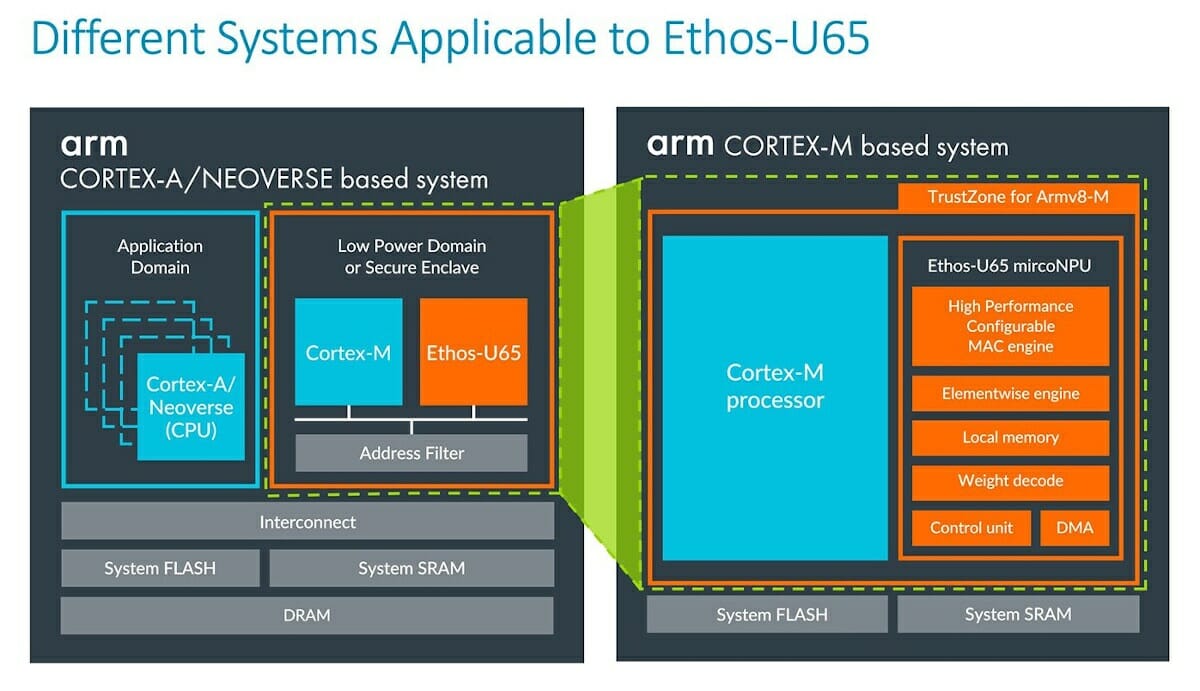

The company has now unveiled an update, the Arm Ethos-U65 microNPU, which maintains the efficiency of the Ethos-U55 but brings neural network acceleration to higher-performance embedded devices powered by Arm Cortex-A and Arm Neoverse SoCs.

Arm says the development workflow remains the same, relying on the TensorFlow Lite Micro (TFLmicro) runtime, which runs on a Cortex-M core and handles operations not directly supported by the Ethos-U65. This holds even in Cortex-A/Neoverse-based systems, where the microNPU is also paired with a Cortex-M microcontroller core.

Ethos-U65 supports many popular models, including MobileNet v1/v2, ResNet-50, and Inception v2/v3, while unsupported models can run on the CPU cores using the Arm Compute Library (on Cortex-A) or CMSIS-NN (on Cortex-M).

Systems-on-Chip based on Ethos-U65 will probably only start to show up sometime in H2 2021: the Cortex-M55 and Ethos-U55 were announced last February, and, AFAIK, no silicon vendor has announced a Cortex-M55 microcontroller so far. More information may be found on the product page and on Arm's community blog.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.
