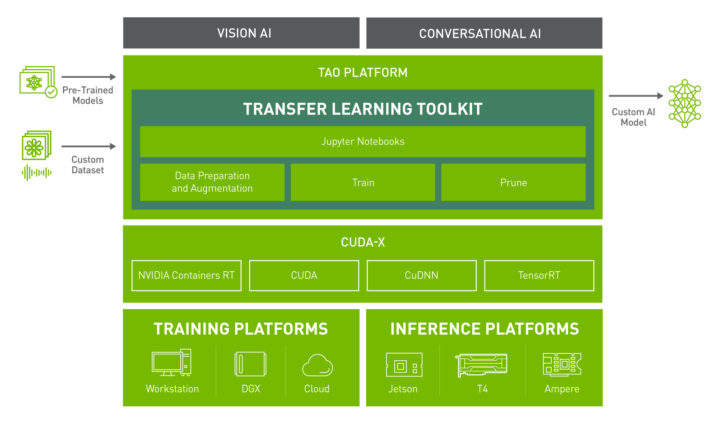

NVIDIA first introduced the TAO (Train, Adapt and Optimize) framework, which eases AI model training on NVIDIA GPUs as well as NVIDIA Jetson embedded platforms, last April during GTC 2021.

The company has now announced the release of the third version of the TAO Transfer Learning Toolkit (TLT 3.0) together with some new pre-trained models at CVPR 2021 (2021 Conference on Computer Vision and Pattern Recognition).

The newly released pre-trained models are applicable to computer vision and conversational AI, and NVIDIA claims the release provides a set of powerful productivity features that boost AI development by up to 10 times.

Highlights of TAO Transfer Learning Toolkit 3.0

- Various computer vision pre-trained models for

- Computer vision:

-

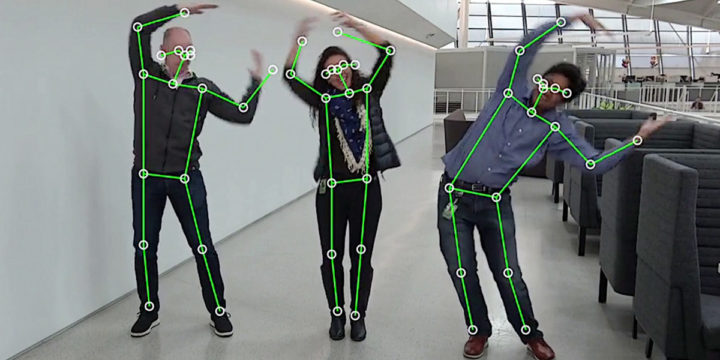

- Body Pose estimation model that supports real-time inference on edge with 9x faster inference performance than the OpenPose model.

- Emotion recognition

- Facial landmark

- License plate detection and recognition

- Heart rate estimation

- Gesture recognition

- Gaze estimation

- People segmentation via PeopleSemSegNet, a semantic segmentation network for people detection.

-

- Automatic Speech Recognition (ASR) and Natural Language Processing (NLP) models with inference samples for:

- CitriNet Speech to Text model trained on various proprietary domain-specific and open-source datasets.

- Named Entity Recognition (NER)

- Question/Answering using a new Megatron Uncased model

- Punctuation

- Text classification

- Computer vision:

- Training support on AWS, GCP, and Azure.

- Out-of-the-box deployment on NVIDIA Triton and DeepStream SDK for vision AI, and Jarvis for conversational AI.

If you’d like to get started, you can download the latest Transfer Learning Toolkit 3.0 and access developer resources on the NVIDIA developer website.

The 2D pose estimation demo above was performed with TAO Transfer Learning Toolkit 3.0 on a Jetson board. If you’d like to reproduce the demo yourself, you can check the instructions on the NVIDIA developer blog.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.
