Sipeed's TinyMaix open-source machine learning library is designed for microcontrollers and is lightweight enough to run on the Microchip ATmega328 MCU found in the Arduino UNO board and its many clones.

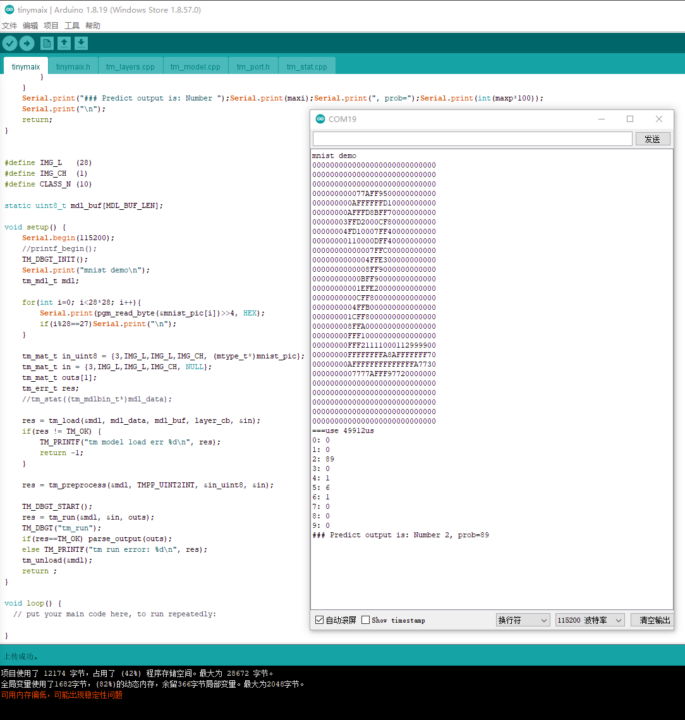

Developed during a weekend hackathon, the core code of TinyMaix is about 400 lines long, with a binary size of about 3KB and low RAM usage, enabling it to run MNIST handwritten digit classification on an ATmega328 MCU with just 2KB SRAM and 32KB flash.

TinyMaix highlights:

- Small footprint

- Core code is less than 400 lines (tm_layers.c+tm_model.c+arch_O0.h), code .text section less than 3KB

- Low RAM consumption, with the MNIST classification running on less than 1KB RAM

- Supports INT8/FP32 models, converted from Keras h5 or TFLite files

- Supports multi-architecture acceleration: ARM SIMD/NEON, MVEI, RV32P, RV64V (RISC-V packed-SIMD and vector extensions)

- User-friendly interfaces, just load/run models

- Supports full static memory config

- MaixHub Online Model Training support coming soon
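To illustrate why INT8 models suit such small MCUs, here is a minimal sketch of affine INT8 quantization in C, where each value takes 1 byte instead of the 4 bytes of an FP32 float. It is a generic illustration and not TinyMaix's actual code: the `quant_params_t` type and the `quantize_int8`/`dequantize_int8` functions are hypothetical names, and the scale and zero point are assumed to come from the model conversion step.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical quantization parameters (not a TinyMaix structure). */
typedef struct {
    float   scale;       /* real-value step per integer unit, must be > 0 */
    int32_t zero_point;  /* integer code that represents real 0.0 */
} quant_params_t;

/* Map a real value to INT8: round(x / scale) + zero_point, saturated. */
int8_t quantize_int8(float x, quant_params_t q) {
    assert(q.scale > 0.0f);
    float t = x / q.scale + (float)q.zero_point;
    if (t > 127.0f)  return 127;   /* saturate instead of wrapping */
    if (t < -128.0f) return -128;
    /* Round half away from zero without needing libm. */
    return (int8_t)(t >= 0.0f ? t + 0.5f : t - 0.5f);
}

/* Recover the approximate real value from its INT8 code. */
float dequantize_int8(int8_t v, quant_params_t q) {
    return q.scale * (float)((int32_t)v - q.zero_point);
}
```

With a scale of 0.5 and a zero point of 0, for example, the value 1.0 is stored as the integer 2, and anything above 63.5 saturates at 127. The price is a rounding error of up to half a step per value.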

Sipeed says there are already machine learning libraries such as TensorFlow Lite for Microcontrollers, microTVM, or NNoM, but TinyMaix aims to be a simpler TinyML library that does not rely on libraries like CMSIS-NN and should take about 30 minutes to understand. Considering it can run on 8-bit microcontrollers, it might be more comparable to AIfES for Arduino, which was made open-source by Fraunhofer IMS in July 2021.

Potential future features of TinyMaix include an INT16 quantized model for higher accuracy and better support for SIMD/RV32P acceleration at the cost of a larger footprint, a Concat OP for MobileNet v2 support (although it uses twice the memory and may be slow), and Winograd convolution optimization for higher inference speed at the cost of increased RAM and memory bandwidth consumption.
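The Winograd trade-off can be seen in a minimal C sketch of the textbook 1D F(2,3) case, which produces two outputs of a 3-tap filter with 4 multiplications instead of 6. This is not TinyMaix code, and the function names are hypothetical. The extra RAM shows up in the transformed filter, which needs 4 stored values instead of 3.

```c
#include <assert.h>
#include <stddef.h>

/* Transform a 3-tap filter g into 4 Winograd-domain values u.
 * Done once per filter, so its cost is amortized over all outputs. */
void winograd_f23_transform_filter(const float g[3], float u[4]) {
    assert(g != NULL && u != NULL);
    u[0] = g[0];
    u[1] = 0.5f * (g[0] + g[1] + g[2]);
    u[2] = 0.5f * (g[0] - g[1] + g[2]);
    u[3] = g[2];
}

/* Compute 2 outputs from 4 inputs using only 4 multiplications. */
void winograd_f23(const float d[4], const float u[4], float y[2]) {
    float m1 = (d[0] - d[2]) * u[0];
    float m2 = (d[1] + d[2]) * u[1];
    float m3 = (d[2] - d[1]) * u[2];
    float m4 = (d[1] - d[3]) * u[3];
    y[0] = m1 + m2 + m3;
    y[1] = m2 - m3 - m4;
}

/* Reference: the same 2 outputs computed directly with 6 multiplications. */
void direct_conv3(const float d[4], const float g[3], float y[2]) {
    y[0] = d[0] * g[0] + d[1] * g[1] + d[2] * g[2];
    y[1] = d[1] * g[0] + d[2] * g[1] + d[3] * g[2];
}
```

The 2D variants used for 3x3 convolutions follow the same idea with larger transformed tiles, which is where the additional RAM and memory bandwidth go.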

You’ll find the source code and instructions to get started in the project's GitHub repository.

Via Hackster.io

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.
