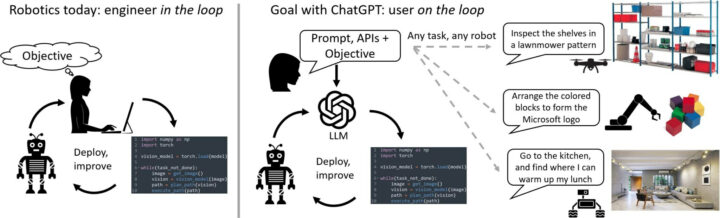

The ChatGPT AI chatbot can help engineers write programs, and we recently tested it by having it write a Python program to read data from an I2C accelerometer. It can also be used for more advanced programs: Microsoft's Autonomous Systems and Robotics Group used ChatGPT for robotics, programming robot arms, drones, and home assistant robots intuitively with natural (human) language.

The long-term goal is to let a typical user control or program a robot without having an engineer write code for the system. Microsoft explains that current robotics pipelines begin with an engineer or technical user who needs to translate the task’s requirements into code for the system. That’s slow, expensive, and inefficient because someone has to write the code, skilled workers are not cheap, and several iterations are required to get things to work properly.

With ChatGPT or other large language models (LLMs), a user could “program” the robot with human language, asking it to “inspect the shelves in a lawnmower pattern” with a drone, “arrange color blocks to form the Microsoft logo” with a robotic arm, or “go to the kitchen and find where I can warm up my lunch” using another drone.

In this post, we’ll have a close look at what the researchers did to program Elephant Robotics’ myCobot 280 robotic arm with ChatGPT to move colored blocks, including one demo that reproduces the Microsoft logo with four colored blocks.

Prompting LLMs is mostly a trial-and-error process, but the following design principles should help with writing prompts for robotics projects:

- ChatGPT must be made aware of the high-level robot APIs or function library. For the myCobot 280 robot, four functions were fed to the chatbot:

  - grab(): Turns on the suction pump to grab an object

  - release(): Turns off the suction pump to release an object

  - get_position(object): Given a string with an object name, returns the coordinates and orientation the suction pump needs to touch the top of the object [X, Y, Z, Yaw, Pitch, Roll]

  - move_to(position): Moves the suction pump to a given position [X, Y, Z, Yaw, Pitch, Roll]

- The text prompt should then describe the task goal while also explicitly stating which functions from the high-level library are available. The prompt can also contain information about task constraints or how ChatGPT should form its answers (specific coding language, using auxiliary parsing elements).

Example for myCobot 280:

“You are allowed to create new functions using these, but you are not allowed to use any other hypothetical functions.”

“Use Python code to express your solution”

“In the scene there are the following objects: white pad, box, blue block, yellow block, green block, red block, brown block 1, brown block 2. The blocks are cubes with height of 40 mm and are located inside the box that is 80 mm deep. The blocks can only be reached from the top of the box. I want you to learn the skill of picking up a single object and holding it. For that you need to move a safe distance above the object (100 mm), reach the object, grab it and bring it up.”

- At this point, ChatGPT will output Python code. The user then evaluates ChatGPT’s code output, either through direct inspection or using a simulator, and uses natural language to give ChatGPT feedback on the answer’s quality and safety, and to request modifications where needed.

- When the user is happy with the result, the code can be deployed onto the robot.
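The steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `API_DOCS`, `build_prompt()`, and `undefined_calls()` are hypothetical names that do not come from Microsoft's work, and the check covers just the "direct inspection" part of the evaluation by flagging calls to functions that are neither in the function library nor defined in the reply itself.

```python
import ast
import builtins

# The four high-level functions described above, keyed by name.
API_DOCS = {
    "grab": "grab(): turn on the suction pump to grab an object",
    "release": "release(): turn off the suction pump to release an object",
    "get_position": "get_position(object): return [X, Y, Z, Yaw, Pitch, Roll] "
                    "for the named object",
    "move_to": "move_to(position): move the suction pump to "
               "[X, Y, Z, Yaw, Pitch, Roll]",
}

# Constraints on how the model should answer, taken from the example prompt.
CONSTRAINTS = [
    "You are allowed to create new functions using these, but you are not "
    "allowed to use any other hypothetical functions.",
    "Use Python code to express your solution.",
]


def build_prompt(scene: str, task: str) -> str:
    """Assemble the prompt: function library, constraints, scene, then task."""
    lines = ["You can control a robot arm with these functions:"]
    lines += [f"- {doc}" for doc in API_DOCS.values()]
    lines += CONSTRAINTS
    lines += [scene, task]
    return "\n".join(lines)


def undefined_calls(code: str) -> set:
    """Return called names that are not in the API, not Python builtins,
    and not defined as functions inside `code` itself."""
    tree = ast.parse(code)
    defined = {n.name for n in ast.walk(tree) if isinstance(n, ast.FunctionDef)}
    called = {
        n.func.id
        for n in ast.walk(tree)
        if isinstance(n, ast.Call) and isinstance(n.func, ast.Name)
    }
    return called - defined - set(API_DOCS) - set(dir(builtins))
```

A non-empty result from `undefined_calls()` is a hint to go back to ChatGPT with feedback in natural language. It does not replace testing in a simulator, since it says nothing about whether the motions themselves are safe.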

It’s probably best to start with easier tasks, like moving blocks with the robotic arm, before moving on to more complex instructions such as re-creating a logo. That’s what Microsoft did with the myCobot 280 robotic arm, and you can check out the complete prompt/discussion on GitHub. The final code looks like this:

```python
# get the positions of the blocks and the white pad
white_pad_pos = get_position("white pad")
blue_pos = get_position("blue block")
yellow_pos = get_position("yellow block")
red_pos = get_position("red block")
green_pos = get_position("green block")

# pick up the blue block
pick_up_object("blue block")

# calculate the position to place the blue block
place_pos = [white_pad_pos[0]-20, white_pad_pos[1]-20, white_pad_pos[2]+40, 0, 0, 0]

# place the blue block on the white pad
place_object(place_pos)

# pick up the yellow block
pick_up_object("yellow block")

# calculate the position to place the yellow block
place_pos = [white_pad_pos[0]+20, white_pad_pos[1]-20, white_pad_pos[2]+40, 0, 0, 0]

# place the yellow block on the white pad
place_object(place_pos)

# pick up the red block
pick_up_object("red block")

# calculate the position to place the red block
place_pos = [white_pad_pos[0]-20, white_pad_pos[1]+20, white_pad_pos[2]+40, 0, 0, 0]

# place the red block on the white pad
place_object(place_pos)

# pick up the green block
pick_up_object("green block")

# calculate the position to place the green block
place_pos = [white_pad_pos[0]+20, white_pad_pos[1]+20, white_pad_pos[2]+40, 0, 0, 0]

# place the green block on the white pad
place_object(place_pos)
```
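The listing relies on `pick_up_object()` and `place_object()`, which are not among the four API functions and are not shown here. The sketch below is one plausible way they could look, based only on the pick-up skill described in the prompt (hover 100 mm above, reach, grab, lift). It is an assumption, not the code from Microsoft's conversation. The robot API is replaced with simulated stand-ins that just log commands, and the scene coordinates are invented, so the sketch runs without hardware.

```python
# Simulated stand-ins for the robot API (assumption: no real hardware).
# The coordinates are made up for illustration, in millimetres.
SCENE = {
    "white pad": [200.0, 0.0, 0.0, 0, 0, 0],
    "blue block": [150.0, -60.0, 40.0, 0, 0, 0],
    "yellow block": [150.0, -20.0, 40.0, 0, 0, 0],
    "red block": [150.0, 20.0, 40.0, 0, 0, 0],
    "green block": [150.0, 60.0, 40.0, 0, 0, 0],
}
log = []  # every command sent to the simulated arm, in order


def get_position(name):
    return list(SCENE[name])


def move_to(position):
    log.append(("move_to", list(position)))


def grab():
    log.append(("grab",))


def release():
    log.append(("release",))


SAFE_HEIGHT = 100  # mm above the target, as stated in the prompt


def above(position, dz=SAFE_HEIGHT):
    """Return a copy of `position` raised by `dz` millimetres."""
    raised = list(position)
    raised[2] += dz
    return raised


def pick_up_object(name):
    """Hover above the object, descend, grab it, and lift it back up."""
    pos = get_position(name)
    move_to(above(pos))
    move_to(pos)
    grab()
    move_to(above(pos))


def place_object(position):
    """Hover above the target, descend, release, and retreat upwards."""
    move_to(above(position))
    move_to(position)
    release()
    move_to(above(position))


# The same 2x2 layout as the listing, expressed as (dx, dy) offsets in mm
# from the white pad position.
LAYOUT = {
    "blue block": (-20, -20),
    "yellow block": (20, -20),
    "red block": (-20, 20),
    "green block": (20, 20),
}


def build_logo():
    pad = get_position("white pad")
    for name, (dx, dy) in LAYOUT.items():
        pick_up_object(name)
        # +40 mm in Z matches the block height used in the listing.
        place_object([pad[0] + dx, pad[1] + dy, pad[2] + 40, 0, 0, 0])
```

Calling `build_logo()` and then printing `log` shows the sequence of moves that would be sent to the arm, which is a cheap way to inspect the plan before trying it on a simulator or the real robot.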

The user was not involved in writing the code and only defined the API and provided descriptions and instructions for the project. You can also watch the video to see how ChatGPT was leveraged to control the myCobot 280 robot.

Other example prompts to control robots can be found on PromptCraft, a collaborative open-source platform released by Microsoft on GitHub. The repository also includes an AirSim robotics simulator environment with ChatGPT integration that anyone can use to get started.

If you want to play with ChatGPT and robotics, you’ll find more hardware robotic platforms on Elephant Robotics’ website, and the company is committed to providing more solutions in the education field, such as the AI Kit 2023, software upgrades for myCobot 280, and more. More details about Microsoft’s work on ChatGPT for robotics can be found in a blog post or by directly reading the research paper.

This account is for paid-for, sponsored posts. We do not collect any commission on sales, and content is usually provided by the advertisers themselves, although we sometimes write it for our clients.
