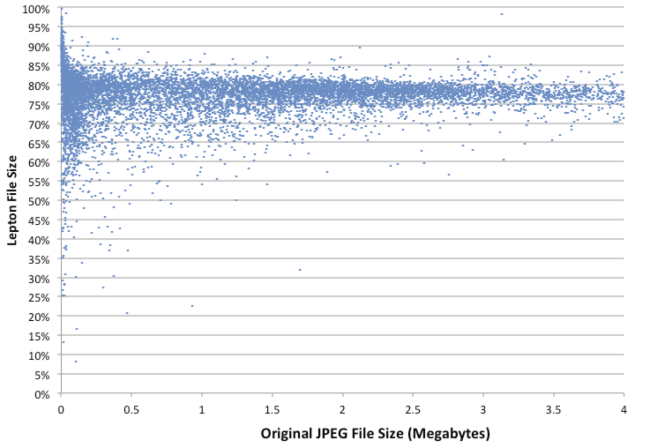

Dropbox stores billions of images on its servers, most of them JPEGs, so shrinking those pictures can have a big impact on its storage requirements. To that end, the company developed Lepton, an image compression tool that achieves, on average, 22% lossless compression on the images stored in its cloud.

Compression and decompression speed also matter, since files are compressed when uploaded and decompressed on the fly when downloaded, making the whole process transparent to end users, who only ever see JPEG photos. The company claims average speeds of 5 MB/s for compression and 15 MB/s for decompression.

The good news is that the company released its Lepton implementation on GitHub, so in theory it could also be used to increase the capacity of a NAS holding lots of pictures. So I've given it a try in a terminal window in Ubuntu 14.04, but it can also be built on Windows with Visual Studio:

```
git clone https://github.com/dropbox/lepton
cd lepton
./autogen.sh
./configure
make -j8
make check -j8
```

If everything goes well, the last step should report that all tests passed:

```
============================================================================
Testsuite summary for lepton 0.01
============================================================================
# TOTAL: 40
# PASS:  40
# SKIP:  0
# XFAIL: 0
# FAIL:  0
# XPASS: 0
# ERROR: 0
```

I also installed it in my path with:

```
sudo make install
```

Now let’s go to some directory with photos I took with a DSLR camera:

```
cd ~/Pictures/2016/07/07/
ls
IMG_2652.JPG  IMG_2661.JPG  IMG_2670.JPG  IMG_2680.JPG  IMG_2689.JPG
IMG_2653.JPG  IMG_2662.JPG  IMG_2671.JPG  IMG_2681.JPG  IMG_2690.JPG
IMG_2654.JPG  IMG_2663.JPG  IMG_2672.JPG  IMG_2682.JPG  IMG_2691.JPG
IMG_2655.JPG  IMG_2664.JPG  IMG_2673.JPG  IMG_2683.JPG  IMG_2692.JPG
IMG_2656.JPG  IMG_2665.JPG  IMG_2675.JPG  IMG_2684.JPG  IMG_2693.JPG
IMG_2657.JPG  IMG_2666.JPG  IMG_2676.JPG  IMG_2685.JPG  IMG_2694.JPG
IMG_2658.JPG  IMG_2667.JPG  IMG_2677.JPG  IMG_2686.JPG  IMG_2695.JPG
IMG_2659.JPG  IMG_2668.JPG  IMG_2678.JPG  IMG_2687.JPG  IMG_2696.JPG
IMG_2660.JPG  IMG_2669.JPG  IMG_2679.JPG  IMG_2688.JPG
jaufranc@FX8350:~/Pictures/2016/07/07$ du -h
264M	.
```

That's 44 pictures totaling 264 MB. I'll compress them all, but first, let's try a single photo to check the size difference and confirm that the compression is indeed lossless.

```
lepton IMG_2652.JPG IMG_2652.lep
```

The Lepton file is definitely smaller (21.66% smaller):

```
ls -lh IMG_2652.*
-rw-rw-r-- 1 jaufranc jaufranc 6.0M Jul  7 23:27 IMG_2652.JPG
-rw------- 1 jaufranc jaufranc 4.7M Jul 15 11:40 IMG_2652.lep
```

Now let’s uncompress the file and see if there’s any difference:

```
lepton IMG_2652.lep IMG_2652_uncompress.jpg
diff IMG_2652.JPG IMG_2652_uncompress.jpg
rm IMG_2652_uncompress.jpg
```

The diff did not generate any output, so the compression is indeed lossless.
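The same check can be scripted for a whole folder once it has been compressed. The function below is a minimal sketch, not something shipped with Lepton: it assumes the `IMG_xxxx.JPG` / `IMG_xxxx.lep` naming used in this post, decompresses each `.lep` file into a fresh temporary directory with the same `lepton input output` invocation shown above, and compares the result with `cmp -s`, which prints nothing and only reports a difference through its exit status. The name `verify_lepton_roundtrip` is hypothetical.

```shell
# Hypothetical helper (not part of Lepton): check that every .lep file
# decompresses back to a byte-identical copy of its original .JPG.
# $1 = directory holding the .JPG originals (default: current directory)
# $2 = directory holding the .lep files (default: same as $1)
verify_lepton_roundtrip() {
    local jpg_dir="${1:-.}"
    local lep_dir="${2:-$jpg_dir}"
    local failures=0 work jpg name lep out
    work="$(mktemp -d)" || return 2

    for jpg in "$jpg_dir"/*.JPG; do
        [ -e "$jpg" ] || continue              # the glob matched nothing
        name="$(basename "$jpg" .JPG)"
        lep="$lep_dir/$name.lep"
        out="$work/$name.jpg"
        if [ ! -f "$lep" ]; then
            echo "missing: $lep" >&2
            failures=$((failures + 1))
            continue
        fi
        # Decompress to a new file, then compare it byte for byte.
        if lepton "$lep" "$out" >/dev/null 2>&1 && cmp -s "$jpg" "$out"; then
            echo "ok: $jpg"
        else
            echo "MISMATCH: $jpg" >&2
            failures=$((failures + 1))
        fi
        rm -f "$out"
    done

    rm -rf "$work"
    [ "$failures" -eq 0 ]                      # status 0 only if every file matched
}
```

Once the `.lep` files have been moved into their own subdirectory, as done further down, the call would be `verify_lepton_roundtrip ~/Pictures/2016/07/07 ~/Pictures/2016/07/07/lepton`; a non-zero exit status means at least one file was missing or did not match.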

Time to compress all 44 photos:

```
time for j in *.JPG; do lepton "$j" "${j%.JPG}.lep"; done
```

I ran this on a machine with an AMD FX-8350 processor, and 8 cores were used during compression. The command took 5 minutes and 8 seconds, or about 7 seconds per picture. What about the size?
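For a rough comparison with the 5 MB/s average quoted by Dropbox, the figures from this run can be turned into per-picture time and throughput. This is only a back-of-the-envelope sketch: `rate_summary` is a hypothetical helper, the size input is the 264 MB reported by `du -h`, and the timing is the wall-clock time of the whole loop, which also includes starting `lepton` 44 times and reading and writing the files.

```shell
# Hypothetical helper: derive per-picture time and throughput from a batch run.
# $1 = total size of the input files in MB
# $2 = elapsed wall-clock time in seconds
# $3 = number of files processed
rate_summary() {
    awk -v mb="$1" -v s="$2" -v n="$3" 'BEGIN {
        printf "%.1f s per picture, %.2f MB/s\n", s / n, mb / s
    }'
}
```

With the numbers above (5 minutes and 8 seconds is 308 seconds), `rate_summary 264 308 44` prints `7.0 s per picture, 0.86 MB/s`. That is well below Dropbox's figure, but the hardware, the images, and the way time is measured all differ, so the two numbers are not directly comparable.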

```
mkdir lepton
mv *.lep lepton
cd lepton
du -h
207M	.
```

That's 207 MB, down from 264 MB, a reduction of about 21.6%.
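`du -h` reports allocated disk space rounded for display, so the ratio above is approximate. To compute the saving from exact file sizes, a small sketch like the one below can sum the apparent sizes of both sets. It assumes GNU coreutils, where `stat -c %s` prints a file's size in bytes (on BSD and macOS the equivalent is `stat -f %z`); `total_bytes` and `savings_pct` are hypothetical helper names.

```shell
# Hypothetical helpers: exact byte totals and percentage saved.
# Assumes GNU coreutils `stat -c %s` (file size in bytes).

# Print the sum of the sizes, in bytes, of all regular files given as arguments.
total_bytes() {
    local total=0 f
    for f in "$@"; do
        [ -f "$f" ] || continue                # skip missing files and directories
        total=$((total + $(stat -c %s "$f")))
    done
    echo "$total"
}

# Print how much smaller $2 is than $1, as a percentage with two decimals.
savings_pct() {
    awk -v o="$1" -v c="$2" 'BEGIN { printf "%.2f%%\n", (o - c) * 100 / o }'
}
```

From the photo folder, `savings_pct "$(total_bytes *.JPG)" "$(total_bytes lepton/*.lep)"` prints the exact saving for the whole set, and passing single files (for example `IMG_2652.JPG` and `lepton/IMG_2652.lep`) gives the per-picture figure. Fed the rounded `du` values instead, `savings_pct 264 207` prints `21.59%`.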

Via Phoronix

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.

The blog post explaining the algorithm is interesting since it works with predictions, so I would assume it's optimized for JPEGs spat out by mobile phones and digital cameras (which will make up 99.99% of the images stored on Dropbox). I will test it later with JPEG images created by the optimized encoders we use in various workflows (it's always a trade-off between speed, image quality and size). BTW: it should read just './configure' (without .sh here), and compilation fails on ARM:

```
In file included from src/vp8/decoder/boolreader.hh:33:0,
                 from src/vp8/decoder/boolreader.cc:15:
src/vp8/decoder/../model/numeric.hh:11:23: fatal error: smmintrin.h: No such file or directory
compilation terminated.
Makefile:1814: recipe for target…
```

That was very informative. I’ve been hoarding so many images, that I’m compelled to try it out as well! Many thanks.

Should now work on platforms other than x86 (SSE4 instructions used) when doing