Most technological advances improve people’s lives, and with costs coming down dramatically over the years, they become available to more people. But technology can also be used for bad, for example by governments and some hackers. Today, I’ve come across two cheap hardware devices that could be considered evil. The first one is actually pretty harmless and can be used for education, but it disconnects you from your WiFi, which may bring severe psychological trauma to some people, but should not be life-threatening. The other is downright scary: cheap targeted killing machines.

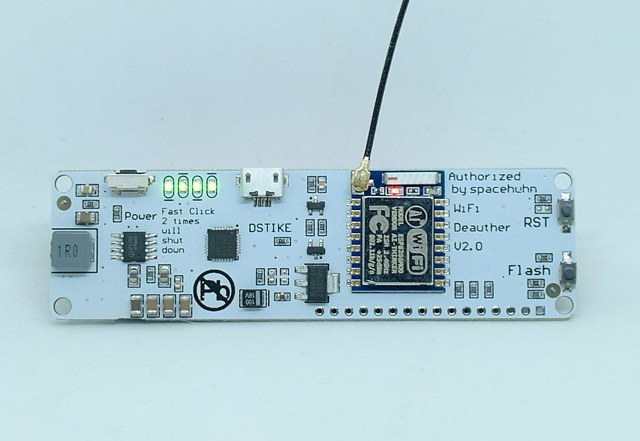

WiFi Deauther V2.0 board

Specifications for this naughty little board:

- Wireless Module based on ESP8266 WiSoC

- USB – 1x micro USB port (connector type changed, more stable)

- Expansion – 17-pin header with 1x ADC, 10x GPIOs, power pins

- Misc – 1x power switch, battery status LEDs

- Power Supply

  - 5 to 12V via micro USB port

  - Support for 18650 battery with charging circuit (over-charge protection, over-discharge protection)

- Dimensions – 10×3 cm

The board is pre-flashed with the open source ESP8266 deauther firmware, which allows you to perform a deauth attack with an ESP8266 against selected networks. You can select a target device (identified by its MAC address rather than an IP address), and the board will then disconnect that node constantly, either blocking the connection or slowing it down. You don’t need to be connected to the access point or know the password for it to work. You’ll find more details on how it works on the project’s GitHub page. Note: The project is a proof of concept for testing and educational purposes.
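To see why no password is needed, it helps to look at what a deauthentication frame contains. The Python sketch below only parses such a frame; it does not send anything. The field layout follows the standard 802.11 management frame header, but the function names and the sample bytes (MAC addresses, reason code 7) are made up for illustration and are not taken from the deauther firmware.

```python
import struct

MGMT_TYPE = 0x0        # 802.11 frame type 0 = management
DEAUTH_SUBTYPE = 0xC   # management subtype 12 = deauthentication
PROTECTED_FLAG = 0x4000  # "Protected Frame" bit in the frame control field


def format_mac(raw: bytes) -> str:
    """Render 6 raw bytes as a colon-separated MAC address."""
    return ":".join(f"{b:02x}" for b in raw)


def parse_deauth(frame: bytes):
    """Describe a deauthentication frame, or return None for anything else.

    Expects the frame to start at the 802.11 MAC header (no radiotap header).
    """
    if len(frame) < 26:  # 24-byte MAC header + 2-byte reason code
        return None
    frame_control, _duration = struct.unpack_from("<HH", frame, 0)
    frame_type = (frame_control >> 2) & 0x3
    subtype = (frame_control >> 4) & 0xF
    if frame_type != MGMT_TYPE or subtype != DEAUTH_SUBTYPE:
        return None
    protected = bool(frame_control & PROTECTED_FLAG)
    # With Protected Management Frames the body is encrypted, so the
    # reason code cannot be read directly at offset 24.
    reason = None if protected else struct.unpack_from("<H", frame, 24)[0]
    return {
        "receiver": format_mac(frame[4:10]),
        "transmitter": format_mac(frame[10:16]),
        "bssid": format_mac(frame[16:22]),
        "reason": reason,
        "protected": protected,
    }


# Hypothetical sample: frame control, duration, three MAC addresses,
# sequence control, then reason code 7.
SAMPLE_FRAME = bytes.fromhex(
    "c0003a01" "aabbccddeeff" "112233445566" "112233445566" "0000" "0700"
)

if __name__ == "__main__":
    print(parse_deauth(SAMPLE_FRAME))
```

An unprotected frame carries nothing but addresses and a reason code, so there is no secret for the sender to know. Any device that can see the MAC addresses of the access point and the client can forge one.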

The WiFi Deauther V2.0 board can be purchased on Tindie or Aliexpress for $10.80 plus shipping.

A.I. Powered Mini Killer Drones

The good news is that those do not exist yet (at least not for civilians), but the video shows what could happen once people, companies, or governments weaponize face recognition and drone technology to design mini drones capable of targeted killings. You could fit the palm-sized drones with a few grams of explosives (or lethal poison) and tell them who to target; once they find that person, they would land on the victim’s skull and trigger the explosive for an instant kill. Organizations or governments could also have an army of those drones for killing based on metadata obtained from phone records, social media posts, etc. The fictional video shows how those drones could work, and what may happen to society as a consequence.

The technology is already here for such devices. Currently you could probably get a $400+ DJI Spark drone to handle face recognition, but considering that inexpensive $20+ miniature drones and $50 smart cameras are available (although not quite good enough right now), sub-$100 drones with face recognition should be up for sale in a couple of years. The explosive and triggering mechanism would still need to be added, but I’m not privy to the costs… Nevertheless, it should be technically possible to manufacture such a machine, even for individuals, for a few hundred dollars. Link to fictitious StratoEnergetics company.

The Future of Life Institute has published an open letter against autonomous weapons that has been signed by various A.I. and robotics researchers, and other notable endorsers, stating that “starting a military AI arms race is a bad idea, and should be prevented by a ban on offensive autonomous weapons beyond meaningful human control”, but it will likely be tough to keep the genie in the bottle.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.


This post is just stupid and superficial writing.

how you jump from “disconnects you from your WiFi” to “design mini drones capable of targeted killings.”

had to check if i was on infowars xD

It is okay the CIA are planning to make the phone battery explode when you have the phone next to your ear. No need to worry about the drones. 😉

@theguyuk

+1

( unless people use Lithium ceramic battery, of course 🙂 )

Useless if your WIFI uses 802.11w Protected Management Frames.

@bantoto masabo sigola

Just happen to have seen both stories on the same day.

Well, maybe the WiFi deauther can be used against the killer drone… 🙂

@mi7chy: in this case the good old method involving the use of 4 video transmitters on distinct but overlapping channels will still work pretty well 😉

The concluding comment at 7 min 07 sec. is by Stuart Russell, Professor of Computer Science, U.C., Berkeley. He ends with:

“…the window to act is closing fast. Whirrrrrr – BANG!”

The deauther is unlawful for use in the United States. The presence of the FCC logo on the module does not indicate approval of the Federal Communications Commission for the entire device.

Isn’t it what Obama did for years, without any warrant or court order ? Of course haven’t heard someone complain …