Amazon Web Services (AWS) has launched DeepLens, the “world’s first deep learning enabled video camera for developers”. Powered by an Intel Atom X5 processor with 8GB of RAM, and featuring a 4MP (1080p) camera, the fully programmable system runs Ubuntu 16.04. It is designed to help developers expand their deep learning skills, with Amazon providing tutorials, code, and pre-trained models.

AWS DeepLens specifications:

- SoC – Intel Atom X5 Processor with Intel Gen9 HD graphics (106 GFLOPS of compute power)

- System Memory – 8GB RAM

- Storage – 16GB eMMC flash, micro SD slot

- Camera – 4MP (1080p) camera using MJPEG, H.264 encoding

- Video Output – micro HDMI port

- Audio – 3.5mm audio jack, and HDMI audio

- Connectivity – Dual band WiFi

- USB – 2x USB 2.0 ports

- Misc – Power button; camera, WiFi and power status LEDs; reset pinhole

- Power Supply – TBD

- Dimensions – 168 x 94 x 47 mm

- Weight – 296.5 grams
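
The 106 GFLOPS figure is a theoretical FP32 peak for the integrated GPU. This kind of number is usually derived from the execution unit (EU) count and the GPU clock. Each Intel Gen9 EU has two SIMD-4 floating-point units, and each can issue a fused multiply-add (two FLOPs) per clock, which gives 16 FLOPs per EU per cycle. The sketch below shows the arithmetic. The 12 EUs and 550 MHz it uses are assumptions chosen because they reproduce the quoted figure. Amazon's spec list does not state either value.

```python
def peak_gflops(num_eus: int, clock_mhz: float, flops_per_eu_per_clock: int = 16) -> float:
    """Theoretical FP32 peak of an Intel Gen9 GPU, in GFLOPS.

    16 FLOPs per EU per clock = 2 SIMD-4 FPUs x 4 lanes x 2 (fused multiply-add).
    """
    return num_eus * flops_per_eu_per_clock * clock_mhz / 1000.0


# Assumed values, not taken from Amazon's specifications: 12 EUs at 550 MHz.
print(f"{peak_gflops(12, 550):.1f} GFLOPS")  # 105.6 GFLOPS
```

Real workloads rarely come close to this peak, so the figure is best read as an upper bound.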

The camera is not limited to inference: it can also be used to train deep learning models with Amazon's cloud infrastructure. In terms of performance, the camera can infer 14 images/second on AlexNet and 5 images/second on ResNet-50 with a batch size of 1.
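
With a batch size of 1, and assuming images are processed one after another, throughput converts directly into per-image latency. The sketch below performs that conversion. It also estimates how many frames of a live stream the model can keep up with. The 30 fps stream rate is a hypothetical value, since the spec list does not give the camera's frame rate.

```python
import math

# Throughput figures quoted by Amazon (batch size of 1).
THROUGHPUT_IPS = {"AlexNet": 14.0, "ResNet-50": 5.0}

STREAM_FPS = 30.0  # hypothetical camera frame rate, not from the spec sheet


def latency_ms(images_per_second: float) -> float:
    """Average time spent on one image, in milliseconds."""
    return 1000.0 / images_per_second


def frame_stride(stream_fps: float, images_per_second: float) -> int:
    """Analyze one frame out of every `stride` frames to keep up with the stream."""
    return math.ceil(stream_fps / images_per_second)


for name, ips in THROUGHPUT_IPS.items():
    print(f"{name}: {latency_ms(ips):.1f} ms/image, "
          f"analyze 1 frame in {frame_stride(STREAM_FPS, ips)}")
# AlexNet: 71.4 ms/image, analyze 1 frame in 3
# ResNet-50: 200.0 ms/image, analyze 1 frame in 6
```

In practice, a model that cannot match the stream's frame rate simply works on the most recent frame and skips the ones that arrived during inference.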

Six project samples are currently available, including object detection, hot dog not hot dog, cat and dog, activity detection, and face detection. Read Amazon's blog post to see how to get started.

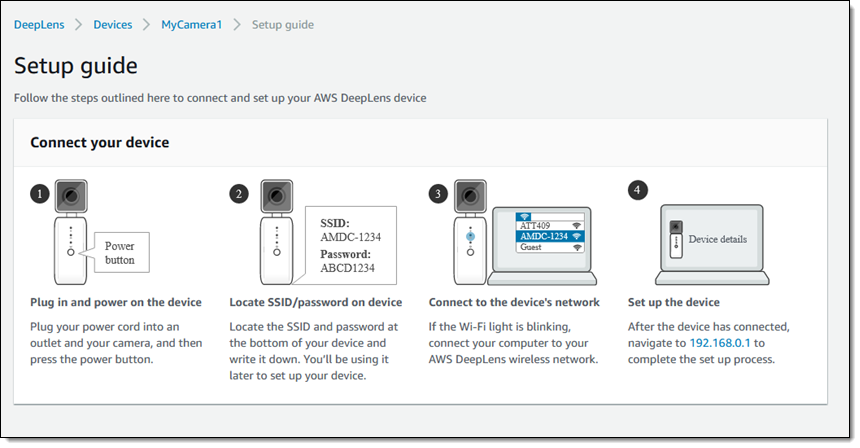

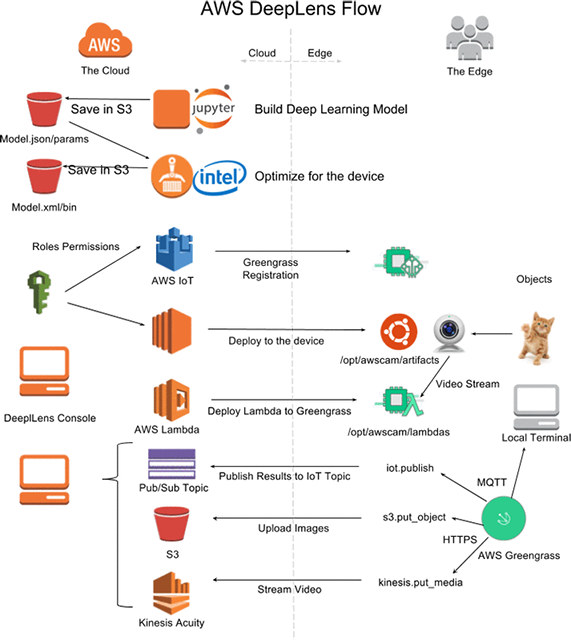

But if you want to make your own project, a typical workflow would be as follows:

- Train a deep learning model using Amazon SageMaker

- Optimize the trained model to run on the AWS DeepLens edge device

- Develop an AWS Lambda function that loads the model and uses it to run inference on the video stream

- Deploy the AWS Lambda function to the AWS DeepLens device using AWS Greengrass

- Wire the edge AWS Lambda function to the cloud to send commands and receive inference output
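
The Lambda steps above (load a model, run inference on the video stream, send the output to the cloud) boil down to a simple loop. The sketch below is a minimal, hypothetical version of that loop in plain Python. The frame source, the model, and the publisher are stand-in callables. This is not the DeepLens or Greengrass SDK. On the device, those roles are filled by Amazon's own libraries, and results typically go to an AWS IoT MQTT topic. The topic name and labels in the usage part are made up for illustration.

```python
import json
from typing import Callable, Iterable, List, Tuple

# Hypothetical stand-in types, NOT the DeepLens/Greengrass SDK interfaces.
Frame = List[float]
Model = Callable[[Frame], List[float]]   # returns one score per label
Publisher = Callable[[str, str], None]   # (topic, payload) -> None


def top_k(scores: List[float], labels: List[str], k: int = 3) -> List[Tuple[str, float]]:
    """Return the k highest-scoring (label, score) pairs."""
    ranked = sorted(zip(labels, scores), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


def run_inference_loop(frames: Iterable[Frame], model: Model, labels: List[str],
                       publish: Publisher, topic: str, k: int = 3) -> int:
    """Run the model on each frame and publish the top-k results as JSON.

    Returns the number of frames processed.
    """
    count = 0
    for frame in frames:
        scores = model(frame)
        results = [{"label": label, "score": round(score, 3)}
                   for label, score in top_k(scores, labels, k)]
        publish(topic, json.dumps({"frame": count, "results": results}))
        count += 1
    return count


# Usage with dummy data: a fake "model" that always favors the first label.
sent = []
n = run_inference_loop(
    frames=[[0.0], [1.0]],
    model=lambda frame: [0.9, 0.1],
    labels=["hot dog", "not hot dog"],
    publish=lambda topic, payload: sent.append((topic, payload)),
    topic="deeplens/inference",  # hypothetical topic name
    k=1,
)
print(n, sent[0][1])
```

Passing the model and publisher in as plain callables keeps the loop testable off-device. On the camera, the same structure would wrap the real frame grabber and the Greengrass publishing client.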

These steps are explained in detail on the AWS blog.

Intel also published a press release explaining how they are involved in the project:

DeepLens uses Intel-optimized deep learning software tools and libraries (including the Intel Compute Library for Deep Neural Networks, Intel clDNN) to run real-time computer vision models directly on the device for reduced cost and real-time responsiveness.

…

Developers can start designing and creating AI and machine learning products in a matter of minutes using the preconfigured frameworks already on the device. Apache MXNet is supported today, and Tensorflow and Caffe2 will be supported in 2018’s first quarter.

AWS DeepLens can be pre-ordered today for $249 by US customers only (or those using a forwarding service) with shipping expected on April 14, 2018. Visit the product page on AWS for more details.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.

Atom + Gen9 at ~100+ GFLOPS leads me to deduce it’s an Apollo Lake N3350 SoC.

$249 seems a reasonable price for the hardware, but I also suspect it is a USB-attached camera and any old Ubuntu-based machine will be able to run the software. Plus I figure my desktop is going to be way faster than this hardware, and I already own it.

@ASM

It’s an X5 processor according to Intel, so not N3350. It has to be Cherry Trail, as I don’t know any X5 Apollo Lake processor.