Warning note: While there won’t be any NSFW photos in this post, there will be some photos of ladies in light clothing (e.g. bikini) and “naked” animals for testing purposes…

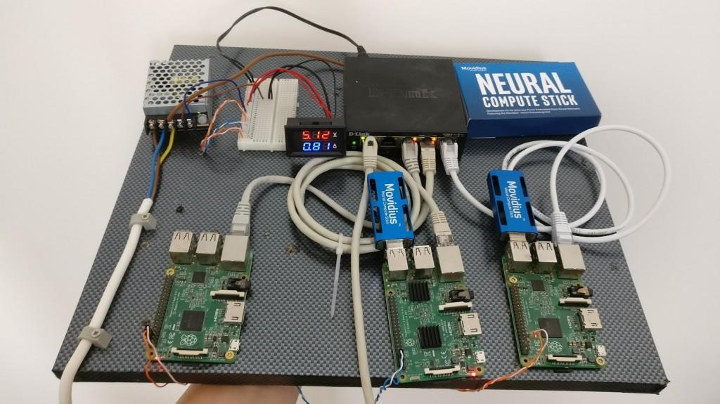

Earlier this year, Intel released the Movidius Neural Compute Stick for low-power image recognition at the edge, and we’ve seen it work just fine with a Raspberry Pi 3 board, delivering three times the performance of an inference solution leveraging the board’s VideoCore IV GPU.

Christian Haschek runs PictShare, a photo hosting site based on the open-source software of the same name, which lets users upload images anonymously. He soon found out that at least one user had uploaded child pornography. He contacted the authorities, but then wondered whether there might be others. Since there were far too many photos on the website to check manually, he looked for an automated solution and settled on a few Raspberry Pi 3 boards, some Neural Compute Sticks, and Yahoo’s deep learning solution for detecting NSFW images.

He completed the setup with an Ethernet switch, plus a solar panel and related parts to power the whole thing, for around $300 in total. The result is called the “NaaS cluster board”, where NaaS stands for “Nudity-detection as a service”.

He then wrote a script to run the images on his website through the setup with a 30% probability threshold, and found 16 images containing child pornography. The system detects all sorts of pornography, not only child pornography, but it still helps flag suspicious photos. You can try it yourself at https://nsfw.haschek.at/.

I started with a sample showing some pink skin: a few “naked pigs”.

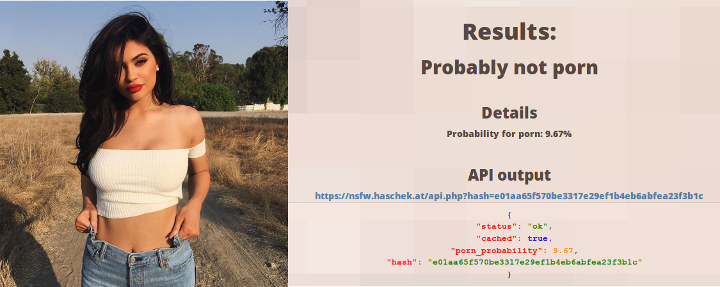

0.16% porn probability, so it was not fooled by that one. Next, I switched to a photo of a lady showing some skin, red lips, etc…

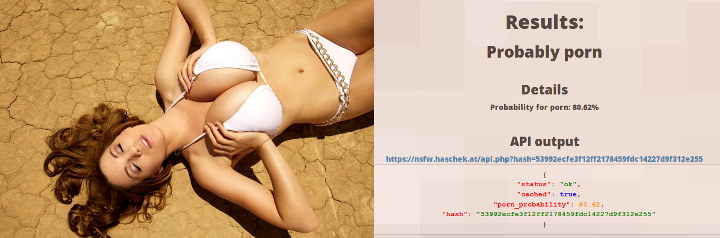

The probability increased to 9.67%, as one might have expected, but still stayed below the 30% threshold. I took the last image from an eBay listing titled “Hot Sexy Girl Bikini Big Boobs Titts Wall Print POSTER US”.

80.62% probability. I did not know eBay was in this kind of business, but Yahoo’s NSFW solution thinks otherwise. I’ll let you be the judge.
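The flagging rule itself is simple. Below is a minimal Python sketch, assuming the classifier returns a probability between 0 and 1 for each image and that anything at or above 30% gets flagged. The function name `flag_images` and the file names are hypothetical, and this is not Christian’s actual script. It uses the three scores measured above as input.

```python
# Hypothetical sketch: flag images whose NSFW score reaches a threshold.
# Assumes scores are probabilities in [0, 1] returned by an NSFW classifier;
# this is an illustration, not the script used on PictShare.

THRESHOLD = 0.30  # the 30% cut-off mentioned in the article

def flag_images(scores, threshold=THRESHOLD):
    """Return the names of images whose score is at or above the threshold,
    sorted from most to least likely to be NSFW."""
    flagged = [(name, p) for name, p in scores.items() if p >= threshold]
    flagged.sort(key=lambda item: item[1], reverse=True)
    return [name for name, _ in flagged]

if __name__ == "__main__":
    # Scores reported by the online demo for the three test images in this post
    # (the file names are made up for illustration).
    sample = {
        "pigs.jpg": 0.0016,
        "lady.jpg": 0.0967,
        "ebay_poster.jpg": 0.8062,
    }
    print(flag_images(sample))  # ['ebay_poster.jpg']
```

Only the eBay poster crosses the threshold. A fairly low cut-off like 30% accepts more false positives in exchange for missing fewer images, which seems a reasonable trade-off when the goal is to flag suspicious photos for review.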

Christian’s setup ended up being quite useful, as a larger photo sharing site, which wishes to remain anonymous, used his system. It took one week to go through all their images, and they found 3,292 images containing child pornography and took appropriate action.

Thanks to theguyuk for the tip. Via BBC

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.

“there won’t be any NSFW photos in this post” … depends on your work environment, I guess; both pictures with ladies would not be suitable for my work. Probably a culture thing.

Are you working in Iran?