NVIDIA Jetson Xavier NX SoM was launched last month for $459. But while some third-party carrier boards were also announced at the time, the company had yet to offer a Jetson Xavier NX Developer Kit as it did for Jetson Nano.

But as the GTC 2020 conference is now taking place in the kitchen of Jensen Huang, NVIDIA CEO, the company had plenty to announce, including the Jetson Xavier NX Developer Kit as well as “Cloud-Native” support for all Jetson boards and modules.

NVIDIA Jetson Xavier NX Developer Kit

- CPU – 6-core NVIDIA Carmel ARMv8.2 64-bit processor with 6 MB L2 + 4 MB L3 cache

- GPU – NVIDIA Volta architecture with 384 NVIDIA CUDA cores and 48 Tensor cores

- Accelerators

  - 2x NVDLA Engines

  - 7-Way VLIW Vision Processor

- Memory – 8 GB 128-bit LPDDR4x 51.2GB/s

- Storage – MicroSD slot, M.2 Key M socket for NVMe SSD

- Video Output – HDMI and DisplayPort

- Video

  - Encode – 2x 4Kp30 | 6x 1080p60 | 14x 1080p30 (H.265/H.264)

  - Decode

    - 2x 4Kp60 | 4x 4Kp30 | 12x 1080p60 | 32x 1080p30 (H.265)

    - 2x 4Kp30 | 6x 1080p60 | 16x 1080p30 (H.264)

- Camera – 2x MIPI CSI-2 D-PHY lanes compatible with Raspberry Pi HQ camera and RPi V2 camera

- Connectivity – Gigabit Ethernet, WiFi & Bluetooth via M.2 Key-E card (included)

- USB – 4x USB 3.1 ports, USB 2.0 Micro-B

- Expansion – Header with GPIOs, I2C, I2S, SPI, UART

- Power Supply – 9 to 19V DC via power barrel jack

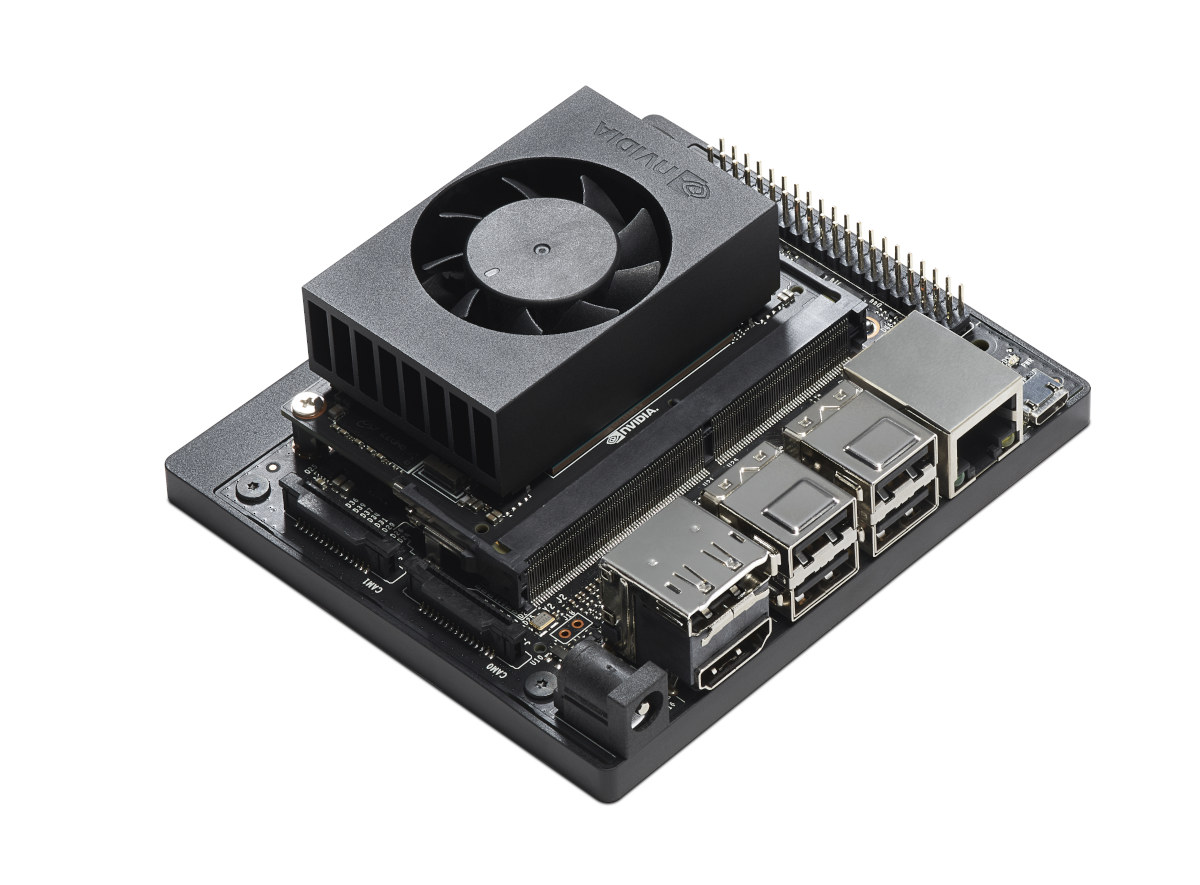

- Dimensions – 103 x 90.5 x 31 mm

The NVIDIA Jetson Xavier NX Developer Kit includes the carrier board, an NVIDIA Jetson Xavier NX module (without eMMC flash), a power adapter, a quick start guide, and a support guide. The board runs a customized version of Ubuntu Linux, and AI applications can be developed with the NVIDIA JetPack SDK.

Thanks to the magic of sponsored developer hardware (and the lack of eMMC flash), the Jetson Xavier NX Developer Kit is actually cheaper than the module itself, as it goes for $399 plus shipping on sites like Amazon, Seeed Studio, or NVIDIA’s own store.

NVIDIA Jetson Xavier NX Developer Kit vs Other Jetson Modules

One obvious change compared to the fanless Jetson Nano developer kit is that the new AI computer will ship with a fansink for cooling, because while the former supports 5W and 10W modes, the latter operates between 10W and 15W.

You’ll find a comparison between Jetson Nano, Jetson TX2, Xavier NX, and AGX Xavier in the table below courtesy of Seeed Studio.

| | Jetson Nano Developer Kit | Jetson TX2 Developer Kit | Jetson Xavier NX Developer Kit | Jetson AGX Xavier Developer Kit |
|---|---|---|---|---|
| AI Performance | 0.5 TFLOPS (FP16) | 1.3 TFLOPS (FP16) | 6 TFLOPS (FP16), 21 TOPS (INT8) | 5.5-11 TFLOPS (FP16), 20-32 TOPS (INT8) |
| GPU | 128-core NVIDIA Maxwell GPU | 256-core NVIDIA Pascal GPU architecture with 256 NVIDIA CUDA cores | NVIDIA Volta architecture with 384 NVIDIA CUDA cores and 48 Tensor cores | 512-Core Volta GPU with Tensor Cores |
| CPU | Quad-core ARM A57 @ 1.43 GHz | Dual-Core NVIDIA Denver 2 64-Bit CPU, Quad-Core ARM® Cortex®-A57 MPCore | 6-core NVIDIA Carmel ARM v8.2 64-bit CPU, 6 MB L2 + 4 MB L3 | 8-Core ARM v8.2 64-Bit CPU, 8 MB L2 + 4 MB L3 |
| Memory | 4 GB 64-bit LPDDR4 25.6 GB/s | 8GB 128-bit LPDDR4 1866 MHz – 59.7 GB/s | 8 GB 128-bit LPDDR4x @ 51.2GB/s | 32 GB 256-Bit LPDDR4x, 137 GB/s |
| Power Consumption | 5-10W | 7.5-15W | 10-15W | 10-30W |
| Price | $99 | $399 | $399 | $699 |
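The bandwidth figures in the Memory row can be sanity-checked with simple arithmetic. The sketch below assumes the usual double-data-rate relation (two transfers per clock cycle, bus width in bytes per transfer). Only the TX2 clock (1866 MHz) comes from the table; the clocks derived for the other kits are back-calculated from the listed bandwidth, not taken from NVIDIA documentation, and the function names are just for illustration.

```python
def peak_bandwidth_gbs(bus_width_bits: int, clock_mhz: float) -> float:
    """Theoretical peak bandwidth in GB/s, assuming double data rate."""
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = clock_mhz * 1e6 * 2  # DDR: two transfers per clock
    return bytes_per_transfer * transfers_per_second / 1e9


def implied_clock_mhz(bus_width_bits: int, bandwidth_gbs: float) -> float:
    """Inverse of the above: memory clock implied by a listed bandwidth."""
    bytes_per_transfer = bus_width_bits / 8
    return bandwidth_gbs * 1e9 / bytes_per_transfer / 2 / 1e6


if __name__ == "__main__":
    # Jetson TX2: 128-bit bus at 1866 MHz, both values from the table.
    print(f"TX2 peak: {peak_bandwidth_gbs(128, 1866):.1f} GB/s")
    # Xavier NX and Nano: clock is derived here, an assumption of this sketch.
    print(f"Xavier NX implied clock: {implied_clock_mhz(128, 51.2):.0f} MHz")
    print(f"Nano implied clock: {implied_clock_mhz(64, 25.6):.0f} MHz")
```

The TX2 result lands at about 59.7 GB/s, which matches the table, so the listed numbers look like theoretical peaks rather than measured throughput.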

The new developer kit is twelve times faster than Jetson Nano for AI workloads using FP16, and performance can further be improved using 8-bit integers.
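The "twelve times" figure follows directly from the FP16 numbers in the comparison table. Here is a minimal sketch of that arithmetic. The dictionary simply copies the table values, and AGX Xavier is left out because it is listed as a range rather than a single number. These are peak figures, so real-world speedups will depend on the workload.

```python
# Peak FP16 throughput in TFLOPS, copied from the comparison table.
FP16_TFLOPS = {
    "Jetson Nano": 0.5,
    "Jetson TX2": 1.3,
    "Jetson Xavier NX": 6.0,
}


def speedup(new: str, old: str) -> float:
    """Ratio of peak FP16 throughput between two developer kits."""
    return FP16_TFLOPS[new] / FP16_TFLOPS[old]


if __name__ == "__main__":
    for old in ("Jetson Nano", "Jetson TX2"):
        ratio = speedup("Jetson Xavier NX", old)
        print(f"Xavier NX vs {old}: {ratio:.1f}x")
```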

NVIDIA Cloud-Native Support

The second part of NVIDIA’s announcement was Cloud-Native support for all Jetson platforms. So what is it exactly? There’s a lot of close to meaningless marketing speak in the press release:

With support for cloud-native technologies now available across the NVIDIA Jetson lineup, manufacturers of intelligent machines and developers of AI applications can build and deploy high-quality, software-defined features on embedded and edge devices targeting robotics, smart cities, healthcare, industrial IoT and more.

Cloud-native support helps manufacturers and developers implement frequent improvements, improve accuracy, and use the latest features with Jetson-based AI edge devices. And developers can quickly deploy new algorithms throughout an application’s lifecycle, at scale, while minimizing downtime.

The way I understand it is that you should be able to deploy and update apps easily to Jetson hardware over the cloud, and it looks like there may be a partnership with Microsoft?:

Moe Tanabian, general manager of Azure Edge Devices at Microsoft, said: “The Jetson Xavier NX enables AI at the edge with powerful computing performance, while keeping the small form factor of the Jetson Nano. This makes it possible to deploy containerized Azure solutions with AI acceleration at scale for applications like processing multiple camera feeds, more sophisticated robotics applications and edge AI gateway scenarios.”

The press release later reads “the embedded and robotics world is about to transform from fixed-function software devices to modern, software-defined computers”… After searching a bit more, I found a GitHub repo for “Documentation for the NVIDIA cloud-native technologies”, and we get to learn some of what it does:

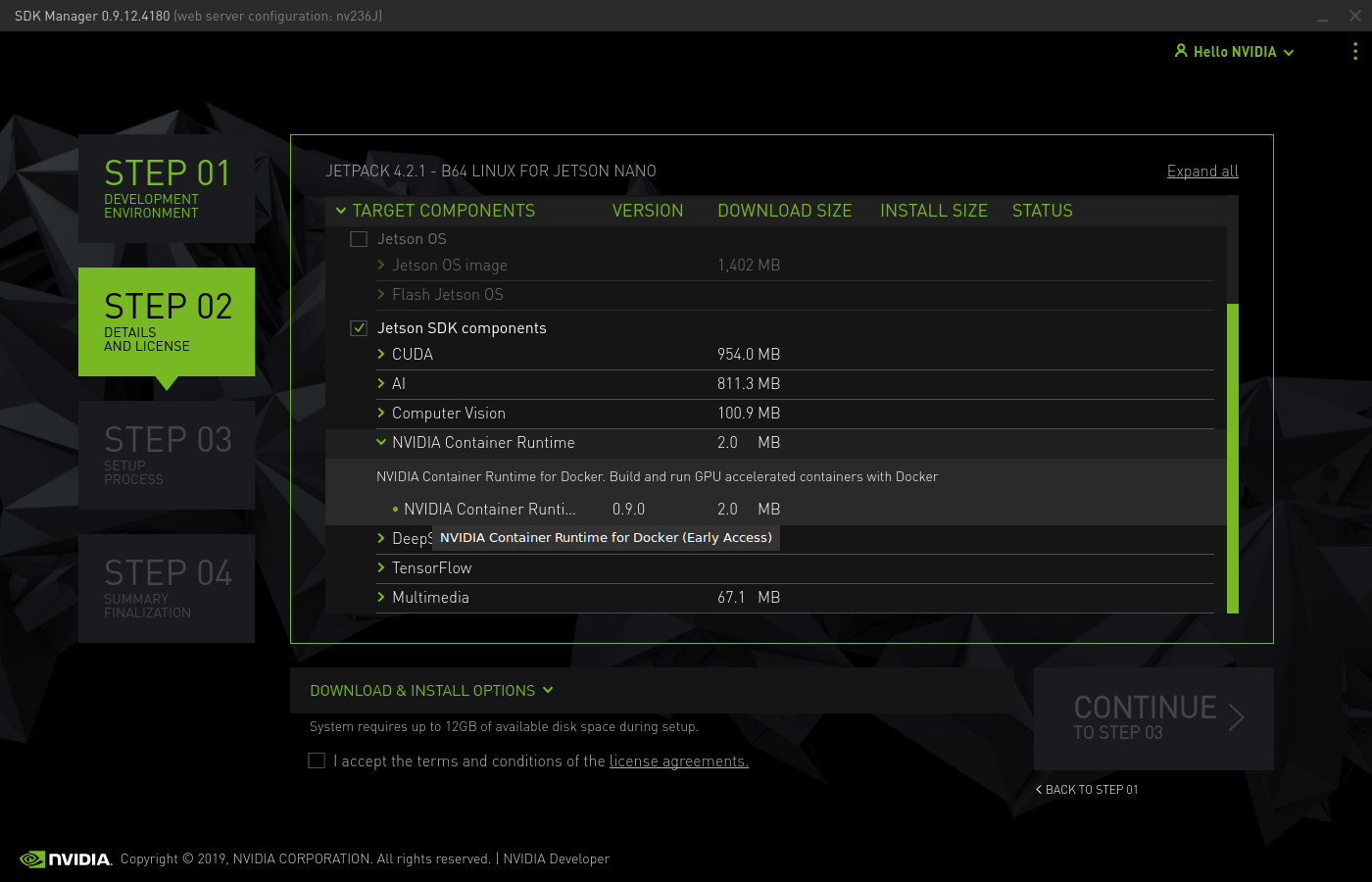

Starting with v4.2.1, NVIDIA JetPack includes a beta version of NVIDIA Container Runtime with Docker integration for the Jetson platform. This enables users to run GPU accelerated Deep Learning and HPC containers on Jetson devices

Some of the screenshots show they are indeed using Docker to deploy apps. So basically, the way I understand it, Cloud-Native means Docker container support is now built into the JetPack SDK instead of you having to handle it on your own…
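To make that a bit more concrete, here is a minimal sketch of what launching a GPU-enabled container on a Jetson board might look like. It only builds and prints the command rather than running it. The `--runtime nvidia` option is how Docker selects the NVIDIA Container Runtime, while the image name and tag (`nvcr.io/nvidia/l4t-base:r32.4.2`) and the helper function are assumptions for illustration, so check NVIDIA's container registry for the image matching your JetPack release.

```python
import shlex
from typing import List

# Assumed example image; pick the tag that matches your JetPack/L4T release.
EXAMPLE_IMAGE = "nvcr.io/nvidia/l4t-base:r32.4.2"


def jetson_docker_command(image: str, gpu: bool = True) -> List[str]:
    """Build a `docker run` command line (hypothetical helper, not NVIDIA's API)."""
    cmd = ["docker", "run", "-it", "--rm"]
    if gpu:
        # Ask Docker to use the NVIDIA Container Runtime shipped with JetPack.
        cmd += ["--runtime", "nvidia"]
    cmd.append(image)
    return cmd


if __name__ == "__main__":
    # Print the command so it can be reviewed before being run on the board.
    print(shlex.join(jetson_docker_command(EXAMPLE_IMAGE)))
```

On the board itself, the printed command would typically be run with `sudo` unless your user is in the `docker` group.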

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.

Why didn’t they declare the max core clock?

Xbox One X test: 6 TFLOPS of graphics power, equivalent to Jetson Xavier NX?

This is 6TFlops of AI Compute, not GPU TFlops

Is it possible to use “nvenc” with GeForce and recompress XMedia Recode/HandBrake videos (H.264 to H.265)?

I wonder what these are like for general computing tasks, that’s a lot of RAM and great storage/connectivity…