As demand for video encoding grows, hardware-accelerated video encoding on Linux is making notable progress. Bootlin has been working on Hantro H1 hardware-accelerated video encoding to support H.264 on Linux, following the company’s earlier work on the open-source VPU driver for Allwinner processors.

Hantro H1 Hardware

Hantro H1 is a common hardware H.264 encoder that can also encode VP8 and JPEG. It is found in several ARM SoCs, including many from Rockchip (RK3288, RK3328, RK3399, PX30, RK1808) and NXP (i.MX 8M Mini). Depending on the version, it supports up to 1080p at 30 or 60 fps.

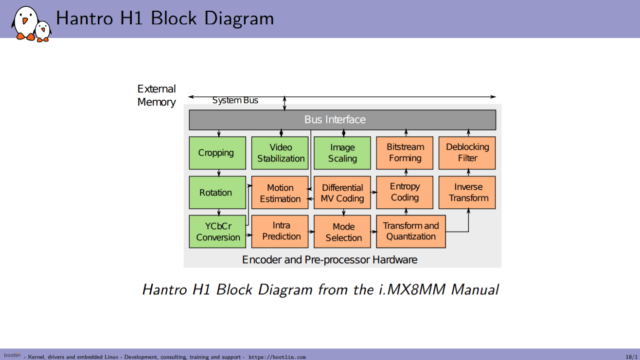

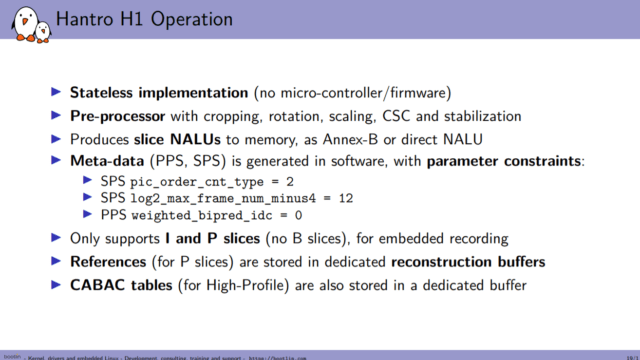

The diagrams above show the different blocks used for encoding. Hantro H1 is a stateless hardware implementation, which means it has no microcontroller or firmware running. It has a pre-processor that can handle cropping, rotation, scaling, stabilization, and color space conversion (CSC). It produces only slice NALUs, so the hardware does not generate meta-data. There are also constraints on specific parameters. For embedded encoding, it supports only I and P slices (no B slices). The references used by P slices need to be stored in specific reconstruction buffers. Hantro H1 also has internal rate control mechanisms.

H.264 Semantics

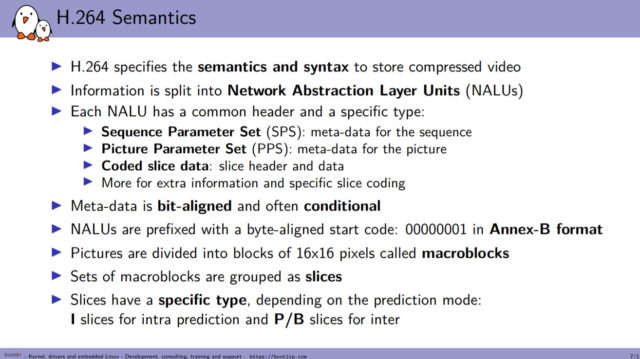

Some of the acronyms above may need explanation. An H.264 bitstream is made of specific units called Network Abstraction Layer Units (NALUs). Each has a header with a type that indicates what data the unit carries. Some NALUs carry meta-data, such as the Sequence Parameter Set (SPS), which holds meta-data for the whole sequence.

Meta-data is just a series of bits that must be interpreted according to the H.264 syntax. Similarly, there is a Picture Parameter Set (PPS) that holds meta-data at the picture level. Coded data, in turn, is carried as coded slice data. A specific format called Annex-B puts a start code prefix before each NALU, which makes it easier to find the start of the next one.
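To make the NALU structure concrete, here is a minimal Python sketch that splits an Annex-B byte stream on its start codes and reads the type from each NALU header. The function names and the sample bytes are made up for illustration and are not taken from Bootlin's code. It also skips details such as emulation prevention bytes.

```python
from typing import Iterator, Tuple

# Subset of nal_unit_type values from the H.264 specification.
NAL_TYPE_NAMES = {
    1: "coded slice (non-IDR)",
    5: "coded slice (IDR)",
    7: "SPS",
    8: "PPS",
}

START_CODE = b"\x00\x00\x01"  # a 4-byte start code is this with one extra leading zero


def split_annex_b(stream: bytes) -> Iterator[bytes]:
    """Yield each NALU (start code stripped) from an Annex-B byte stream."""
    starts = []
    pos = stream.find(START_CODE)
    while pos != -1:
        starts.append(pos)
        pos = stream.find(START_CODE, pos + len(START_CODE))
    for n, begin in enumerate(starts):
        end = starts[n + 1] if n + 1 < len(starts) else len(stream)
        # A NALU never ends with a zero byte, so trailing zeros belong to
        # the next (4-byte) start code or to padding and can be dropped.
        yield stream[begin + len(START_CODE):end].rstrip(b"\x00")


def parse_nal_header(nalu: bytes) -> Tuple[int, int]:
    """Return (nal_ref_idc, nal_unit_type) from the one-byte NALU header."""
    header = nalu[0]
    return (header >> 5) & 0x3, header & 0x1F


# Hypothetical sample: an SPS, a PPS and an IDR slice with dummy payloads.
sample = (
    b"\x00\x00\x00\x01\x67\x42\x00\x1f"
    b"\x00\x00\x00\x01\x68\xce\x3c\x80"
    b"\x00\x00\x01\x65\x88\x84"
)

for nalu in split_annex_b(sample):
    ref_idc, nal_type = parse_nal_header(nalu)
    print(nal_type, NAL_TYPE_NAMES.get(nal_type, "other"), len(nalu), "bytes")
```

Since the hardware only produces slice NALUs, a complete stream would also need SPS and PPS units from somewhere else, presumably software.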

Macroblocks are subdivisions of a picture into blocks of 16×16 pixels, and they are grouped into slices. The coded slice data in a NALU is therefore composed of data about macroblocks.
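As a quick worked example of the macroblock arithmetic, the Python sketch below counts the 16×16 macroblocks needed to cover a frame. The helper name is made up for illustration. The 1080p resolution and the 30 and 60 fps rates are the figures quoted for the Hantro H1 earlier in the article.

```python
MB_SIZE = 16  # macroblocks are 16x16 pixels


def macroblock_grid(width: int, height: int) -> tuple:
    """Return (columns, rows, total) of macroblocks needed to cover a frame."""
    # Round up: a partially covered block still needs a full macroblock.
    cols = (width + MB_SIZE - 1) // MB_SIZE
    rows = (height + MB_SIZE - 1) // MB_SIZE
    return cols, rows, cols * rows


cols, rows, total = macroblock_grid(1920, 1080)
print(cols, rows, total)  # 120 68 8160
for fps in (30, 60):
    print(fps, "fps:", total * fps, "macroblocks per second")
```

Since 1080 is not a multiple of 16, the last row of macroblocks is only partly filled with picture data. The coded picture is effectively 1088 lines tall and gets cropped back to 1080.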

V4L2 Stateless Encoding

Many parameters that control the encoding can be set for Hantro H1. Some of them need specific values for the bitstream to be valid. In the stateless case, the state is tracked by the V4L2 driver and userspace code. Bootlin uses the media request API, just as in stateless decoding, to tie the buffers and the parameters together. The reconstruction buffers must also be managed, as they need to be attached to the input buffers. In the stateless case, rate control can be implemented by the V4L2 driver and userspace, which read the feedback data themselves.
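To illustrate what rate control driven by feedback data could look like in userspace, here is a deliberately simple Python sketch. It is not the algorithm used by Bootlin's code or by the Hantro H1 hardware. The function name, thresholds and step sizes are all assumptions chosen for illustration. The only fact it relies on is that the H.264 quantization parameter (QP) ranges from 0 to 51, with higher values giving smaller frames at lower quality.

```python
QP_MIN, QP_MAX = 0, 51  # valid H.264 QP range for 8-bit video


def next_qp(qp: int, produced_bits: int, target_bits: int,
            tolerance: float = 0.1, max_step: int = 2) -> int:
    """Pick the QP for the next frame from the size of the last coded frame.

    Hypothetical controller: raise QP when the frame was too big, lower it
    when the frame was too small, and leave it alone within the tolerance.
    """
    error = (produced_bits - target_bits) / target_bits
    if abs(error) <= tolerance:
        return qp
    # Take a bigger step when the size is far off target (assumed threshold).
    step = max_step if abs(error) > 0.5 else 1
    qp += step if error > 0 else -step
    return max(QP_MIN, min(QP_MAX, qp))


# Example values only: 5 Mbps at 30 fps gives the per-frame bit budget.
target = 5_000_000 // 30
qp = 30
for produced in (300_000, 220_000, 170_000, 100_000):  # made-up feedback data
    qp = next_qp(qp, produced, target)
    print(produced, "bits ->", "next QP", qp)
```

A real controller would also need to treat I and P frames differently and smooth the budget over a group of pictures. The sketch only shows the feedback loop: encode, read back the size, adjust parameters for the next request.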

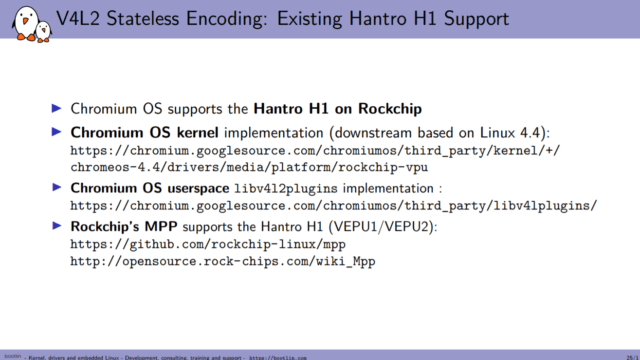

Some Hantro H1 code already exists. For example, Chromium OS uses it with a downstream kernel driver that is pretty much stateless. There’s also a user-space implementation, available as the libv412plugins implementation. Rockchip also has its own implementation for its downstream kernel, called MPP, which supports Hantro H1.

The approach taken to support this encoder was to start from the mainline hantro driver, which already supports decoding with Hantro G1. Bootlin then added H.264 encoding support for Hantro H1 to that driver. The kernel implementation and the userspace side are both available on GitHub.

Future Plans?

The first implementation has some issues and cannot be accepted as is, but it gives some ideas for working toward a proper interface for stateless encoders. Future work will also involve cleaning up the Hantro encoding code and bringing it upstream with a clean interface that might also benefit other hardware such as the Allwinner encoder, which is also stateless.

Source: All the images are taken from the Open Source Summit 2020 presentation slides by Paul Kocialkowski, Embedded Linux Engineer at Bootlin.

Abhishek Jadhav is an engineering student, RISC-V Ambassador, freelance tech writer, and leader of the Open Hardware Developer Community.

I supported the Allwinner video decode/encode support and I’d do it again in a heartbeat. But this one? No. Rockchip is big enough and NXP certainly is that they can pay for their own development.

As a separate issue, hardware video encode is horrible, just say no. As a means to capture video at a reasonable bit rate, sure, but as a means of transmission or long term storage? Oh, no, no, no.

And H.264 now? H.265 is being phased out. Why such an old encoder? What specific use do they see this benefiting? The millions of Tinkerboard video streamers?

I think getting the framework in place for this sort of hardware is worth the effort, even if H.264 is going out of fashion, to help vendors and others mainline similar encoders in the future.

If there isn’t a strong framework in place then you get every vendor implementing basically the same thing in twenty different ways because they don’t want to work on something shared just in case it helps a competitor.

Is this mostly about implementing the stateless API for encoding, then? Sort of like the Allwinner decoding effort was about implementing a stateless API for decoding?

>Is this mostly about implementing the stateless API for encoding, then?

The last few slides seem to suggest that. My impression was that drivers already exist for this thing but they need to get agreement on how it should work to get them into mainline.

H.264 has meanwhile become a kind of baseline codec, like MJPEG was for many years: reasonably reduced size and not much effort CPU-wise with today’s computing power. It is definitely the way to quickly encode an action cam’s stream, or a not-so-power-hungry solution on a drone, a PoE-powered webcam, a rearview cam, you name it. For many industrial use cases, H.264 is still good enough today.

But if you want to watch 5 simultaneous HDR UHD streams over a single BB link, or are a streaming operator, then H.264 won’t cut it anymore, for sure. But they won’t use RK or NXP SoCs…

h.264 is going to be with us for a very long time. At 1080P, h.265 is about the same as h.264, and h.265 costs a heck of a lot more in royalties. Most h.264 patents expire in 2027, which will make it free then. Maybe AV1 will replace h.264 sooner or later, but there are billions of devices out there right now that can’t do AV1. So at the moment, it is h.264 for everything except 4K, and that uses h.265 for the most part.

This work is not being done because NXP, Rockchip, Allwinner, etc. can’t pay for their own development. All of those companies have closed source drivers for their hardware. It is being done so that we have open source drivers for these hardware blocks. Open source is needed to prevent us from getting trapped on specific kernel releases due to inability to recompile the code. These companies keep the source code closed out of a misguided belief that they have secret technology in these IP blocks. That might have been true in 2003, but it certainly is not true today and we are long past the time when h.264 hardware support should have been open sourced.

Apologies for being off-topic, but this is one of the few places I have found where the Hantro H1 encoder is (vaguely) discussed.

Have any of you used the Hantro H1 for h.264 encoding?

I’m working on an IP camera based on the i.MX8MMini and find the quality of encoding from the H1 poor, to say the least. I’ve enabled CABAC + 8×8 transform, the only 2 features documented by NXP, which made a modest improvement over the default, but it’s still not amazing. Many artefacts are visible, especially around the text overlays inserted in the video.

Testing condition: 1080p30 video with text overlays, gop=15, bitrate=5Mbps, Qp=0 (auto). Cranking up the bitrate improves quality – as expected – but even at max bitrate (40Mbps), there are still obvious artefacts visible around the text overlays.