Hardkernel has just launched their ODROID-HC1 stackable NAS system based on a cost-down version of ODROID-XU4 board powered by Samsung Exynos 5422 octa-core Cortex-A15/A7 processor, which as previously expect, you can purchase for $49 on Hardkernel website, or distributors like Ameridroid.

We now have the complete specifications for ODROID-HC1 (Home Cloud One) platform:

We now have the complete specifications for ODROID-HC1 (Home Cloud One) platform:

- SoC – Samsung Exynos 5422 octa-core processor with 4x ARM Cortex-A15 @ 2.0 GHz, 4x ARM Cortex-A7 @ 1.4GHz, and Mali-T628 MP6 GPU supporting OpenGL ES 3.0 / 2.0 / 1.1 and OpenCL 1.1 Full profile

- System Memory – 2GB LPDDR3 RAM PoP @ 750 MHz

- Storage

- UHS-1 micro SD slot up to 128GB

- SATA interface via JMicron JMS578 USB 3.0 to SATA bridge chipset capable of achieving ~300 MB/s transfer rates

- The case supports 2.5″ drives between 7mm and 15mm thick

- Network Connectivity – 10/100/1000Mbps Ethernet (via USB 3.0)

- USB – 1x USB 2.0 port

- Debugging – Serial console header

- Misc – Power, status, and SATA LEDs;

- Power Supply

- 5V via 5.5/2.1mm power barrel (5V/4A power supply recommended)

- 12V unpopulated header (currently unused)

- Backup header for RTC battery

- Dimensions – 147 x85 x 29 mm (Aluminum case also serving as heatsink)

- weight – 229 grams

The company provides Ubuntu 16.04.2 with Linux 4.9, and OpenCL support for the board, the same image as ODROID-XU4, but there are also community supported Linux distributions including Debian, DietPi, Arch Liux ARM, OMV, Armbian, and others, which can all be found in the Wiki.

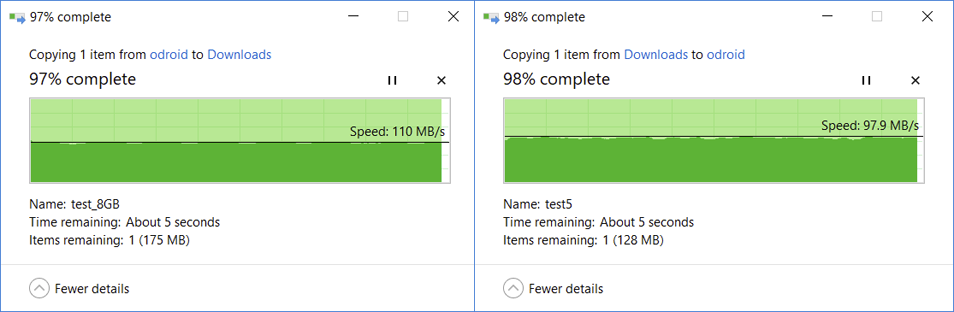

Based on Hardkernel’s own tests, you should be able to max out the Gigabit Ethernet bandwidth while transferring a files over SAMBA in either directions. tkaiser, an active member of Armbian, also got a sample, and reported that heat dissipation worked well, and that overall Hardkernel had a done a very good job.

While power consumption of the system is usually 5 to 10 Watts, it may jump to 20 Watts under heavy load with USB devices attached, so a 5V/4A power supply is recommended with the SATA drive only, and 5V/6A if you are also going to connect power hungry devices to the USB 2.0 port. The company plans to manufacture ODROID-HC1 for at least three years (until mid 2020), but expects to continue production long after, as long as parts are available.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.

Support CNX Software! Donate via cryptocurrencies, become a Patron on Patreon, or purchase goods on Amazon or Aliexpress

Just a quick note. To benefit from HC1’s design (excellent heat dissipation, no USB3 contact/cable/powering hassles any more) settings and kernel version are important (to make use of ‘USB Attached SCSI’ (UAS) Hardkernel’s 4.9 LTS or mainline Linux kernel is necessary). I did a quick comparison of two Debian Jessie variants obviously following different philosophies using their respective defaults with an older 2.5″ 7.2k Hitachi HDD testing on the fastest partition: – 3.10.105 kernel: https://pastebin.com/VWicDntJ – 4.9.37 kernel + optimizations: https://pastebin.com/0eWy00bY The 2nd image is my test image I currently explore whether limiting the 4 big cores on the Exynos… Read more »

@tkaiser So tkaiser, you would suggest to use this solution for a DIY NAS, to at least a bit increase the safety of the data. For example such setup – 1 usb3 disk and 1 usb2 disk connected to the HC1, the usb3 disk is primary and used also for streaming while the usb2 is only meant for regular daily backups of usb3 disk….So data would then be on personal computers and on both usb3 and usb2 disks on the HC1, thus a bit safer if the disks in the personal computers die. Could also other software be run on… Read more »

Since I realized that the OS image I chose for testing not only uses an outdated (and UAS incapable kernel) but also cpufreq scaling settings I don’t really understand (interactive governor and allowing the CPU cores to clock down to 200 MHz), I re-tested with both OMV/Armbian (4.9.37 kernel, this time big cores allowed to clock up to 2 GHz as it’s the real default) and DietPi (3.10.105) this time with a rather fast SSD (Samsung EVO840). Difference to before: I switched in a 2nd round from distro defaults to performance cpufreq governor (something I wouldn’t recommend with XU4 for… Read more »

@Mark ODROID-XU4 is a performance beast and the HC1 here is just the same minus display output and GPIO headers (no one uses anyway since 1.8V) and also fortunately missing USB3/underpowering hassles. In other words: You have reliable storage access here and can run plenty of other stuff in parallel to pure NAS/OMV use cases (a single client pushing/fetching NAS data through SMB/AFP/NFS keeps one big core busy and 3 little cores at around ~50%, so you have 3 beefy big cores left for other tasks and can let the little cores do background stuff as well — you might… Read more »

What configuration/filesystem would you use to have redundancy with two or three of these NAS?

@Mark I forgot to mention that HC1 has the potential to run much much more heavy stuff on the CPU (or maybe the GPU as well utilizing OpenCL) since the huge heatsink works really well and can be combined with an efficient large but also slow/silent fan if really needed (I really hate XU4’s noisy fansink but unfortunately the quite XU4Q version has been announced just a week after I purchased XU4 some months ago). BTW: with really heavy stuff (eg. cpuminer on all CPU cores which uses NEON optimizations) the heatsink + huge fan is not enough at least… Read more »

@DurandA

I’m gonna pre-empt tkaiser’s answer here: No ECC? Then it can’t be trusted with your data. ( 😉 )

If you want to ignore that though maybe Gluster would do? Just don’t expect it to be bulletproof. ZFS can do all the checksumming it likes but if it’s being accessed through some dodgy RAM there’s nothing you can do.

*edit* Or you just have one unit as a front man and rsync between it and the other two which act as backups. That’s a bare minimum but simple to set up.

Are all the drivers for this board in the upstream kernel, or is Hardkernel maintaining it’s own 4.9 fork? It sounds like drivers have been upstreamed, but hardkernel only seem to talk about their 4.9 LTS kernel.

@CampGareth For all those following naïve optimism thoughts like ‘if I put a one into my DRAM then it’s save to assume that I get back a one and not a zero‘ reading through all three pages of this explanation why (on-die) ECC soon becomes a necessity for mobile DRAM might be enlightening or frightening based on perspective/experiences 😉 @camh Hardkernel maintains an own 4.9 branch which receives regular updates from upstream 4.9 LTS where they can fix intermediate issues soon on their own if needed (eg. a while ago they discovered an issue with coherent-pool memory size too small… Read more »

Hello Tkaiser,

Could you please confirm the power consumption for idle & disk powered-off with your optimized image?

The article contains consumption values 5-10watts, if your optimized image should lower the maximum for 3 watts, does it means 5-7 watts (in idle)?

Or should we take some more variables taken into account and the final consumption is different?

@tkaiser

Great news, gonna soon order it then :)…Yeah its a bit over my head the more complicated and better ways, but still nice to know, thanks for the awesome reply tkaiser!

CampGareth now frightened me a bit but I’m gonna try to ignore that :).

@tkaiser Am I missing something? It seems to me that article doesn’t have anything on reliability. What it’s saying is that if you gain ECC you can further abuse your RAM to save power, just reduce its voltage, and the speed of refresh cycles, and its node size. Make it less reliable then correct for the newly introduced unreliability. A similar approach is used in mobile radios since high power broadcasting takes a lot of power but a few million transistors dedicated to error correction take far less. Still the more I read the more I tend to agree with… Read more »

blackie : The article contains consumption values 5-10watts, if your optimized image should lower the maximum for 3 watts, does it means 5-7 watts (in idle)? Nope. My specific use case is NAS and I’m investigating whether it’s worth to let the A15 CPU cores clock up to 2.0 GHz which wastes a lot more energy — see below — or only allow them to clock as high as 1.4GHz when performance is the same. My number of 3W less is based on that (full NAS performance comparing big cores at 2 / 1.4GHz). Idle consumption is not affected here… Read more »

@CampGareth Oh, that was already two years ago on CNX: https://www.cnx-software.com/2015/12/14/qnap-tas-168-and-tas-268-are-nas-running-android-and-linux-qts-on-realtek-rtd1195-processor/#comment-520316 (check especially the links there). The more processes and structures shrink the more we are away from something ‘digital’ and the more such error detection and correction stuff has to happen. On-die ECC as it’s optional with LPDDR4 is one logical requirement for further process shrink but it should be pointed out why this is different from ECC DIMMs. The latter allow to read out bit flips happening so you can act on accordingly (that’s what monitoring is for — in the beginning I configured SNMP traps and pager… Read more »

HC2 arrived and works as expected: https://forum.armbian.com/topic/4983-odroid-hc1-hc2/?do=findComment&comment=45690

Fully software compatible so all available OS images will work out of the box. ‘Performance’ tests useless since only depending on the disk used. With SSDs (the enclosure is also prepared for 2.5″) and OS images that use good settings ~400 MB/s sequential performance is possible while random IO performance is limited due to USB3 SATA here. But with spinning rust only the HDD in question will define performance, the JMS578 used here as USB-to-SATA bridge is no bottleneck at all.