H.265 promises the same video quality as H.264 at half the bitrate, so you may have thought about converting your H.264 videos to H.265/HEVC in order to reduce the space they use. However, if you’ve ever tried transcoding videos with tools such as HandBrake, you’ll know the process can be painfully slow, and a single movie may take several hours even on a machine with a powerful processor. Fortunately, there’s a better and faster solution thanks to the hardware accelerated encoding available in some Intel and Nvidia graphics cards. For this purpose, GearBest sent me a Maxsun MS-GTX960 graphics card, based on a second generation Maxwell GPU, which supports H.265 accelerated video encoding and promises up to 500 fps video encoding. So I’ve put the graphics card to the test in a computer running Ubuntu 14.04, and report some of my findings here. Similar instructions can also be followed in Windows.

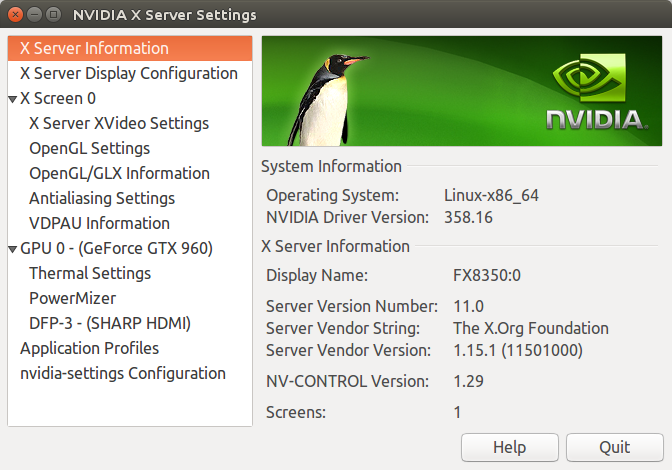

In order to leverage Nvidia Maxwell 2 GPU capabilities you’ll need to download and install Nvidia Video Codec SDK. The latest version (6.0.1) requires Nvidia Drivers 358.xx or greater, and my system had version 352.xx, so I followed some instructions to install the latest drivers in Ubuntu 14.04.

```
sudo add-apt-repository ppa:graphics-drivers/ppa
sudo apt-get update
sudo apt-get install nvidia-358 nvidia-settings
```

Upon restart I had the latest 358.16 drivers installed.
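Since the SDK needs driver 358.xx or greater, it can be worth confirming the installed version from a terminal before going further. The snippet below is only a minimal sketch and was not part of my original setup: `driver_at_least` is a hypothetical helper name, and the check assumes `nvidia-smi` is in the PATH.

```shell
#!/bin/sh
# Hypothetical helper: succeeds when the major part of a driver version
# string (e.g. "358.16") is at least the required major version.
driver_at_least() {
    major=${1%%.*}
    [ "$major" -ge "$2" ]
}

# Only query the driver when nvidia-smi is actually installed.
if command -v nvidia-smi >/dev/null 2>&1; then
    version=$(nvidia-smi --query-gpu=driver_version --format=csv,noheader | head -n 1)
    if driver_at_least "$version" 358; then
        echo "Driver $version is recent enough for SDK 6.0.1"
    else
        echo "Driver $version is too old; 358.xx or later is required"
    fi
fi
```

Only the major version number is compared, which is enough for a "358.xx or greater" requirement.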

Somehow the fonts were very small right after installation as xorg.conf was missing, so I recreated it with the command:

```
sudo nvidia-xconfig --no-use-edid-dpi
```

Then I adjusted the font sizes further with Unity Tweak Tool.

The next step is to download and extract nvidia_video_sdk_6.0.1.zip into a working directory:

```
unzip nvidia_video_sdk_6.0.1.zip
cd nvidia_video_sdk_6.0.1
```

The instructions in the Readme simply tell you to go to Samples directory, and type make in order to build the samples, but I had to do a few more steps:

```
sudo apt-get install libxmu-dev freeglut3 freeglut3-dev
export LDFLAGS="-L /usr/lib/nvidia-358/"
```

I also had to modify Samples/NvTranscoder/Makefile to replace := with += in front of LDFLAGS, so that the LDFLAGS exported above is extended rather than overwritten:

```
ifeq ($(OS_SIZE),32)
    LDFLAGS += -L/usr/lib64 -lnvidia-encode -ldl -lpthread
    CCFLAGS := -m32
else
    LDFLAGS += -L/usr/lib64 -lnvidia-encode -ldl -lpthread
    CCFLAGS := -m64
endif
```

and finally I could successfully build the samples:

```
cd Samples
make
```

There are several samples in the SDK: NvEncoder, NvEncoderCudaInterop, NvEncoderD3DInterop, NvEncoderLowLatency, NvEncoderPerf, NvTranscoder, NvDecodeD3D9, and NvDecodeGL. For the purpose of this post I used NvTranscoder to convert H.264 video to H.265 using the GPU.

At first I had some issues with the error:

```
cuInit(0, __CUDA_API_VERSION, hHandleDriver) has returned CUDA error 999
```

I followed a workaround provided on Blender, and it did not work at first, but after using NvTranscoder with sudo once, I could use the tool as a normal user thereafter.

Here’s the output to transcode a H.264 1080p video with High Quality preset.

```
time ./NvTranscoder -i h264_1080p_sample.m4v -o h265_1080p_sample.ts -codec 1 -preset hq
Encoding input           : "h264_1080p_sample.m4v"
         output          : "h265_1080p_sample.ts"
         codec           : "HEVC"
         size            : 1920x1088
         bitrate         : 5000000 bits/sec
         vbvMaxBitrate   : 0 bits/sec
         vbvSize         : 0 bits
         fps             : 90000 frames/sec
         rcMode          : CONSTQP
         goplength       : INFINITE GOP
         B frames        : 0
         QP              : 28
         preset          : HQ_PRESET

Total time: 31314.338000ms, Decoded Frames: 4901, Encoded Frames: 4901, Average FPS: 156.509775

real    0m31.959s
user    0m33.667s
sys     0m1.429s
```

The video lasts 2 minutes 43 seconds (4901 frames in total), and encoding was done in about 32 seconds meaning about 5 times faster than real-time, and at 156.5 fps on average.

I repeated the same test with the High Performance preset.

```
time ./NvTranscoder -i h264_1080p_sample.m4v -o h265_1080p_sample_fast.ts -codec 1 -preset hp
Encoding input           : "h264_1080p_sample.m4v"
         output          : "h265_1080p_sample_fast.ts"
         codec           : "HEVC"
         size            : 1920x1088
         bitrate         : 5000000 bits/sec
         vbvMaxBitrate   : 0 bits/sec
         vbvSize         : 0 bits
         fps             : 90000 frames/sec
         rcMode          : CONSTQP
         goplength       : INFINITE GOP
         B frames        : 0
         QP              : 28
         preset          : HP_PRESET

Total time: 23886.508000ms, Decoded Frames: 4901, Encoded Frames: 4901, Average FPS: 205.178589

real    0m24.433s
user    0m26.159s
sys     0m1.104s
```

Transcoding took around 24 seconds at 205 fps. It looked pretty good, but I tried the same test with HandBrake using H.265 with RF quality set to 25, and it took 4 minutes and 30 seconds to encode the video, or roughly 8 to 11 times slower than with the GPU depending on the preset. For reference, my computer is based on an AMD FX8350 octa-core processor clocked at 4.0 GHz.
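The speed ratios quoted in this section can be rechecked with a few lines of shell. This is just a sketch of the arithmetic using the timings reported above; `ratio` is a hypothetical helper name, and the clip length is rounded to 163 seconds.

```shell
#!/bin/sh
# Hypothetical helper: prints how many times larger the first duration is
# than the second, rounded to one decimal place.
ratio() {
    awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", a / b }'
}

# Durations in seconds, taken from the measurements above.
clip=163           # 2 min 43 s of video
handbrake=270      # 4 min 30 s with HandBrake (software H.265)
nvenc_hq=31.959    # "real" time with -preset hq
nvenc_hp=24.433    # "real" time with -preset hp

echo "HQ preset vs real-time: $(ratio "$clip" "$nvenc_hq")x"
echo "HP preset vs real-time: $(ratio "$clip" "$nvenc_hp")x"
echo "HandBrake vs HQ preset: $(ratio "$handbrake" "$nvenc_hq")x"
echo "HandBrake vs HP preset: $(ratio "$handbrake" "$nvenc_hp")x"
```

With these numbers, the GPU runs at roughly 5 and 7 times real-time for the two presets, and HandBrake is about 8 and 11 times slower. Keep in mind that the HandBrake and NvTranscoder runs did not use identical quality settings, so the comparison is only indicative.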

But then I tried to play the videos, and could not find any tool able to play them, as NvTranscoder appears to generate raw H.265 video data. I did not want to write my own little program, and found that ffmpeg also supports nvenc, just not by default, so you have to compile it yourself.

There are instructions to build ffmpeg with nvenc in Ubuntu 15.10, but they did not work on Ubuntu 14.04 so I mixed those with ffmpeg Ubuntu compilation guide to build it for my computer.

First we’ll need to install some dependencies and create a working directory:

```
sudo apt-get -y --force-yes install autoconf automake build-essential libass-dev libfreetype6-dev \
  libsdl1.2-dev libtheora-dev libtool libva-dev libvdpau-dev libvorbis-dev libxcb1-dev libxcb-shm0-dev \
  libxcb-xfixes0-dev pkg-config texinfo zlib1g-dev yasm
mkdir ffmpeg_sources
```

You’ll also need to download and install/compile some extra packages depending on the codecs we want to enable. I’ll skip H.264 and H.265 since this will be handled by Nvidia GPU instead, and will enable AAC and MP3 audio encoders, VP8/VP9 and XviD video decoders and encoders, and libopus decoder and encoder as explained in the building guide:

```
cd ffmpeg_sources
wget -O fdk-aac.tar.gz https://github.com/mstorsjo/fdk-aac/tarball/master
tar xzvf fdk-aac.tar.gz
cd mstorsjo-fdk-aac*
autoreconf -fiv
./configure --prefix="$HOME/ffmpeg_build" --disable-shared
make
make install
make distclean
cd ..
sudo apt-get install libmp3lame-dev
wget http://storage.googleapis.com/downloads.webmproject.org/releases/webm/libvpx-1.5.0.tar.bz2
tar xjvf libvpx-1.5.0.tar.bz2
cd libvpx-1.5.0
PATH="$HOME/bin:$PATH" ./configure --prefix="$HOME/ffmpeg_build" --disable-examples --disable-unit-tests
PATH="$HOME/bin:$PATH" make
make install
make clean
cd ..
sudo apt-get install libxvidcore-dev
sudo apt-get install libopus-dev
```

Now I’ll download and extract ffmpeg snapshot (January 3, 2016) and copy the required NVENC 6.0 SDK header files into /usr/local/include:

```
wget http://ffmpeg.org/releases/ffmpeg-snapshot.tar.bz2
tar xjvf ffmpeg-snapshot.tar.bz2
sudo cp ../nvidia_video_sdk_6.0.1/Samples/common/inc/*.h /usr/local/include/
```

The next step is to configure and build ffmpeg with nvenc enabled:

```
cd ffmpeg
PATH="$HOME/bin:$PATH" PKG_CONFIG_PATH="$HOME/ffmpeg_build/lib/pkgconfig" ./configure \
  --prefix="$HOME/ffmpeg_build" \
  --pkg-config-flags="--static" \
  --extra-cflags="-I$HOME/ffmpeg_build/include" \
  --extra-ldflags="-L$HOME/ffmpeg_build/lib" \
  --bindir="$HOME/bin" \
  --enable-gpl \
  --enable-libass \
  --enable-libfdk-aac \
  --enable-libfreetype \
  --enable-libmp3lame \
  --enable-libopus \
  --enable-libtheora \
  --enable-libvorbis \
  --enable-libvpx \
  --enable-nvenc \
  --enable-libxvid \
  --enable-nonfree
make -j9
```
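If configure complains that it cannot find nvenc, one thing worth checking is whether the SDK headers were really copied to /usr/local/include. The sketch below assumes the header in question is `nvEncodeAPI.h` (the NVENC API header shipped in the SDK's Samples/common/inc directory); `have_nvenc_header` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if nvEncodeAPI.h exists in the given
# include directory (defaults to /usr/local/include, as used above).
have_nvenc_header() {
    [ -f "${1:-/usr/local/include}/nvEncodeAPI.h" ]
}

if have_nvenc_header; then
    echo "nvEncodeAPI.h found"
else
    echo "nvEncodeAPI.h missing: copy the SDK headers before running configure"
fi
```

Passing a directory as the first argument checks another location, which may help if you prefer to keep the headers outside /usr/local/include and point configure at them with --extra-cflags.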

You can also optionally install it (which I did):

```
sudo make install
```

This will install it in $HOME/bin/ffmpeg. Now we can verify nvenc support for H.264 and H.265 is enabled:

```
ffmpeg -codecs | grep nvenc
ffmpeg version N-77671-g97c162a Copyright (c) 2000-2016 the FFmpeg developers
  built with gcc 4.8 (Ubuntu 4.8.4-2ubuntu1~14.04)
  configuration: --prefix=/home/jaufranc/ffmpeg_build --pkg-config-flags=--static --extra-cflags=-I/home/jaufranc/ffmpeg_build/include --extra-ldflags=-L/home/jaufranc/ffmpeg_build/lib --bindir=/home/jaufranc/bin --enable-gpl --enable-libass --enable-libfdk-aac --enable-libfreetype --enable-libmp3lame --enable-libopus --enable-libtheora --enable-libvorbis --enable-libvpx --enable-nvenc --enable-nonfree --enable-libxvid
  libavutil      55. 12.100 / 55. 12.100
  libavcodec     57. 21.100 / 57. 21.100
  libavformat    57. 21.100 / 57. 21.100
  libavdevice    57.  0.100 / 57.  0.100
  libavfilter     6. 23.100 /  6. 23.100
  libswscale      4.  0.100 /  4.  0.100
  libswresample   2.  0.101 /  2.  0.101
  libpostproc    54.  0.100 / 54.  0.100
 DEV.LS h264                 H.264 / AVC / MPEG-4 AVC / MPEG-4 part 10 (decoders: h264 h264_vdpau ) (encoders: nvenc nvenc_h264 )
 DEV.L. hevc                 H.265 / HEVC (High Efficiency Video Coding) (encoders: nvenc_hevc )
```
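The same check can be scripted, for example to run before a batch job. This is a small sketch rather than anything official: `has_encoder` is a hypothetical helper that scans the output of `ffmpeg -encoders`, where the encoder name is the second column. The names checked are the ones from this build; later ffmpeg releases call them h264_nvenc and hevc_nvenc, as seen in the comments.

```shell
#!/bin/sh
# Hypothetical helper: reads `ffmpeg -encoders` style output on stdin and
# succeeds if an encoder with the given name is listed.
has_encoder() {
    awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Only run the check when an ffmpeg binary is in the PATH.
if command -v ffmpeg >/dev/null 2>&1; then
    for enc in nvenc_h264 nvenc_hevc; do
        if ffmpeg -hide_banner -encoders 2>/dev/null | has_encoder "$enc"; then
            echo "$enc: available"
        else
            echo "$enc: missing"
        fi
    done
fi
```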

Perfect. Time for a test with our 1080p H.264 video sample, and encoding at 2000 kbps.

```
time ffmpeg -i h264_1080p_sample.m4v -vcodec nvenc_hevc -b:v 2000k h265_1080p_sample.mkv
ffmpeg version N-77671-g97c162a Copyright (c) 2000-2016 the FFmpeg developers
  built with gcc 4.8 (Ubuntu 4.8.4-2ubuntu1~14.04)
  configuration: --prefix=/home/jaufranc/ffmpeg_build --pkg-config-flags=--static --extra-cflags=-I/home/jaufranc/ffmpeg_build/include --extra-ldflags=-L/home/jaufranc/ffmpeg_build/lib --bindir=/home/jaufranc/bin --enable-gpl --enable-libass --enable-libfdk-aac --enable-libfreetype --enable-libmp3lame --enable-libopus --enable-libtheora --enable-libvorbis --enable-libvpx --enable-nvenc --enable-nonfree --enable-libxvid
  libavutil      55. 12.100 / 55. 12.100
  libavcodec     57. 21.100 / 57. 21.100
  libavformat    57. 21.100 / 57. 21.100
  libavdevice    57.  0.100 / 57.  0.100
  libavfilter     6. 23.100 /  6. 23.100
  libswscale      4.  0.100 /  4.  0.100
  libswresample   2.  0.101 /  2.  0.101
  libpostproc    54.  0.100 / 54.  0.100
Input #0, mov,mp4,m4a,3gp,3g2,mj2, from 'h264_1080p_sample.m4v':
  Metadata:
    major_brand     : mp42
    minor_version   : 512
    compatible_brands: isomiso2avc1mp41
    creation_time   : 2015-12-29 10:35:15
    title           : MVI_0820
    encoder         : HandBrake 7412svn 2015082501
  Duration: 00:02:43.53, start: 0.000000, bitrate: 5870 kb/s
    Stream #0:0(und): Video: h264 (Main) (avc1 / 0x31637661), yuv420p(tv, bt709), 1920x1080 [SAR 1:1 DAR 16:9], 5703 kb/s, 29.97 fps, 29.97 tbr, 90k tbn, 180k tbc (default)
    Metadata:
      creation_time   : 2015-12-29 10:35:15
      handler_name    : VideoHandler
    Stream #0:1(eng): Audio: aac (LC) (mp4a / 0x6134706D), 48000 Hz, stereo, fltp, 160 kb/s (default)
    Metadata:
      creation_time   : 2015-12-29 10:35:15
      handler_name    : Stereo
Output #0, matroska, to 'h265_1080p_sample.mkv':
  Metadata:
    major_brand     : mp42
    minor_version   : 512
    compatible_brands: isomiso2avc1mp41
    title           : MVI_0820
    encoder         : Lavf57.21.100
    Stream #0:0(und): Video: hevc (nvenc_hevc) (Main), yuv420p, 1920x1080 [SAR 1:1 DAR 16:9], q=-1--1, 2000 kb/s, 29.97 fps, 1k tbn, 29.97 tbc (default)
    Metadata:
      creation_time   : 2015-12-29 10:35:15
      handler_name    : VideoHandler
      encoder         : Lavc57.21.100 nvenc_hevc
    Side data:
      unknown side data type 10 (24 bytes)
    Stream #0:1(eng): Audio: vorbis (libvorbis) (oV[0][0] / 0x566F), 48000 Hz, stereo, fltp (default)
    Metadata:
      creation_time   : 2015-12-29 10:35:15
      handler_name    : Stereo
      encoder         : Lavc57.21.100 libvorbis
Stream mapping:
  Stream #0:0 -> #0:0 (h264 (native) -> hevc (nvenc_hevc))
  Stream #0:1 -> #0:1 (aac (native) -> vorbis (libvorbis))
Press [q] to stop, [?] for help
....
video:39825kB audio:2327kB subtitle:0kB other streams:0kB global headers:4kB muxing overhead: 0.295402%

real    0m30.338s
user    1m19.296s
sys     0m1.555s
```

It took 30 seconds, or about the same time as with NvTranscoder, but this time I had a watchable video with audio, and I could not notice any visual quality degradation.

I repeated the test with a H.264 1080p movie lasting 1 hour 57 minutes 29 seconds. The movie H.264 stream was encoded at 2150 kbps, so to decrease the file size by half I encoded the movie at 1075 kbps (-b:v 1075k option). The encoding only took 13 minutes and 12 seconds, or about 9 times faster than real-time, at 218 fps.

I also checked some GPU details during the transcoding:

```
nvidia-smi
Mon Jan  4 11:57:40 2016
+------------------------------------------------------+
| NVIDIA-SMI 358.16     Driver Version: 358.16         |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|===============================+======================+======================|
|   0  GeForce GTX 960     Off  | 0000:01:00.0      On |                  N/A |
| 42%   38C    P2    33W / 120W |    658MiB /  2047MiB |     10%      Default |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                       GPU Memory |
|  GPU       PID  Type  Process name                               Usage      |
|=============================================================================|
|    0      1705    G   /usr/bin/X                                     265MiB |
|    0      2582    G   compiz                                         117MiB |
|    0     10267    G   /usr/lib/firefox/plugin-container               22MiB |
|    0     22818    G   totem                                           35MiB |
|    0     22917    C   ffmpeg                                         200MiB |
+-----------------------------------------------------------------------------+
```

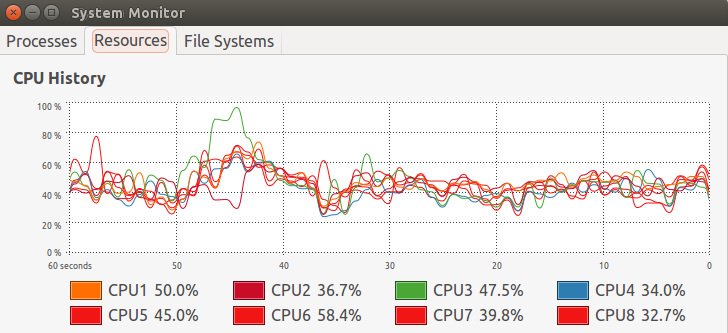

This shows for example that transcoding does not max out the GPU power consumption (P2 mode: 33 Watts). My processor load was however a bit higher than expected, although not at 100% all the time as would have been the case for software video transcoding.

Besides saving time, transcoding videos with a GPU should also reduce your electricity bill. How much exactly will depend on your video library size, electricity rate, and overall computer power consumption.

While the original file size was 2.0GB, the H.265 video was only 985 MB large, and video quality appeared to be very close to the one of the original video.
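Those file sizes are in line with what the video bitrates predict, since a stream's size is simply bitrate multiplied by duration. The following is a back-of-the-envelope sketch with a hypothetical `stream_size_mb` helper; it only covers the video stream and uses 1 MB = 1000 kB.

```shell
#!/bin/sh
# Hypothetical helper: estimated stream size in MB (1 MB = 1000 kB) for a
# given bitrate in kbit/s and a duration in seconds.
stream_size_mb() {
    awk -v kbps="$1" -v secs="$2" 'BEGIN { printf "%.0f\n", kbps * secs / 8 / 1000 }'
}

duration=$((1 * 3600 + 57 * 60 + 29))   # 1 h 57 min 29 s
echo "H.264 video at 2150 kbps: $(stream_size_mb 2150 "$duration") MB"
echo "H.265 video at 1075 kbps: $(stream_size_mb 1075 "$duration") MB"
```

The video streams alone come to about 1.9 GB and 0.95 GB respectively. My assumption is that the remainder of the 2.0 GB and 985 MB files is the audio track plus container overhead.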

Finally, I transcoded a 4K H.264 video @ 30 fps (big_buck_bunny_4k_H264_30fps.mp4) at slightly less than half the bitrate (3500 kbps for H.265 vs 7480 kbps for H.264), and it took 6 minutes and 56 seconds to encode the 10 minutes 30 seconds video. While checking quality, the main problem was that my computer struggled to cope with the H.265 4K video when using the Totem and VLC video players, with lots of artifacts at times and sound cuts, but the videos played just fine with ffplay and Kodi.

I’d like to thank GearBest for providing the Maxsun MS-GTX960 graphics card, which sells for $240.04 on their website.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager, and starting to write daily news, and reviews full time later in 2011.


a little bit disappointed with the HEVC encoding speed for a GPU encoder, but we can’t complain much for the moment: if we had done it on the CPU, it would have taken ages

otherwise I would like to see how the nvidia encoder stands against android HW accelerated encoding

@natsu

Which available ARM SoC supports H.265 encoding?

HELIO X10 (xiaomi redmi note 3, letv s1…) is supporting it (4k@30fps HEVC encoding) and maybe some snapdragons but I’m not sure, the real problems are the drivers and software support

And.. for AMD????????????? 🙁

If the encoder generates raw video, ffmpeg or avconv should be able to insert that data stream into a container of your choice (probably mp4) without any trouble, and without having to recompile. Or there should be plenty of command lines tools to mux/demux data streams into a container.

H.265 might promise the same video quality as H.264 when using half the bitrate, but it doesn’t promise transcoding from H.264 keeps the same quality, doesn’t it?

@Dego

I could not find info about HEVC encode with AMD Graphics.

The latest Polaris GPU will support it though: http://www.phoronix.com/scan.php?page=news_item&px=Radeon-Polaris-Details

@G

Yes, that’s correct that there should be visual quality loss each time you re-encode the video. But the video quality still looked about the same after my tests.

Hi,

looks like there are two small errors. I replaced libXlut by libxmu-dev and removed the space from export LDFLAGS="-L/usr/lib/nvidia-358/". I tried to convert a h264 mkv to h265 and at first it looked good but after some time the audio is lost in the h265 file. I used VLC for replay. Any ideas ?

@Bernd Peters

Thanks. The libXlut was definitely a mistake, and checking my history showed I installed libxmu-dev. I also had the space in the LDFLAGS, and it should not matter with the quotes.

About your audio issue, I’m not sure. Try another player, e.g. ffplay, and if it still happens, you could consider filing a bug with ffmpeg.

hi very thx abou this tuto

i have Geforce 9400 GT && ubuntu 15.10:32BIT && intel doual E8600 3.2 Ghz

this tuto will work for me ?

@S265

Sorry, your graphics card does not support H.265 video encoding. You’d need a maxwell 2 GPU, something like GTX960 or greater.

http://www.hardocp.com/images/articles/1463427458xepmrLV68z_2_15_l.gif

http://www.techpowerup.com/reviews/NVIDIA/GeForce_GTX_1080/images/features3.jpg

Nvidia has upgraded the NVENC HEVC hardware encoder to support 10bit hardware encoding in Pascal GPU family.

ffmpeg 3.1 has been released https://git.ffmpeg.org/gitweb/ffmpeg.git/blob/refs/heads/release/3.1:/RELEASE_NOTES, so you don’t need to get the master branch anymore.

Changelog:

– Generic OpenMAX IL H.264 & MPEG4 encoders for Raspberry Pi

– VA-API accelerated H.264/HEVC/MJPEG encoding

– VAAPI-accelerated format conversion and scaling

– Native Android MediaCodec API H.264 decoding

– CUDA (CUVID) HEVC & H.264 decoders

– CUDA accelerated format conversion and scaling

– DXVA2 accelerated HEVC Main10 decoding on Windows

– many new muxers/demuxers

– a variety of new filters

Just dropping this bit of info here… A GTX960 can actually support two nvenc sessions simultaneously. This means you can run two ffmpeg processes in parallel and encode two videos at full speed, thereby doubling the theoretical encoding speed. An example:

```
$ find Videos/ -type f -name \*.avi -print | sed 's/.avi$//' | xargs -n 1 -I@ -P 2 ffmpeg -i "@.avi" -c:a aac -c:v hevc_nvenc "@.mp4"
```

This will transcode an entire directory with .avi files into HEVC/H.265+AAC with the ending .mp4, using Nvidia nvenc and running two ffmpeg processes in parallel (i.e. 200fp/s+200fp/s). I am not sure why they…
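For file names containing spaces or quotes, a NUL-separated variant of the same idea may be more robust. This is only a sketch and not the commenter's exact command: `transcode_dir` and the `FFMPEG` override are hypothetical names, and it relies on the `-print0`, `-0` and `-P` extensions found in GNU and BSD find/xargs.

```shell
#!/bin/sh
# Sketch: transcode every .avi under a directory to HEVC + AAC in .mp4,
# running two ffmpeg processes at a time (one per NVENC session).
# FFMPEG is a hypothetical override so the command can be dry-run,
# e.g. with FFMPEG=echo.
transcode_dir() {
    find "$1" -type f -name '*.avi' -print0 |
        FFMPEG="${FFMPEG:-ffmpeg}" xargs -0 -P 2 -I{} sh -c '
            in=$1
            out=${in%.avi}.mp4
            "$FFMPEG" -nostdin -i "$in" -c:a aac -c:v hevc_nvenc "$out"
        ' sh {}
}

# Usage: transcode_dir Videos/
```

Running `FFMPEG=echo transcode_dir Videos/` first prints the ffmpeg arguments for each file without encoding anything, which is a cheap way to check the file list.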

Some extra performance can be had with the “scale_npp” module, which uses Nvidia CUDA for scaling image sizes. It’s however a bit tricky to use:

```
ffmpeg -i input.avi \
  -c:a aac -b:a 128k \
  -filter "hwupload_cuda,scale_npp=w=852:h=480:format=nv12:interp_algo=lanczos,hwdownload,format=nv12" \
  -c:v hevc_nvenc -b:v 1024k \
  -y output.mp4
```

It actually requires three filter modules. The module “hwupload_cuda” sends frames to the CUDA engine where then “scale_npp” applies scaling (here with the lanczos algorithm) and finally a third module is needed to download the frame. The format of the image also has to be specified in this process (yuv420p also works as format). One can…

Thanks @Sven for those useful tips.

@Jean-Luc Aufranc (CNXSoft)

You’re welcome. When I started looking into HEVC/H.265 encoding was I using my distribution’s default ffmpeg and encoding speed was at around 10-20 fp/s. It took a while to figure out all the bits’n’pieces to make it work. So now I am at 720 fp/s (2×360 fp/s). While I had to pick up all the info from various blogs and other places did your blog provided some good insights and I just want to give some of it back now. 🙂

Very very nice article, it gives me know the opportunity to follow this and use the max out of the GTX960.

As I am a broadcast engineer and not a developer I am having a hard time testing out all this.

However my question is: Can I use the ffmpeg and NVenc solution above to encode live content (coming from eg Decklink card supported by FFmpeg) and stream it out on a UDP TS ?

@Coremans

Short answer: I don’t know.

But ffmpeg supports streaming -> https://trac.ffmpeg.org/wiki/StreamingGuide

So if you have not done so already, try to set it up with software decode first, and then if it works, switch to NVenc instead of the software codecs.

@Coremans You definitely should be able to do this. The Nvidia encoder NVENC has two special settings for low-latency streaming. I’ve been playing around with the Nvidia options and could improve on quality and performance. Turns out that while the lanczos filter is good to preserve details (“interp_algo=lanczos”) does it lower the quality of shaded surfaces. So its basically a tradeoff. I got best results from the super sampling filter (“interp_algo=super”). It appears that super sampling keeps the quality steady frame by frame, which allows the encoder to do the best job and results in a noticeably higher compression (file…

@Coremans Regarding streaming… I used ffmpeg many years ago to stream to Justin.TV (I believe they now call themselves Twitch.TV). What worked for me back then with ffmpeg on Linux was to use “-f flv” as the format with a file name of “-y rtmp://live.justin.tv/app/…”

You will have to look into what your receiving end is exactly capable of supporting. If it can support different input formats then try them all. See which works best.

Thank you guys for all the valuable information. The idea is to find the optimal encoding solution for UHD. If I see how hard it is for the GTX960 to decode HEVC UHD, I think I will start off with H264. The goal is to get the live stream encoded out again via an Mpeg-TS stream in ASI or UDP so it can go into a satellite modulator. FFmpeg can do it out of the box but by implementing the NVenc, I expect this to be even better and to offload the CPU cores. Nice project and this place is…

One more question: We have seen Encode and Transcode in this article, is there anything to be found for NVdecode ?

If I could implement this on ffplay then it could make my HEVC decoding more performant, not ?

Today I got a satellite feed converted to UDP (56Mbit/s) and have it decoded with VDPAU, the 4 cores went to 83%

the nvidia-smi reported 41% GPU usage. (content was HEVC in MPEG2Ts with UHD resolution)

Not bad I think, but if the GPU is using 41%, then there is some headroom #JustThinking

@Coremans The main problem you are facing isn’t so much with the GPU but with the CPU. Whenever the data has to be moved into main memory does this present a bottleneck. Decoding of H.264 with VDPAU seems pretty wide-spread these days. However, VLC and ffmpeg seem not to support H.265 decoding with VDPAU at this time. However the mpv video player already does support it (see mpv.io). mpv plays an U-HD video with 3840×2160 pixels on my computer with the CPU at about 40%. I only have a 1920×1080 display and so it also needs to perform some scaling,…

I’m only getting around 2x realtime doing a 1080p encode using nvenc_hevc on a 1060 (3 gig) using latest snapshot of ffmpeg. nvidia-smi shows about 200m of memory use, about 3% on gpu-util and does show ffmpeg process. Driver is 367.35. ffmpeg is hovering around 97% in top command. This is on an i5 cpu (2500k I think). Any idea why so slow?

@Frank

It looks like ffmpeg may be using software encoding with the CPU instead of encoding with the GPU.

@Frank What does your ffmpeg line look like and what do you use as input?

@Sven

```
~/ffmpeg/bin/ffmpeg -i Test.mkv -vcodec hevc_nvenc output.mp4
```

Some details about the source:

```
Format : Matroska
Format version : Version 2
File size : 20.9 GiB
Duration : 1h 42mn
Overall bit rate : 29.6 Mbps
Writing application : MakeMKV v1.10.0 win(x64-release)
Writing library : libmakemkv v1.10.0 (1.3.3/1.4.4) win(x64-release)

Format : VC-1
Format profile : Advanced@L3
Codec ID : V_MS/VFW/FOURCC / WVC1
Codec ID/Hint : Microsoft
Bit rate : 24.4 Mbps
Width : 1 920 pixels
Height : 1 080 pixels
Display aspect ratio : 16:9
Frame rate mode : Constant
Frame rate : 23.976 fps
Color space : YUV
Chroma…
```

@Jean-Luc Aufranc (CNXSoft)

It does seem that way.. but then why does the task show up in the nvidia-smi utility? Dirver issue? I noticed in the driver notes it said something like “added support for 1060 6gig” could that mean the 3gig maybe isn’t supported completely yet?

@Frank The problem may be with the decoder. Your test file is HD1080 with a 24mbit/s bit rate. This is a lot for a software decoder to decode. You could try the cuvid decoder, which is a hardware decoder only meant for transcoding. It won’t allow for any filters currently and is not yet fully stable. So don’t expect it to work, but be happy if it does:

```
$ ffmpeg -c:v vc1_cuvid -i Test.mkv -c:v hevc_nvenc output.mp4
```

This decodes the VC1 source on your graphics card and sends it to the hardware encoder without going back over the CPU or…
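The choice of cuvid decoder depends on the codec of the input file, so it could be picked automatically. The sketch below is an illustration only: `cuvid_decoder_for` is a hypothetical helper, it covers just the three decoders mentioned on this page (h264_cuvid, hevc_cuvid and vc1_cuvid), and the ffprobe call in the comments assumes ffprobe is installed next to ffmpeg.

```shell
#!/bin/sh
# Hypothetical helper: map a codec name as reported by ffprobe to the
# matching cuvid hardware decoder, or fail for codecs not handled here.
cuvid_decoder_for() {
    case "$1" in
        h264|hevc|vc1) echo "${1}_cuvid" ;;
        *) return 1 ;;
    esac
}

# Usage (not run here, it needs a real input file and an Nvidia GPU):
#   codec=$(ffprobe -v error -select_streams v:0 \
#       -show_entries stream=codec_name \
#       -of default=noprint_wrappers=1:nokey=1 Test.mkv)
#   dec=$(cuvid_decoder_for "$codec") &&
#       ffmpeg -c:v "$dec" -i Test.mkv -c:v hevc_nvenc output.mp4
```

When the helper fails, the simplest fallback is to drop the `-c:v "$dec"` input option and let ffmpeg use its software decoder.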

@Sven

I tried building ffmpeg with cuvid support (--enable-cuvid), but I’m doing something wrong…

```
~/ffmpeg/ffmpeg# PATH="$HOME/ffmpeg/bin:$PATH" PKG_CONFIG_PATH="$HOME/ffmpeg/build/lib/pkgconfig" ./configure --prefix="$HOME/ffmpeg/build" --pkg-config-flags="--static" --extra-cflags="-I$HOME/ffmpeg/build/include" --extra-ldflags="-L$HOME/ffmpeg/build/lib" --extra-cflags="-I/usr/local/cuda-8.0/include" --extra-ldflags="-L/usr/local/cuda-8.0/lib64/stubs" --bindir="$HOME/ffmpeg/bin" --enable-gpl --enable-libass --enable-libfdk-aac --enable-libfreetype --enable-libmp3lame --enable-libopus --enable-libtheora --enable-libvorbis --enable-libvpx --enable-libx264 --enable-libx265 --enable-nonfree --enable-nvenc --enable-cuvid

ERROR: CUVID requires CUDA
```

@Frank

This seems to work.. had to add “--enable-cuda”

```
PATH="$HOME/ffmpeg/bin:$PATH" PKG_CONFIG_PATH="$HOME/ffmpeg/build/lib/pkgconfig" ./configure --prefix="$HOME/ffmpeg/build" --pkg-config-flags="--static" --extra-cflags="-I$HOME/ffmpeg/build/include" --extra-ldflags="-L$HOME/ffmpeg/build/lib" --extra-cflags="-I/usr/local/cuda-8.0/include" --extra-ldflags="-L/usr/local/cuda-8.0/lib64/stubs" --bindir="$HOME/ffmpeg/bin" --enable-gpl --enable-libass --enable-libfdk-aac --enable-libfreetype --enable-libmp3lame --enable-libopus --enable-libtheora --enable-libvorbis --enable-libvpx --enable-libx264 --enable-libx265 --enable-nonfree --enable-nvenc --enable-cuda --enable-cuvid
```

@Frank

I’m getting a little over 5x realtime now! Cool!

@Frank

Turns out I didn’t need the second “--extra-ldflags” and it seems faster now (getting 6x realtime). Cpu is still around low to mid 90% (only 1 core) and nvidia-smi is showing around 17% on gpu. So there might be extra potential here?

@Frank Judging only by the configure arguments, you may be doing something wrong there. Group all --extra-cflags="…" into one and do the same with --extra-ldflags="…". Here is how I’m doing this:

```
prefix=$HOME/ffmpeg
configure --prefix=$prefix --extra-cflags="-I$prefix/include -I/usr/local/cuda/include -I/usr/local/Video_Codec_SDK_7.0.1/Samples/common/inc" --extra-ldflags="-L$prefix/lib -L/usr/local/cuda/lib64" …
```

I don’t know if ffmpeg’s configure script will concatenate multiple --extra flags into one or if they might over-write one another. So I’m putting them all into one argument each and it has worked for me so far. /usr/local/cuda is a symlink to cuda-8.0. I use the $prefix directory to install all the prerequisites (i.e. libx264, libx265, libopus, …) before…

@Sven

Didn’t seem to make much difference.. still getting about 5x encoding time

@Frank, how did you compile ffmpeg with cuvid? I managed to compile it with nvenc and nvresize, but when I try it with cuvid it does not work: it stops at --enable-cuda (Unknown option "--enable-cuda". See ./configure --help for available options.). Yet I have installed cuda-8 and SDK7. Do you know a good tutorial?

Thanks for helping

So has someone found the optimal settings (parameters) to get the most out of realtime encoding (from live source) using CBR ? I was wondering if the rc-lookahead and ME is actually doing something when using CBR.

FYI I’m using UltraHD at 30fps

A H.265 hardware encoder is still a good concept though. This is especially true with HSA etc (which will become more utilised in the future no doubt). Basically with HSA, you can use the best option for the processing of the encoding between the CPU and GPU without loss of quality (supposedly). Of course, bytecopy software would have to be cleverly written to make the best use of this.

@natsu It’s worth noting that this is 4K video, or 4x 1080p… so the equivalent of 40 minute 1080p video in h.265 in 6-7 minutes… that’s way impressive… I’m seeing 260-290fps for my 1080p recodes using nvenc.

For reference, I’m using staxrip, setting nvenc to vbr2 with bitrate settings (23/20/26, 1200, adaptive), a 42-44minute 1080p is generally around 800mb

Hi. When I compare the results with soft/CPU encoding, there is a noticable difference in quality of the resulting video. I’m transcoding x264 video files to HEVC/h265. I’m transcoding in Ubuntu 16.04 and I’m using this ffmpeg build: https://www.johnvansickle.com/ffmpeg/ My video card is a GTX 960, with nVidia drivers 378 installed. Commandline:

CPU encoding:

```
ffmpeg -i in_x264.mkv -c:s copy -c:v libx265 -preset medium -x265-params crf=19 out_hevc.mkv
```

nVidia HW encoding:

```
ffmpeg -i in_x264.mkv -c:s copy -vcodec hevc_nvenc -preset medium -x265-params crf=19 "out_hevc.mkv"
```

I tried with different settings for the nVidia method to increase the quality, but no luck. Like : “-c:s…

It apears that the hardware is still somehow “limited”. It might be better with the newer Pascal chips, but still some limitations there:

https://en.wikipedia.org/wiki/Nvidia_NVENC

Maybe a silly question/thought: Should it be possible to implement a soft encoder (like libx265) in the CUDA cores of those nVidia cards to have the same output quality?

The quality will always degrade with every further compression. If it’s quality you want then stick with the original. Also, without knowing the exact parameters that were used during the compression of the original will it be nearly impossible to get close to the original quality. You basically will have to apply identical parameters for H.265 as have been used for H.264 and hope it produces the least amount of artefacts in the subsequent compression. But without any knowledge over it will ffmpeg simply decode the H.264 video frame by frame and feed it as new input to the H.265…

@Sven

Yes, but when I use soft transcoding by using ffmpeg and the CPU (libx265), the quality is nearly identical as the source x264. I just want to achieve the same quality via hevc_nvenc as I get via the much slower libx265.

But the hardware isn’t yet able to do that. A good thread about this here:

https://forum.doom9.org/showthread.php?t=172618

One of their conclusions:

Posted by JohnLai

There is no perfect fixed function encoder . Intel, AMD and Nvidia fixed function encoders omit a lot of ‘features’. Speed/quality tradeoff.

Stick with software encoders for the best quality per bitrate plus flexibility.

Sorry, Gunter. My experience is a different one and I have good success with the hardware encoders. Neither hardware nor software encoder are perfect by the way. Still, if the quality of the hardware encoder differs as much as you say it does then you must be doing something wrong and I cannot tell you what it is. Perhaps ask on the ffmpeg-users mailing list.

Those hardware encoders are still missing some quality features (like B-frame support, max CE size of 32, …) to achieve high quality results. Maybe the next generation of the will add this (each generation, features are added). Anyway, if you are happy with the results, good for you, but I’m comparing on a freeze frame basis and the difference in quality between soft encoding and the nVidia HW encoder is quite noticable. And I don’t think it is because I’m using bad/wrong parameters (I might tried them all 😉 ) because if you read the Doom9 thread, it’s clear that…

Maybe don’t watch movies frame by frame, because if that’s the only way you can spot differences then you’re proving my point. Good Luck!

@Dego

AMD is DEAD

@Sven: After the additional 6 months, did you have any additional insight or change to your recommendations?

Those were much appreciated, thanks.

@emk2203 Hello emk2203, yes, a few things have in fact improved. Aside from the updates by Nvidia to CUDA and the Video SDK does ffmpeg support a couple more advanced features by the hardware encoders on the newer cards (GTX 10×0-series). See the following for more info on this:

```
ffmpeg -h encoder=nvenc_hevc
ffmpeg -h encoder=nvenc_h264
```

Further does transcoding now work fully in hardware, which in the past didn’t work for me. One needs to specify “-hwaccel cuvid” as well as the hardware decoder “-c:v h264_cuvid” on the command line before sending the stream to the hardware encoder. A full example…

can this card be be use to Transcode Live , to lower bitrate .. and how many channels on HD can be transocde>??

@juan

Yes, you can use it to transcode live streams. By default can most NVidia cards run two encoders simultanously. See here for more details on the number of maximum streams:

https://developer.nvidia.com/video-encode-decode-gpu-support-matrix

It’s also possible to produce multiple output streams from one input stream. See here for an example:

https://developer.nvidia.com/ffmpeg

The example there might be a bit flawed and the second scale_npp filter will also need an “=”-sign in there:

```
ffmpeg -hwaccel cuvid -c:v h264_cuvid -i input.mp4 -vf scale_npp=1280:720 -vcodec h264_nvenc output0.mp4 -vf scale_npp=640:480 -vcodec h264_nvenc output1.mp4
```