Ztachip is an open-source RISC-V accelerator for vision and AI edge applications that runs on low-end FPGA devices or custom ASICs. It is said to perform 20 to 50 times faster than non-accelerated RISC-V implementations, and to outperform RISC-V cores with vector extensions, although no numbers were provided for the latter claim.

Ztachip, pronounced zeta-chip, is not tied to a particular architecture, but the example code features a RISC-V core based on the VexRiscv implementation. It can accelerate common computer vision tasks such as edge detection, optical flow, motion detection, and color conversion, as well as TensorFlow AI models without retraining.
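To give an idea of the kind of workload involved, here is a minimal sketch of one of the tasks listed above, edge detection, using a plain Sobel filter in pure Python. This is not Ztachip code and does not use its API; the function name `sobel_edges`, the threshold value, and the tiny test image are all assumptions made for illustration. Each output pixel needs 18 multiply-accumulate operations (two 3x3 kernels), which is the sort of regular, data-parallel arithmetic that an accelerator is meant to take off the CPU.

```python
def sobel_edges(image, threshold=128):
    """Return a binary edge map for a 2D grayscale image (list of rows).

    Illustrative only: a software reference, not Ztachip's implementation.
    """
    height, width = len(image), len(image[0])
    # Sobel kernels for the horizontal (kx) and vertical (ky) gradients.
    kx = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
    ky = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]
    edges = [[0] * width for _ in range(height)]
    # Border pixels are skipped and stay at 0.
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = gy = 0
            for dy in range(3):
                for dx in range(3):
                    pixel = image[y + dy - 1][x + dx - 1]
                    gx += kx[dy][dx] * pixel
                    gy += ky[dy][dx] * pixel
            # |gx| + |gy| is a cheap approximation of the gradient magnitude.
            if abs(gx) + abs(gy) >= threshold:
                edges[y][x] = 1
    return edges


# Hypothetical 6x6 test image: dark left half, bright right half.
image = [[0, 0, 0, 255, 255, 255] for _ in range(6)]
edges = sobel_edges(image)
for row in edges:
    print("".join("#" if v else "." for v in row))
```

On this test image, the interior rows are marked as edges only in the two columns on either side of the dark-to-bright transition.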

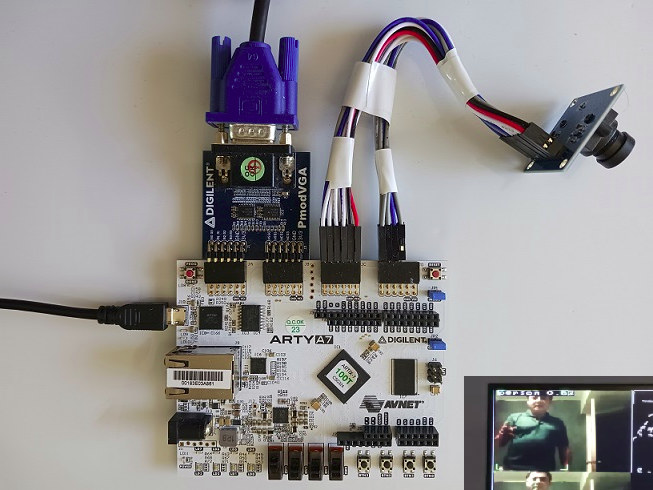

The open-source AI accelerator has been tested on the Digilent Arty A7-100T FPGA board in combination with a PMOD VGA module to connect to a display and an OV7670 VGA camera module. You can build the sample found on GitHub with the free Xilinx Vivado WebPACK edition and flash it to the board with OpenOCD by following the instructions provided there.

The video below demonstrates the Ztachip AI vision accelerator running a multi-tasking demo with object detection, edge detection, Harris corner detection, and motion detection all at the same time.

Vuong Nguyen, the developer, explains that his accelerator is more flexible and supports a broader range of AI workloads than other accelerators, which tend to accelerate only a narrow range of applications, for example convolutional neural networks (CNNs).

The project has been released under an MIT license and is free to use even for commercial applications.

Via Hackaday.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.
